Chapter 1

Introduction

Software documentation is like sex: when it is good, it is very, very good; and when it is bad, it is better than nothing. (Anonymous.)

There are two ways of constructing a software design: one way is to make it so simple that there are obviously no deficiencies; the other way is to make it so complicated that there are no obvious deficiencies. (C.A.R. Hoare)

A computer language is not just a way of getting a computer to perform operations but rather that it is a novel formal medium for expressing ideas about methodology. Thus, programs must be written for people to read, and only incidentally for machines to execute. (The Structure and Interpretation of Computer Programs, H. Abelson, G. Sussman and J. Sussman, 1985.)

If you try to make something beautiful, it is often ugly. If you try to make something useful, it is often beautiful. (Oscar Wilde)1

GATE is an infrastructure for developing and deploying software components that process human language. GATE helps scientists and developers in three ways:

- by specifying an architecture, or organisational structure, for language processing software;

- by providing a framework, or class library, that implements the architecture and can be used to embed language processing capabilities in diverse applications;

- by providing a development environment, built on top of the framework and made up of convenient graphical tools for developing components.

The architecture exploits component-based software development, object orientation and mobile code. The framework and development environment are written in Java and available as open-source free software under the GNU library (or lesser) licence2. GATE uses Unicode throughout [Unicode Consortium 96, Tablan et al. 02], and has been tested on a variety of Slavic, Germanic, Romance, and Indic languages [Maynard et al. 01, Gambäck & Olsson 00, McEnery et al. 00].

From a scientific point of view, GATE contributes to the quantitative measurement of accuracy and to the repeatability of results for verification purposes.

GATE has been in development at the University of Sheffield since 1995 and has been used in a wide variety of research and development projects [Maynard et al. 00]. Version 1 of GATE was released in 1996, was licensed by several hundred organisations, and was used in a wide range of language analysis contexts, including Information Extraction [Cunningham 99b, Appelt 99, Gaizauskas & Wilks 98, Cowie & Lehnert 96] in English, Greek, Spanish, Swedish, German, Italian, French, Bulgarian, Russian, and a number of other languages. Version 4 of the system is available from http://gate.ac.uk/download/.

This book describes how to use GATE to develop language processing components, test their performance and deploy them as parts of other applications. In the rest of this chapter:

- section 1.1 describes the best way to use this book;

- section 1.2 briefly notes that the context of GATE is applied language processing, or Language Engineering;

- section 1.3 gives an overview of developing using GATE;

- section 1.4 describes the structure of the rest of the book;

- section 1.5 lists other publications about GATE.

Note: if you don’t see the component you need in this document, or if we mention a component that you can’t see in the software, contact gate-users@lists.sourceforge.net3 – various components are developed by our collaborators, whom we will be happy to put you in touch with. (Often the process of getting a new component is as simple as typing its URL into GATE; the system will do the rest.)

1.1 How to Use This Text

It is a good idea to read all of this introduction (you can skip sections 1.2 and 1.5 if pressed); then you can either continue wading through the whole thing or just use chapter 3 as a reference and dip into other chapters for more detail as necessary. Chapter 3 gives instructions for completing common tasks with GATE, organised in a FAQ style: details, and the reasoning behind the various aspects of the system, are omitted in this chapter, so where more information is needed refer to later chapters.

The structure of the book as a whole is detailed in section 1.4 below.

1.2 Context

GATE can be thought of as a Software Architecture for Language Engineering [Cunningham 00].

‘Software Architecture’ is used rather loosely here to mean computer infrastructure for software development, including development environments and frameworks, as well as the more usual use of the term to denote a macro-level organisational structure for software systems [Shaw & Garlan 96].

Language Engineering (LE) may be defined as:

…the discipline or act of engineering software systems that perform tasks involving processing human language. Both the construction process and its outputs are measurable and predictable. The literature of the field relates to both application of relevant scientific results and a body of practice. [Cunningham 99a]

The relevant scientific results in this case are the outputs of Computational Linguistics, Natural Language Processing and Artificial Intelligence in general. Unlike these other disciplines, LE, as an engineering discipline, entails predictability, both of the process of constructing LE-based software and of the performance of that software after its completion and deployment in applications.

Some working definitions:

- Computational Linguistics (CL): science of language that uses computation as an investigative tool.

- Natural Language Processing (NLP): science of computation whose subject matter is data structures and algorithms for computer processing of human language.

- Language Engineering (LE): building NLP systems whose cost and outputs are measurable and predictable.

- Software Architecture: macro-level organisational principles for families of systems. In this context the term is also used to mean infrastructure.

- Software Architecture for Language Engineering (SALE): software infrastructure, architecture and development tools for applied CL, NLP and LE.

(Of course the practice of these fields is broader and more complex than these definitions.)

In the scientific endeavours of NLP and CL, GATE’s role is to support experimentation. In this context GATE’s significant features include support for automated measurement (see chapter 13), providing a ‘level playing field’ where results can easily be repeated across different sites and environments, and reducing research overheads in various ways.

1.3 Overview

1.3.1 Developing and Deploying Language Processing Facilities

GATE as an architecture suggests that the elements of software systems that process natural language can usefully be broken down into various types of component, known as resources4. Components are reusable software chunks with well-defined interfaces, and are a popular architectural form, used in Sun’s Java Beans and Microsoft’s .Net, for example. GATE components are specialised types of Java Bean, and come in three flavours:

- LanguageResources (LRs) represent entities such as lexicons, corpora or ontologies;

- ProcessingResources (PRs) represent entities that are primarily algorithmic, such as parsers, generators or n-gram modellers;

- VisualResources (VRs) represent visualisation and editing components that participate in GUIs.

These definitions can be blurred in practice as necessary.

Collectively, the set of resources integrated with GATE is known as CREOLE: a Collection of REusable Objects for Language Engineering. All the resources are packaged as Java Archive (or ‘JAR’) files, plus some XML configuration data. The JAR and XML files are made available to GATE by putting them on a web server, or simply placing them in the local file space. Section 1.3.2 introduces GATE’s built-in resource set.

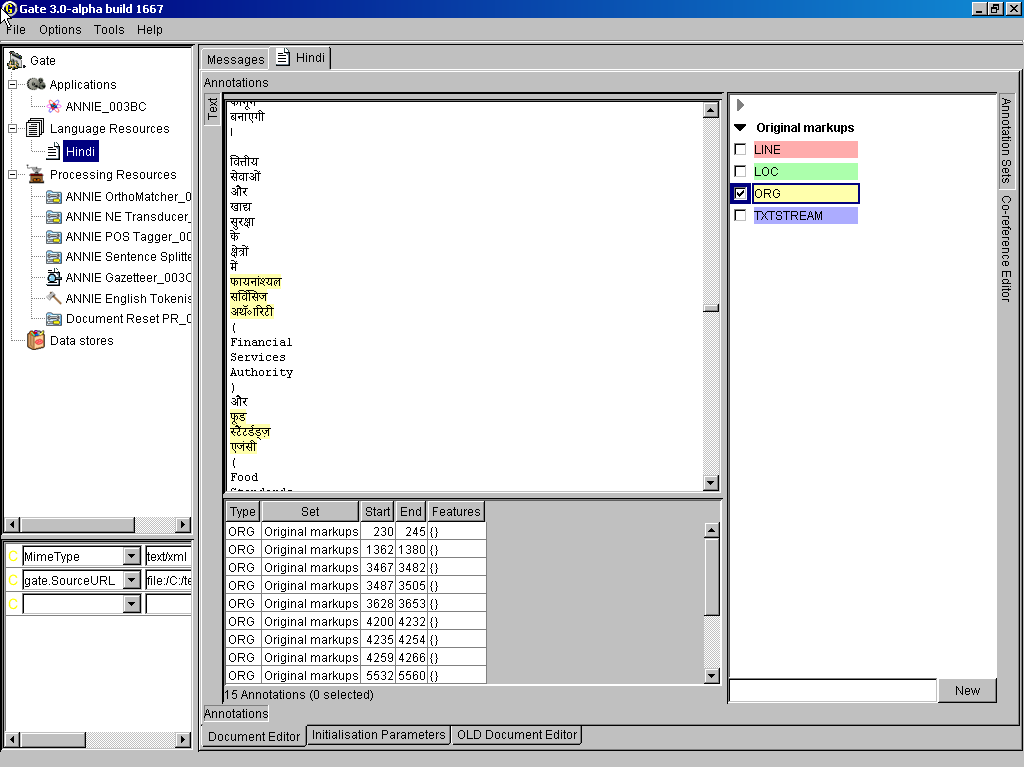

When using GATE to develop language processing functionality for an application, the developer uses the development environment and the framework to construct resources of the three types. This may involve programming, or the development of Language Resources such as grammars that are used by existing Processing Resources, or a mixture of both. The development environment is used for visualisation of the data structures produced and consumed during processing, and for debugging, performance measurement and so on. For example, figure 1.1 is a screenshot of one of the visualisation tools (displaying named-entity extraction results for a Hindi sentence).

The GATE development environment is analogous to systems like Mathematica for mathematicians, or JBuilder for Java programmers: it provides a convenient graphical environment for research and development of language processing software.

When an appropriate set of resources has been developed, it can then be embedded in the target client application using the GATE framework. The framework is supplied as two JAR files.5 To embed GATE-based language processing facilities in an application, these JAR files are all that is needed, along with the JAR files and XML configuration files for the various resources that make up the new facilities.

1.3.2 Built-in Components

GATE includes resources for common LE data structures and algorithms, including documents, corpora and various annotation types, a set of language analysis components for Information Extraction and a range of data visualisation and editing components.

GATE supports documents in a variety of formats including XML, RTF, email, HTML, SGML and plain text. In all cases the format is analysed and converted into a single unified model of annotation. The annotation format is a modified form of the TIPSTER format [Grishman 97] which has been made largely compatible with the Atlas format [Bird & Liberman 99], and uses the now standard mechanism of ‘stand-off markup’. GATE documents, corpora and annotations are stored in databases of various sorts, visualised via the development environment, and accessed at code level via the framework. See chapter 6 for more details of corpora etc.
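To make ‘stand-off markup’ concrete, here is a minimal Java sketch, assuming flat (non-nested, attribute-free) inline tags as input. The classes `StandOffAnnotation` and `StandOffDocument` are hypothetical illustrations, not GATE's own document or annotation classes. After conversion the text itself carries no markup; each annotation records only a type, start and end character offsets, and optional features.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical stand-off annotation: it points into the text by character
// offsets instead of being embedded in it.
final class StandOffAnnotation {
    final String type;
    final int start; // inclusive offset
    final int end;   // exclusive offset
    final Map<String, String> features = new HashMap<>(); // optional attribute/value pairs

    StandOffAnnotation(String type, int start, int end) {
        this.type = type;
        this.start = start;
        this.end = end;
    }

    String coveredText(String text) {
        return text.substring(start, end);
    }
}

final class StandOffDocument {
    final String text;                          // plain text, markup removed
    final List<StandOffAnnotation> annotations; // kept separately from the text

    private StandOffDocument(String text, List<StandOffAnnotation> annotations) {
        this.text = text;
        this.annotations = annotations;
    }

    // Converts flat inline tags such as <Person>Fatima</Person> into plain
    // text plus a separate list of annotations. Nested tags, attributes and
    // entities are not handled by this sketch.
    static StandOffDocument fromInlineMarkup(String marked) {
        Pattern tag = Pattern.compile("<(\\w+)>(.*?)</\\1>");
        Matcher m = tag.matcher(marked);
        StringBuilder plain = new StringBuilder();
        List<StandOffAnnotation> anns = new ArrayList<>();
        int last = 0;
        while (m.find()) {
            plain.append(marked, last, m.start()); // untagged text before this tag
            int start = plain.length();
            plain.append(m.group(2));              // tagged content, tags dropped
            anns.add(new StandOffAnnotation(m.group(1), start, plain.length()));
            last = m.end();
        }
        plain.append(marked.substring(last));      // trailing untagged text
        return new StandOffDocument(plain.toString(), anns);
    }
}
```

For the input `Ask <Person>Fatima</Person> at <Org>Cyberdyne</Org>.` the text becomes `Ask Fatima at Cyberdyne.` and the `Person` annotation spans offsets 4 to 10. Because the text is never altered by annotating it, any number of overlapping annotation layers can coexist.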

A family of Processing Resources for language analysis is included in the shape of ANNIE, A Nearly-New Information Extraction system. These components use finite state techniques to implement various tasks from tokenisation to semantic tagging or verb phrase chunking. All ANNIE components communicate exclusively via GATE’s document and annotation resources. See chapter 8 for more details. See chapter 5 for visual resources. See chapter 9 for other miscellaneous CREOLE resources.

1.3.3 Additional Facilities

Three other facilities in GATE deserve special mention:

- JAPE, a Java Annotation Patterns Engine, provides regular-expression based pattern/action rules over annotations – see chapter 7.

- The ‘annotation diff’ tool in the development environment implements performance metrics such as precision and recall for comparing annotations. Typically a language analysis component developer will mark up some documents by hand and then use these along with the diff tool to automatically measure the performance of the components. See chapter 13.

- GUK, the GATE Unicode Kit, fills in some of the gaps in the JDK’s6 support for Unicode, e.g. by adding input methods for various languages from Urdu to Chinese. See section 3.35 for more details.
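The pattern/action idea behind JAPE can be illustrated in plain Java. The sketch below is a hypothetical stand-in, not JAPE syntax or GATE code: a rule's left-hand side is a sequence of annotation types, and its right-hand side creates one new annotation spanning each match. Real JAPE grammars (chapter 7) support much richer patterns, such as feature constraints and repetition operators, and compile into finite state transducers.

```java
import java.util.ArrayList;
import java.util.List;

// Minimal typed span over a document (offsets: start inclusive, end exclusive).
final class TypedSpan {
    final String type;
    final int start, end;

    TypedSpan(String type, int start, int end) {
        this.type = type;
        this.start = start;
        this.end = end;
    }

    @Override
    public String toString() {
        return type + "[" + start + "," + end + ")";
    }
}

// Hypothetical rule: "if these annotation types occur in sequence,
// create an annotation of outputType covering them".
final class SequenceRule {
    private final List<String> pattern; // left-hand side
    private final String outputType;    // right-hand side

    SequenceRule(List<String> pattern, String outputType) {
        if (pattern.isEmpty()) throw new IllegalArgumentException("pattern must not be empty");
        this.pattern = new ArrayList<>(pattern);
        this.outputType = outputType;
    }

    // Scans annotations (assumed sorted by start offset) left to right and
    // emits one annotation per non-overlapping match of the pattern.
    List<TypedSpan> apply(List<TypedSpan> input) {
        List<TypedSpan> output = new ArrayList<>();
        int i = 0;
        while (i + pattern.size() <= input.size()) {
            boolean match = true;
            for (int k = 0; k < pattern.size(); k++) {
                if (!input.get(i + k).type.equals(pattern.get(k))) {
                    match = false;
                    break;
                }
            }
            if (match) {
                int end = input.get(i + pattern.size() - 1).end;
                output.add(new TypedSpan(outputType, input.get(i).start, end));
                i += pattern.size(); // continue after the matched sequence
            } else {
                i++;
            }
        }
        return output;
    }
}
```

A rule built as `new SequenceRule(List.of("Title", "FirstName"), "Person")` would turn a `Title` annotation followed by a `FirstName` annotation into a single `Person` annotation covering both.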

And by version 4 it will make a mean cup of tea.

1.3.4 An Example

This section gives a very brief example of a typical use of GATE to develop and deploy language processing capabilities in an application, and to generate quantitative results for scientific publication.

Let’s imagine that a developer called Fatima is building an email client7 for Cyberdyne Systems’ large corporate Intranet. In this application she would like to have a language processing system that automatically spots the names of people in the corporation and transforms them into mailto hyperlinks.

A little investigation shows that GATE’s existing components can be tailored to this purpose. Fatima starts up the development environment, and creates a new document containing some example emails. She then loads some processing resources that will do named-entity recognition (a tokeniser, gazetteer and semantic tagger), and creates an application to run these components on the document in sequence. Having processed the emails, she can see the results in one of several viewers for annotations.
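The application Fatima builds runs its processing resources in sequence, with each one reading and adding annotations on the shared document rather than calling the others directly. A minimal Java sketch of that arrangement follows. Every class name is hypothetical (none of them is GATE's API), the gazetteer matches only single tokens, and the "semantic tagger" simply promotes gazetteer lookups to `Person` annotations, whereas ANNIE's real tagger uses JAPE grammars.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical toy classes: components share data only through the
// annotations stored on the document.
final class Doc {
    final String text;
    final List<Span> annotations = new ArrayList<>();

    Doc(String text) { this.text = text; }
}

final class Span {
    final String type;
    final int start, end; // character offsets: start inclusive, end exclusive

    Span(String type, int start, int end) {
        this.type = type;
        this.start = start;
        this.end = end;
    }

    String text(Doc doc) { return doc.text.substring(start, end); }
}

interface Processor {
    void execute(Doc doc);
}

// Adds a Token annotation for every run of letters.
final class Tokeniser implements Processor {
    private static final Pattern WORD = Pattern.compile("\\p{L}+");

    public void execute(Doc doc) {
        Matcher m = WORD.matcher(doc.text);
        while (m.find()) {
            doc.annotations.add(new Span("Token", m.start(), m.end()));
        }
    }
}

// Adds a Lookup annotation over each Token whose text is in the word list.
final class Gazetteer implements Processor {
    private final Set<String> entries;

    Gazetteer(Set<String> entries) { this.entries = entries; }

    public void execute(Doc doc) {
        List<Span> lookups = new ArrayList<>(); // collect first, then add: no modification while iterating
        for (Span s : doc.annotations) {
            if (s.type.equals("Token") && entries.contains(s.text(doc))) {
                lookups.add(new Span("Lookup", s.start, s.end));
            }
        }
        doc.annotations.addAll(lookups);
    }
}

// Stand-in for a grammar-based tagger: promotes every Lookup to a Person.
final class SemanticTagger implements Processor {
    public void execute(Doc doc) {
        List<Span> people = new ArrayList<>();
        for (Span s : doc.annotations) {
            if (s.type.equals("Lookup")) {
                people.add(new Span("Person", s.start, s.end));
            }
        }
        doc.annotations.addAll(people);
    }
}

// Runs each stage over the same document, in order.
final class Pipeline {
    private final List<Processor> stages;

    Pipeline(List<Processor> stages) { this.stages = stages; }

    void run(Doc doc) {
        for (Processor p : stages) {
            p.execute(doc);
        }
    }
}
```

Because the stages only communicate through the document, any one of them (for example the gazetteer) can be swapped for a customised version without changing the others.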

The GATE components are a decent start, but they need to be altered to deal specially with people from Cyberdyne’s personnel database. Therefore Fatima creates new “cyber-” versions of the gazetteer and semantic tagger resources, using the “bootstrap” tool. This tool creates a directory structure on disk that has some Java stub code, a Makefile and an XML configuration file. After several hours struggling with badly written documentation, Fatima manages to compile the stubs and create a JAR file containing the new resources. She tells GATE the URL of these files8, and the system then allows her to load them in the same way that she loaded the built-in resources earlier on.

Fatima then creates a second copy of the email document, and uses the annotation editing facilities to mark up the results that she would like to see her system producing. She saves this and the version that she ran GATE on into her Oracle datastore (set up for her by the Herculean efforts of the Cyberdyne technical support team, who like GATE because it enables them to claim lots of overtime). From now on she can follow this routine:

1. Run her application on the email test corpus.

2. Check the performance of the system by running the ‘annotation diff’ tool to compare her manual results with the system’s results. This gives her both percentage accuracy figures and a graphical display of the differences between the machine and human outputs.

3. Make edits to the code, pattern grammars or gazetteer lists in her resources, and recompile where necessary.

4. Tell GATE to re-initialise the resources.

5. Go to 1.
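The accuracy figures in the checking step come from comparing a key set (hand-made annotations) with a response set (system output). As a sketch, assuming strict matching, where a response annotation is correct only if a key annotation has the same type and identical offsets, precision is correct/response, recall is correct/key, and F1 is 2PR/(P+R). GATE's own tool also distinguishes partially correct (overlapping) matches, which this hypothetical `DiffScores` class omits.

```java
import java.util.HashSet;
import java.util.List;
import java.util.Objects;
import java.util.Set;

// A key or response annotation; two are equal when type and offsets match
// exactly (strict matching).
final class KeyedAnn {
    final String type;
    final int start, end;

    KeyedAnn(String type, int start, int end) {
        this.type = type;
        this.start = start;
        this.end = end;
    }

    @Override
    public boolean equals(Object o) {
        if (!(o instanceof KeyedAnn)) return false;
        KeyedAnn a = (KeyedAnn) o;
        return type.equals(a.type) && start == a.start && end == a.end;
    }

    @Override
    public int hashCode() { return Objects.hash(type, start, end); }
}

final class DiffScores {
    final double precision, recall, f1;

    private DiffScores(double precision, double recall, double f1) {
        this.precision = precision;
        this.recall = recall;
        this.f1 = f1;
    }

    // key: hand-made annotations; response: system output.
    static DiffScores compare(List<KeyedAnn> key, List<KeyedAnn> response) {
        Set<KeyedAnn> keySet = new HashSet<>(key); // duplicate annotations are ignored
        Set<KeyedAnn> responseSet = new HashSet<>(response);
        int correct = 0;
        for (KeyedAnn a : responseSet) {
            if (keySet.contains(a)) correct++;
        }
        double p = responseSet.isEmpty() ? 0.0 : (double) correct / responseSet.size();
        double r = keySet.isEmpty() ? 0.0 : (double) correct / keySet.size();
        double f = (p + r == 0.0) ? 0.0 : 2 * p * r / (p + r);
        return new DiffScores(p, r, f);
    }
}
```

For example, with three key `Person` annotations and four response annotations of which two match exactly, precision is 2/4 = 0.5, recall is 2/3 and F1 is 4/7 (about 0.571).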

To make the alterations that she requires, Fatima re-implements the ANNIE gazetteer so that it regenerates itself from the local personnel data. She then alters the pattern grammar in the semantic tagger to prioritise recognition of names from that source. This latter job involves learning the JAPE language (see chapter 7), but as this is based on regular expressions it isn’t too difficult.
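Fatima's re-implemented gazetteer might look something like the following hypothetical Java sketch, which regenerates its lookup table from (name, email) pairs and uses it to turn recognised names into mailto links. The personnel record format, the addresses and the class name are invented for illustration. Matching is longest-first over space-separated words and ignores punctuation, which a real gazetteer would have to handle.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class PersonnelGazetteer {
    private final Map<String, String> emailByName = new HashMap<>();
    private int maxWords = 0; // length in words of the longest known name

    // Regenerates the lookup table from (full name, email) pairs,
    // e.g. an export of the personnel database.
    void rebuild(Map<String, String> personnel) {
        emailByName.clear();
        maxWords = 0;
        for (Map.Entry<String, String> e : personnel.entrySet()) {
            String name = String.join(" ", e.getKey().trim().split("\\s+")); // normalise spacing
            if (name.isEmpty()) continue;
            emailByName.put(name, e.getValue());
            maxWords = Math.max(maxWords, name.split(" ").length);
        }
    }

    // Wraps each known name in a mailto link, preferring the longest name
    // that starts at the current word. Punctuation is not handled.
    String linkify(String text) {
        String[] words = text.split(" ");
        List<String> out = new ArrayList<>();
        int i = 0;
        while (i < words.length) {
            int matched = 0;
            for (int n = Math.min(maxWords, words.length - i); n >= 1; n--) {
                String candidate = String.join(" ", Arrays.copyOfRange(words, i, i + n));
                if (emailByName.containsKey(candidate)) {
                    matched = n;
                    break;
                }
            }
            if (matched > 0) {
                String name = String.join(" ", Arrays.copyOfRange(words, i, i + matched));
                out.add("<a href=\"mailto:" + emailByName.get(name) + "\">" + name + "</a>");
                i += matched;
            } else {
                out.add(words[i]);
                i++;
            }
        }
        return String.join(" ", out);
    }
}
```

Preferring the longest match means that in "Ask Ann Lee or Lee today" the full name "Ann Lee" is linked as one person, while the later "Lee" is linked separately only if "Lee" is itself a known name.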

Eventually the system is running nicely, and her accuracy is 93% (there are still some problem cases, e.g. when people use nicknames, but the performance is good enough for production use). Now Fatima stops using the GATE development environment and works instead on embedding the new components in her email application. This application is written in Java, so embedding is very easy9: the two GATE JAR files are added to the project CLASSPATH, the new components are placed on a web server, and with a little code to do initialisation, loading of components and so on, the job is finished in half a day – the code to talk to GATE takes up only around 150 lines of the eventual application, most of which is just copied from the example in the sheffield.examples.StandAloneAnnie class.

Because Fatima is worried about Cyberdyne’s unethical policy of developing Skynet to help the large corporates of the West strengthen their strangle-hold over the World, she wants to get a job as an academic instead (so that her conscience will only have to cope with the torture of students, as opposed to humanity). She takes the accuracy measures that she has attained for her system and writes a paper for the Journal of Nasturtium Logarithm Encitement describing the approach used and the results obtained. Because she used GATE for development, she can cite the repeatability of her experiments and offer access to example binary versions of her software by putting them on an external web server.

And everybody lived happily ever after.

1.4 Structure of the Book

The material presented in this book ranges from the conceptual (e.g. ‘what is software architecture?’) to practical instructions for programmers (e.g. how to deal with GATE exceptions) and linguists (e.g. how to write a pattern grammar). This diversity is something of an organisational challenge. Our (no doubt imperfect) solution is to collect specific instructions for ‘how to do X’ in a separate chapter (3). Other chapters give a more discursive presentation. In order to understand the whole system you must, unfortunately, read much of the book; in order to get help with a particular task, however, look first in chapter 3 and refer to other material as necessary.

The other chapters:

Chapter 4 describes the GATE architecture’s component-based model of language processing, the lifecycle of GATE components, and how they can be grouped into applications and stored in databases and files.

Chapter 5 describes the set of Visual Resources that are bundled with GATE.

Chapter 6 describes GATE’s model of document formats, annotated documents, annotation types, and corpora (sets of documents). It also covers GATE’s facilities for reading and writing in the XML data interchange language.

Chapter 7 describes JAPE, a pattern/action rule language based on regular expressions over annotations on documents. JAPE grammars compile into cascaded finite state transducers.

Chapter 8 describes ANNIE, a pipelined Information Extraction system which is supplied with GATE.

Chapter 9 describes CREOLE resources bundled with the system that don’t fit into the previous categories.

Chapter 10 describes processing resources and language resources for working with ontologies.

Chapter 11 describes a machine learning layer specifically targeted at NLP tasks including text classification, chunk learning (e.g. for named entity recognition) and relation learning.

Chapter 13 describes how to measure the performance of language analysis components.

Chapter 14 describes the data store security model.

Appendix A discusses the design of the system.

Appendix B describes the implementation details and formal definitions of the JAPE annotation patterns language.

Appendix C describes in some detail the JAPE pattern grammars that are used in ANNIE for named-entity recognition.

1.5 Further Reading

Lots of documentation lives on the GATE web server, including:

- the concise application developer’s guide (with emphasis on using the GATE API);

- a guide to using GATE for manual annotation;

- movies of the system in operation;

- the main system documentation tree;

- JavaDoc API documentation;

- HTML of the source code;

- parts of the requirements analysis that version 3 is based on.

For more details about Sheffield University’s work in human language processing see the NLP group pages or A Definition and Short History of Language Engineering ([Cunningham 99a]). For more details about Information Extraction see IE, a User Guide or the GATE IE pages.

A list of publications on GATE and projects that use it (some of which are available on-line):

- [Cunningham 05]

- is an overview of the field of Information Extraction for the 2nd Edition of the Encyclopaedia of Language and Linguistics.

- [Cunningham & Bontcheva 05]

- is an overview of the field of Software Architecture for Language Engineering for the 2nd Edition of the Encyclopaedia of Language and Linguistics.

- [Li et al. 04]

- (Machine Learning Workshop 2004) describes an SVM-based learning algorithm for IE using GATE.

- [Wood et al. 04]

- (NLDB 2004) looks at ontology-based IE from parallel texts.

- [Cunningham & Scott 04b]

- (JNLE) is a collection of papers covering many important areas of Software Architecture for Language Engineering.

- [Cunningham & Scott 04a]

- (JNLE) is the introduction to the above collection.

- [Bontcheva 04]

- (LREC 2004) describes lexical and ontological resources in GATE used for Natural Language Generation.

- [Bontcheva et al. 04]

- (JNLE) discusses developments in GATE in the early naughties.

- [Maynard et al. 04a]

- (LREC 2004) presents algorithms for the automatic induction of gazetteer lists from multi-language data.

- [Maynard et al. 04c]

- (AIMSA 2004) presents automatic creation and monitoring of semantic metadata in a dynamic knowledge portal.

- [Maynard et al. 04b]

- (ESWS 2004) discusses ontology-based IE in the hTechSight project.

- [Dimitrov et al. 04]

- (Anaphora Processing) gives a lightweight method for named entity coreference resolution.

- [Kiryakov 03]

- (Technical Report) discusses semantic web technology in the context of multimedia indexing and search.

- [Tablan et al. 03]

- (HLT-NAACL 2003) presents the OLLIE on-line learning for IE system.

- [Wood et al. 03]

- (Recent Advances in Natural Language Processing 2003) discusses using parallel texts to improve IE recall.

- [Maynard et al. 03a]

- (Recent Advances in Natural Language Processing 2003) looks at semantics and named-entity extraction.

- [Maynard et al. 03b]

- (ACL Workshop 2003) describes NE extraction without training data on a language you don’t speak (!).

- [Maynard et al. ]

- (EACL 2003) looks at the distinction between information and content extraction.

- [Manov et al. 03]

- (HLT-NAACL 2003) describes experiments with geographic knowledge for IE.

- [Saggion et al. 03a]

- (EACL 2003) discusses robust, generic and query-based summarisation.

- [Saggion et al. 03c]

- (EACL 2003) discusses event co-reference in the MUMIS project.

- [Saggion et al. 03b]

- (Data and Knowledge Engineering) discusses multimedia indexing and search from multisource multilingual data.

- [Cunningham et al. 03]

- (Corpus Linguistics 2003) describes GATE as a tool for collaborative corpus annotation.

- [Bontcheva et al. 03]

- (NLPXML-2003) looks at GATE for the semantic web.

- [Dimitrov 02a, Dimitrov et al. 02]

- (DAARC 2002, MSc thesis) discuss lightweight coreference methods.

- [Lal 02]

- (Master's thesis) looks at text summarisation using GATE.

- [Lal & Ruger 02]

- (ACL 2002) looks at text summarisation using GATE.

- [Cunningham et al. 02]

- (ACL 2002) describes the GATE framework and graphical development environment as a tool for robust NLP applications.

- [Bontcheva et al. 02b]

- (NLIS 2002) discusses how GATE can be used to create HLT modules for use in information systems.

- [Tablan et al. 02]

- (LREC 2002) describes GATE’s enhanced Unicode support.

- [Maynard et al. 02a]

- (ACL 2002 Summarisation Workshop) describes using GATE to build a portable IE-based summarisation system in the domain of health and safety.

- [Maynard et al. 02c]

- (Nordic Language Technology) describes various Named Entity recognition projects developed at Sheffield using GATE.

- [Maynard et al. 02b]

- (AIMSA 2002) describes the adaptation of the core ANNIE modules within GATE to the ACE (Automatic Content Extraction) tasks.

- [Maynard et al. 02d]

- (JNLE) describes robustness and predictability in LE systems, and presents GATE as an example of a system which contributes to robustness and to low overhead systems development.

- [Bontcheva et al. 02c], [Dimitrov 02a] and [Dimitrov 02b]

- (TALN 2002, DAARC 2002, MSc thesis) describe the shallow named entity coreference modules in GATE: the orthomatcher, which resolves orthographic coreference between names, and the pronoun resolution module.

- [Bontcheva et al. 02a]

- (ACL 2002 Workshop) describes how GATE can be used as an environment for teaching NLP, with examples of and ideas for future student projects developed within GATE.

- [Pastra et al. 02]

- (LREC 2002) discusses the feasibility of grammar reuse in applications using ANNIE modules.

- [Baker et al. 02]

- (LREC 2002) report results from the EMILLE Indic languages corpus collection and processing project.

- [Saggion et al. 02b] and [Saggion et al. 02a]

- (LREC 2002, SPLPT 2002) describe how ANNIE modules have been adapted to extract information for indexing multimedia material.

- [Maynard et al. 01]

- (RANLP 2001) discusses a project using ANNIE for named-entity recognition across wide varieties of text type and genre.

- [Cunningham 00]

- (PhD thesis) defines the field of Software Architecture for Language Engineering, reviews previous work in the area, presents a requirements analysis for such systems (which was used as the basis for designing GATE versions 2 and 3), and evaluates the strengths and weaknesses of GATE version 1.

- [Cunningham 02]

- (Computers and the Humanities) describes the philosophy and motivation behind the system, describes GATE version 1 and how well it lived up to its design brief.

- [McEnery et al. 00]

- (Vivek) presents the EMILLE project in the context of which GATE’s Unicode support for Indic languages has been developed.

- [Cunningham et al. 00d] and [Cunningham 99c]

- (technical reports) document early versions of JAPE (superseded by the present document).

- [Cunningham et al. 00a], [Cunningham et al. 98a] and [Peters et al. 98]

- (OntoLex 2000, LREC 1998) present GATE’s model of Language Resources, their access and distribution.

- [Maynard et al. 00]

- (technical report) surveys users of GATE up to mid-2000.

- [Cunningham et al. 00c] and [Cunningham et al. 99]

- (COLING 2000, AISB 1999) summarise experiences with GATE version 1.

- [Cunningham et al. 00b]

- (LREC 2000) taxonomises Language Engineering components and discusses the requirements analysis for GATE version 2.

- [Bontcheva et al. 00] and [Brugman et al. 99]

- (COLING 2000, technical report) describe a prototype of GATE version 2 that integrated with the EUDICO multimedia markup tool from the Max Planck Institute.

- [Gambäck & Olsson 00]

- (LREC 2000) discusses experiences in the Svensk project, which used GATE version 1 to develop a reusable toolbox of Swedish language processing components.

- [Cunningham 99a]

- (JNLE) reviews and synthesises definitions of Language Engineering.

- [Stevenson et al. 98] and [Cunningham et al. 98b]

- (ECAI 1998, NeMLaP 1998) report work on implementing a word sense tagger in GATE version 1.

- [Cunningham et al. 97b]

- (ANLP 1997) presents motivation for GATE and GATE-like infrastructural systems for Language Engineering.

- [Gaizauskas et al. 96b, Cunningham et al. 97a, Cunningham et al. 96e]

- (ICTAI 1996, TIPSTER 1997, NeMLaP 1996) report work on GATE version 1.

- [Cunningham et al. 96c, Cunningham et al. 96d, Cunningham et al. 95]

- (COLING 1996, AISB Workshop 1996, technical report) report early work on GATE version 1.

- [Cunningham et al. 96b]

- (TIPSTER) discusses a selection of projects in Sheffield using GATE version 1 and the TIPSTER architecture it implemented.

- [Cunningham et al. 96a]

- (manual) was the guide to developing CREOLE components for GATE version 1.

- [Gaizauskas et al. 96a]

- (manual) was the user guide for GATE version 1.

- [Humphreys et al. 96]

- (manual) describes the language processing components distributed with GATE version 1.

- [Cunningham 94, Cunningham et al. 94]

- (NeMLaP 1994, technical report) argue that software engineering issues such as reuse and framework construction are important for language processing R&D.

- [Dowman et al. 05b]

- (World Wide Web Conference Paper) describes how the Web is used to assist the annotation and indexing of broadcast news.

- [Dowman et al. 05a]

- (Euro Interactive Television Conference Paper) describes a system which can use material from the Internet to augment television news broadcasts.

- [Dowman et al. 05c]

- (Second European Semantic Web Conference Paper) describes a system that semantically annotates television news broadcasts, using news websites as a resource to aid the annotation process.

- [Li et al. 05a]

- (Proceedings of the Sheffield Machine Learning Workshop) describes an SVM-based IE system which uses SVM with uneven margins as its learning component and GATE as its NLP processing module.

- [Li et al. 05b]

- (Proceedings of the Ninth Conference on Computational Natural Language Learning (CoNLL-2005)) uses uneven-margins versions of two popular learning algorithms, SVM and Perceptron, to deal with the imbalanced classification problems that arise in IE.

- [Li et al. 05c]

- (Proceedings of the Fourth SIGHAN Workshop on Chinese Language Processing (Sighan-05)) uses Perceptron learning, a simple, fast and effective learning algorithm, for Chinese word segmentation.

- [Aswani et al. 05]

- (Proceedings of the Fifth International Conference on Recent Advances in Natural Language Processing (RANLP2005)) describes a full-featured annotation indexing and search engine developed as part of GATE. It is powered by Apache Lucene and indexes a variety of document formats supported by GATE.

- [Wang et al. 05]

- (Proceedings of the 2005 IEEE/WIC/ACM International Conference on Web Intelligence (WI 2005)) Extracting a Domain Ontology from Linguistic Resource Based on Relatedness Measurements.

- [Ursu et al. 05]

- (Proceedings of the 2nd European Workshop on the Integration of Knowledge, Semantic and Digital Media Technologies (EWIMT 2005)) Digital Media Preservation and Access through Semantically Enhanced Web-Annotation.

- [Polajnar et al. 05]

- (University of Sheffield Research Memorandum CS-05-10) User-Friendly Ontology Authoring Using a Controlled Language.

- [Aswani et al. 06]

- (Proceedings of the 5th International Semantic Web Conference (ISWC2006)) addresses the problem of disambiguating author instances in an ontology, describing a web-based approach that uses features such as publication titles, abstracts, initials and co-authorship information.

1These were, at least, our ideals; of course we didn’t completely live up to them…

2This is a less restrictive form of the main GNU licence (the GPL), which means that GATE can be embedded in commercial products if required.

3Follow the ‘support’ link from the GATE web server to subscribe to the mailing list.

4The terms ‘resource’ and ‘component’ are synonymous in this context. ‘Resource’ is used instead of just ‘component’ because it is a common term in the literature of the field: cf. the Language Resources and Evaluation conference series [LREC-1 98, LREC-2 00].

5The main JAR file (gate.jar) supplies the framework, built-in resources and various 3rd-party libraries; the second file (guk.jar, the GATE Unicode Kit) contains Unicode support (e.g. additional input methods for languages not currently supported by the JDK). They are separate because the latter has to be a Java extension with a privileged security profile.

6JDK: Java Development Kit, Sun Microsystems’ Java implementation. Unicode support is being actively improved by Sun, but at the time of writing many languages are still unsupported. In fact, Unicode itself doesn’t support all languages, e.g. Sylheti; hopefully this will change in time.

7Perhaps because Outlook Express trashed her mail folder again, or because she got tired of Microsoft-specific viruses and hadn’t heard of Netscape or Emacs.

8While developing, she uses a file:/… URL; for deployment she can put them on a web server.

9Languages other than Java require an additional interface layer, such as JNI (the Java Native Interface), which lets code written in C or C++ interact with Java.