Developing Language Processing Components with GATE

Version 5 (a User Guide)

For GATE version 5.0

(built May 28, 2009)

Hamish Cunningham

Diana Maynard

Kalina Bontcheva

Valentin Tablan

Cristian Ursu

Marin Dimitrov

Mike Dowman

Niraj Aswani

Ian Roberts

Yaoyong Li

Andrey Shafirin

Adam Funk

©The University of Sheffield 2001-2009

http://gate.ac.uk/

Work on GATE has been partly supported by EPSRC grants GR/K25267 (Large-Scale Information Extraction), GR/M31699 (GATE 2), RA007940 (EMILLE), GR/N15764/01 (AKT) and GR/R85150/01 (MIAKT), AHRB grant APN16396 (ETCSL/GATE), and several EU-funded projects (SEKT, TAO, NeOn, MediaCampaign, MUSING, KnowledgeWeb, PrestoSpace, h-TechSight, enIRaF).

Contents

1.1 How to Use This Text

1.2 Context

1.3 Overview

1.3.1 Developing and Deploying Language Processing Facilities

1.3.2 Built-in Components

1.3.3 Additional Facilities

1.3.4 An Example

1.4 Structure of the Book

1.5 Further Reading

2 Change Log

2.1 Version 5.0 (May 2009)

2.1.1 Major new features

2.1.2 Other new features and improvements

2.1.3 Specific bug fixes

2.2 Version 4.0 (July 2007)

2.2.1 Major new features

2.2.2 Other new features and improvements

2.2.3 Bug fixes and optimizations

2.3 Version 3.1 (April 2006)

2.3.1 Major new features

2.3.2 Other new features and improvements

2.3.3 Bug fixes

2.4 January 2005

2.5 December 2004

2.6 September 2004

2.7 Version 3 Beta 1 (August 2004)

2.8 July 2004

2.9 June 2004

2.10 April 2004

2.11 March 2004

2.12 Version 2.2 – August 2003

2.13 Version 2.1 – February 2003

2.14 June 2002

3 How To…

3.1 Download GATE*

3.2 Install and Run GATE*

3.2.1 The Easy Way

3.2.2 The Hard Way (1)

3.2.3 The Hard Way (2): Subversion

3.3 [D,F] Use System Properties with GATE

3.4 [D,F] Use (CREOLE) Plug-ins

3.5 Troubleshooting

3.6 [D] Get Started with the GUI*

3.7 [D,F] Configure GATE

3.7.1 [F] Save Config Data to gate.xml

3.8 Build GATE

3.9 [D] Use GATE with Maven or JPF

3.10 [D,F] Create a New (CREOLE) Resource

3.11 [F] Instantiate (CREOLE) Resources

3.12 [D] Load Resources: document, tokenizer...*

3.12.1 Loading Language Resources: document, corpora...

3.12.2 Loading Processing Resources: tokenizer, gazetteer...

3.12.3 Loading and Processing Large Corpora

3.13 [D,F] Configure (CREOLE) Resources

3.14 [D] Create and Run an Application*

3.15 [D] Run PRs Conditionally on Document Features

3.16 [D] View Annotations*

3.17 [D] Do Information Extraction with ANNIE*

3.18 [D] Modify ANNIE

3.19 [D] Create and Edit Annotations*

3.19.1 Schema-driven editing

3.20 [D] Saving annotations*

3.21 [D,F] Create a New Annotation Schema

3.22 [D] Save and Restore LRs in Data Stores

3.23 [D] Save Resource Parameter State to File

3.24 [D] Save an application with its resources (e.g. GATE Teamware)

3.25 [D,F] Perform Evaluation with the AnnotationDiff tool

3.26 [D] Use the Corpus Benchmark Evaluation tool

3.26.1 GUI mode

3.26.2 How to define the properties of the benchmark tool

3.27 [D] Write JAPE Grammars

3.28 [F] Embed NLE in other Applications

3.29 [F] Use GATE within a Spring application

3.30 [F] Use GATE within a Tomcat Web Application

3.30.1 Recommended Directory Structure

3.30.2 Configuration files

3.30.3 Initialization code

3.31 [F] Use GATE in a Multithreaded Environment

3.32 [D,F] Add support for a new document format

3.33 [D] Dump Results to File

3.34 [D] Stop GUI ‘Freezing’ on Linux

3.35 [D] Stop GUI Crashing on Linux

3.36 [D] Stop GATE Restoring GUI Sessions/Options

3.37 Work with Unicode

3.38 Work with Oracle and PostgreSQL

3.39 Annotate using ontologies

4 CREOLE: the GATE Component Model

4.1 The Web and CREOLE

4.2 Java Beans: a Simple Component Architecture

4.3 The GATE Framework

4.4 Language Resources and Processing Resources

4.5 The Lifecycle of a CREOLE Resource

4.6 Processing Resources and Applications

4.7 Language Resources and Datastores

4.8 Built-in CREOLE Resources

4.9 CREOLE Resource Configuration

4.9.1 Configuration with XML

4.9.2 Configuring resources using annotations

4.9.3 Mixing the configuration styles

5 Visual CREOLE

5.1 Gazetteer Visual Resource - GAZE

5.1.1 Running Modes

5.1.2 Loading a Gazetteer

5.1.3 Linear Definition Pane

5.1.4 Linear Definition Toolbar

5.1.5 Operations on Linear Definition Nodes

5.1.6 Gazetteer List Pane

5.1.7 Mapping Definition Pane

5.2 Ontogazetteer

5.2.1 Gazetteer Lists Editor and Mapper

5.2.2 Ontogazetteer Editor

5.3 The Document Editor

5.3.1 The Annotation Sets View

5.3.2 The Annotations List View

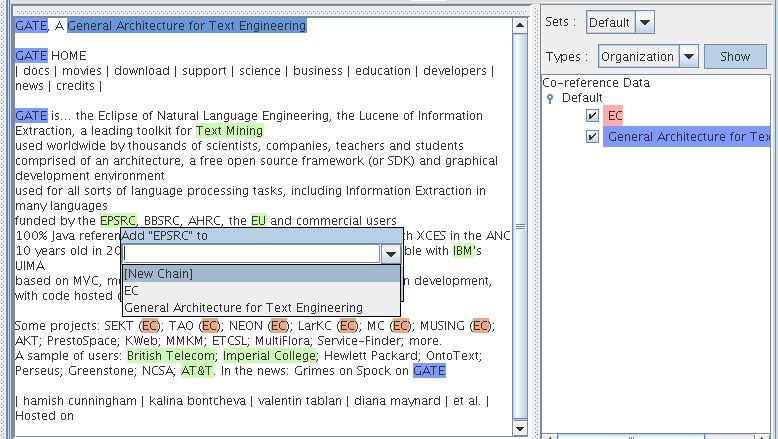

5.3.3 The Co-reference Editor

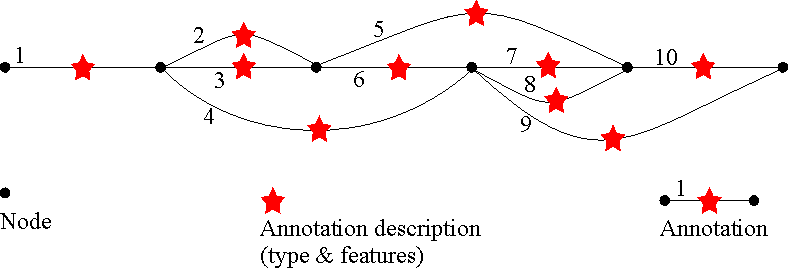

6 Language Resources: Corpora, Documents and Annotations

6.1 Features: Simple Attribute/Value Data

6.2 Corpora: Sets of Documents plus Features

6.3 Documents: Content plus Annotations plus Features

6.4 Annotations: Directed Acyclic Graphs

6.4.1 Annotation Schemas

6.4.2 Examples of Annotated Documents

6.4.3 Creating, Viewing and Editing Diverse Annotation Types

6.5 Document Formats

6.5.1 Detecting the right reader

6.5.2 XML

6.5.3 HTML

6.5.4 SGML

6.5.5 Plain text

6.5.6 RTF

6.5.7 Email

6.6 XML Input/Output

7 JAPE: Regular Expressions Over Annotations

7.1 Matching operators in detail

7.1.1 Equality operators (“==” and “!=”)

7.1.2 Comparison operators (“<”, “<=”, “>=” and “>”)

7.1.3 Regular expression operators (“=~”, “==~”, “!~” and “!=~”)

7.1.4 Contextual operators (“contains” and “within”)

7.2 Use of Context

7.3 Use of Priority

7.4 Use of negation

7.5 Useful tricks

7.6 Ontology aware grammar transduction

7.7 Using Java code in JAPE rules

7.7.1 Adding a feature to the document

7.7.2 Using named blocks

7.7.3 Java RHS overview

7.8 Optimising for speed

7.9 Serializing JAPE Transducer

7.9.1 How to serialize?

7.9.2 How to use the serialized grammar file?

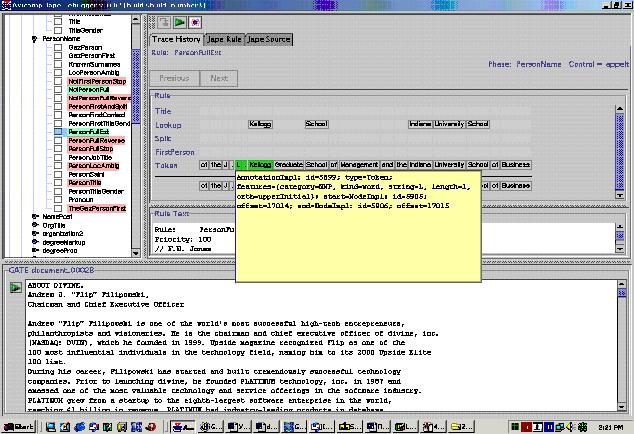

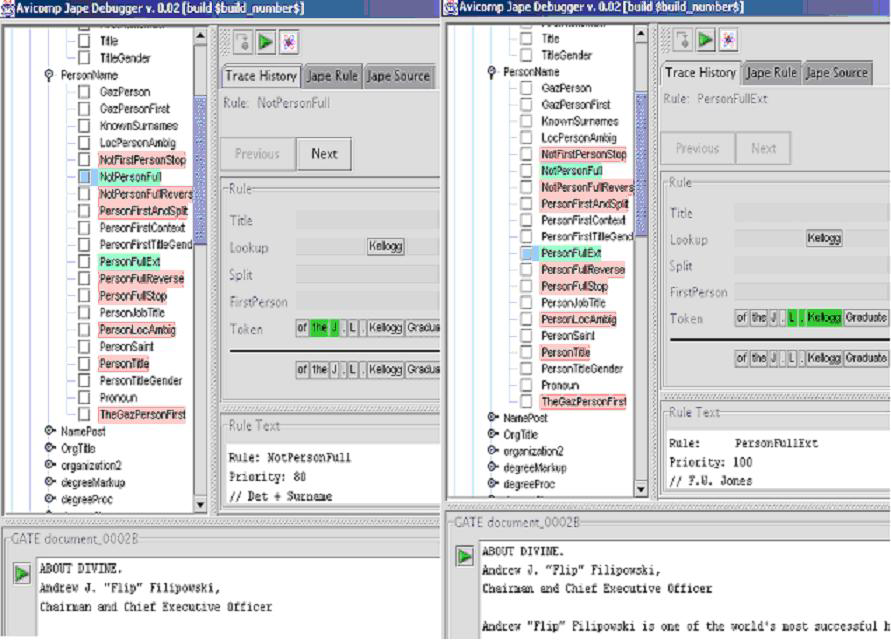

7.10 The JAPE Debugger

7.10.1 Debugger GUI

7.10.2 Using the Debugger

7.10.3 Known Bugs

7.11 Notes for Montreal Transducer users

8 ANNIE: a Nearly-New Information Extraction System

8.1 Tokeniser

8.1.1 Tokeniser Rules

8.1.2 Token Types

8.1.3 English Tokeniser

8.2 Gazetteer

8.3 Sentence Splitter

8.4 RegEx Sentence Splitter

8.5 Part of Speech Tagger

8.6 Semantic Tagger

8.7 Orthographic Coreference (OrthoMatcher)

8.7.1 GATE Interface

8.7.2 Resources

8.7.3 Processing

8.8 Pronominal Coreference

8.8.1 Quoted Speech Submodule

8.8.2 Pleonastic It submodule

8.8.3 Pronominal Resolution Submodule

8.8.4 Detailed description of the algorithm

8.9 A Walk-Through Example

8.9.1 Step 1 - Tokenisation

8.9.2 Step 2 - List Lookup

8.9.3 Step 3 - Grammar Rules

9 (More CREOLE) Plugins

9.1 Document Reset

9.2 Verb Group Chunker

9.3 Noun Phrase Chunker

9.3.1 Differences from the Original

9.3.2 Using the Chunker

9.4 OntoText Gazetteer

9.4.1 Prerequisites

9.4.2 Setup

9.5 Flexible Gazetteer

9.6 Gazetteer List Collector

9.7 Tree Tagger

9.7.1 POS tags

9.8 Stemmer

9.8.1 Algorithms

9.9 GATE Morphological Analyzer

9.9.1 Rule File

9.10 MiniPar Parser

9.10.1 Platform Supported

9.10.2 Resources

9.10.3 Parameters

9.10.4 Prerequisites

9.10.5 Grammatical Relationships

9.11 RASP Parser

9.12 SUPPLE Parser (formerly BuChart)

9.12.1 Requirements

9.12.2 Building SUPPLE

9.12.3 Running the parser in GATE

9.12.4 Viewing the parse tree

9.12.5 System properties

9.12.6 Configuration files

9.12.7 Parser and Grammar

9.12.8 Mapping Named Entities

9.12.9 Upgrading from BuChart to SUPPLE

9.13 Stanford Parser

9.13.1 Input requirements

9.13.2 Initialization parameters

9.13.3 Runtime parameters

9.14 Montreal Transducer

9.14.1 Main Improvements

9.14.2 Main Bug fixes

9.15 Language Plugins

9.15.1 French Plugin

9.15.2 German Plugin

9.15.3 Romanian Plugin

9.15.4 Arabic Plugin

9.15.5 Chinese Plugin

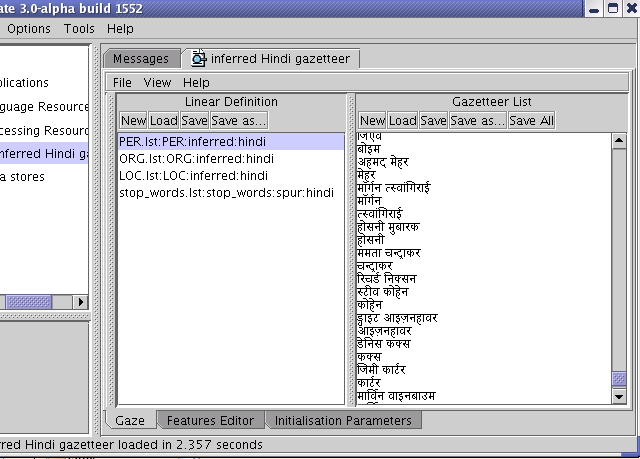

9.15.6 Hindi Plugin

9.16 Chemistry Tagger

9.16.1 Using the tagger

9.17 Flexible Exporter

9.18 Annotation Set Transfer

9.19 Information Retrieval in GATE

9.19.1 Using the IR functionality in GATE

9.19.2 Using the IR API

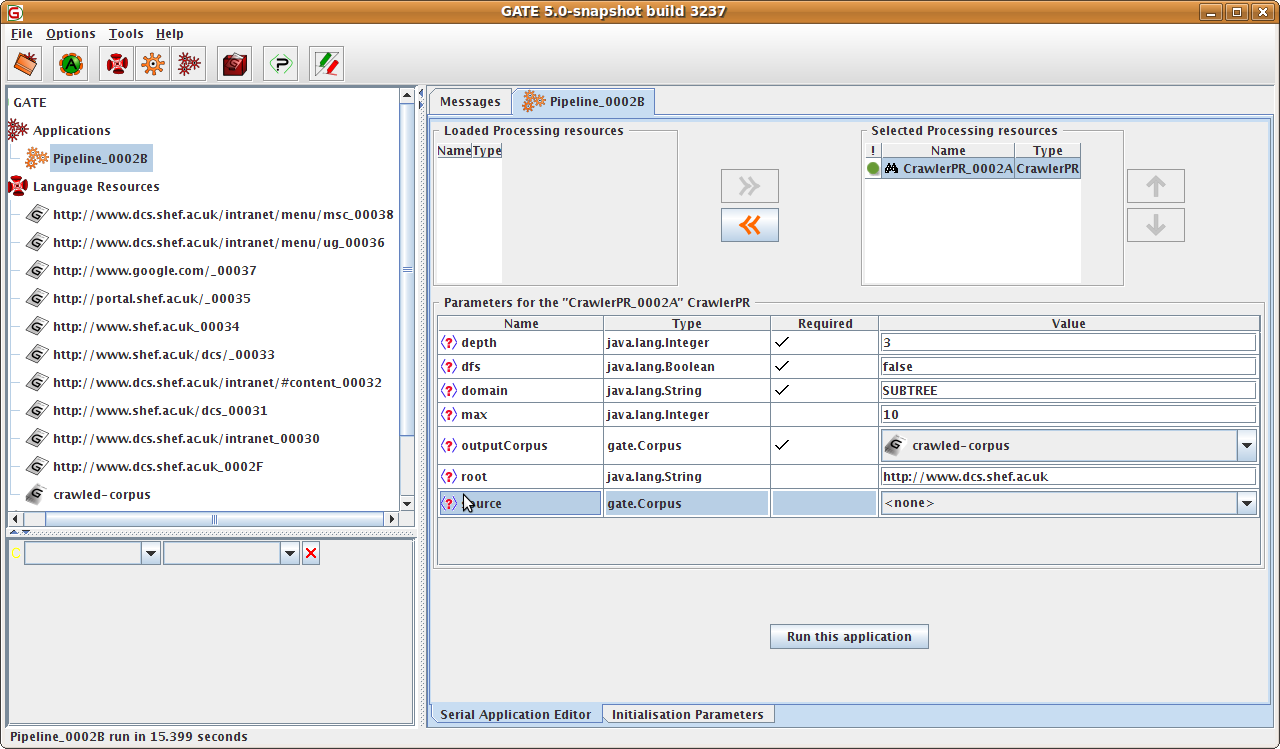

9.20 Crawler

9.20.1 Using the Crawler PR

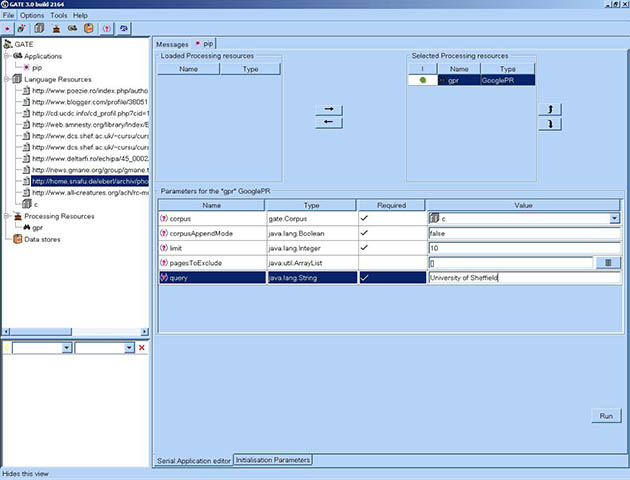

9.21 Google Plugin

9.21.1 Using the GooglePR

9.22 Yahoo Plugin

9.22.1 Using the YahooPR

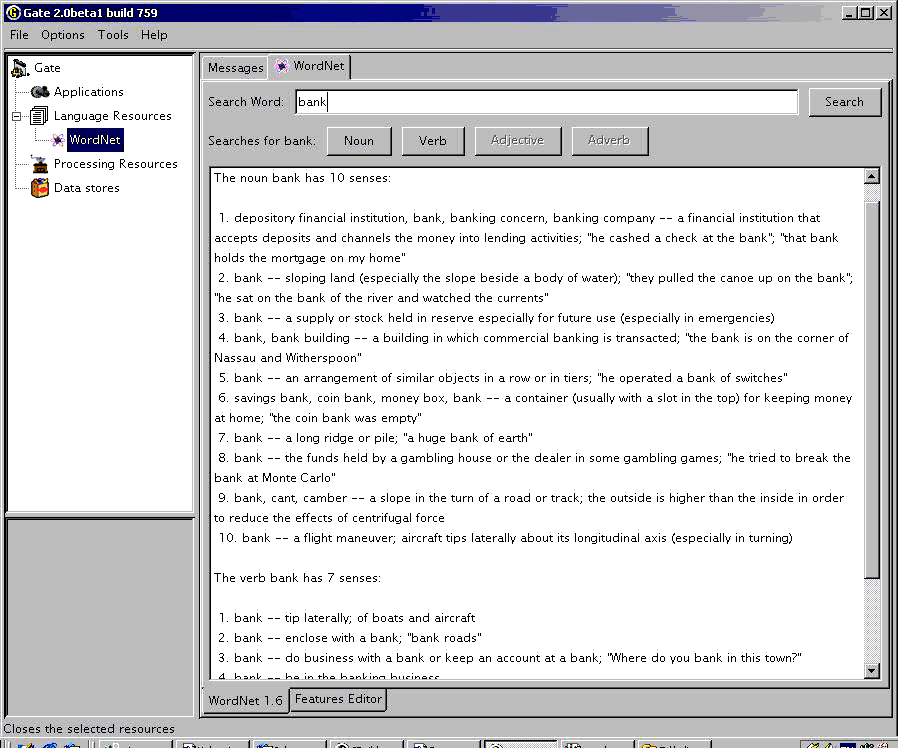

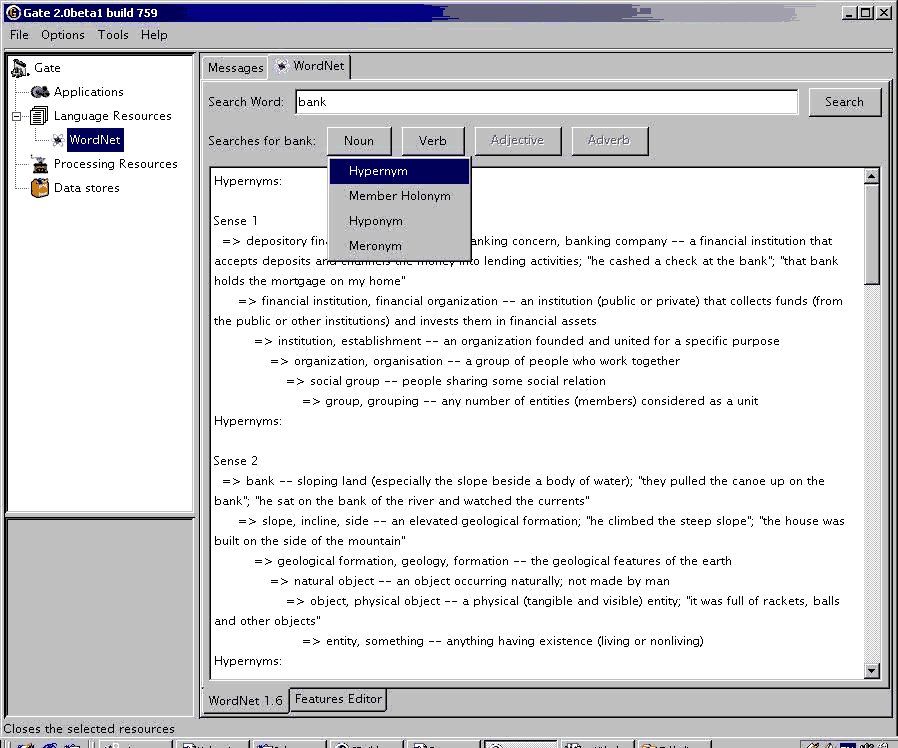

9.23 WordNet in GATE

9.23.1 The WordNet API

9.24 Machine Learning in GATE

9.24.1 ML Generalities

9.24.2 The Machine Learning PR in GATE

9.24.3 The WEKA Wrapper

9.24.4 Training an ML model with the ML PR and WEKA wrapper

9.24.5 Applying a learnt model

9.24.6 The MAXENT Wrapper

9.24.7 The SVM Light Wrapper

9.25 MinorThird

9.26 MIAKT NLG Lexicon

9.26.1 Complexity and Generality

9.27 Kea - Automatic Keyphrase Detection

9.27.1 Using the “KEA Keyphrase Extractor” PR

9.27.2 Using Kea corpora

9.28 Ontotext JapeC Compiler

9.29 ANNIC

9.29.1 Instantiating SSD

9.29.2 Search GUI

9.29.3 Using SSD from your code

9.30 Annotation Merging

9.30.1 Two implemented methods

9.30.2 Annotation Merging Plugin

9.31 OntoRoot Gazetteer

9.31.1 How does it work?

9.31.2 Initialisation of OntoRoot Gazetteer

9.32 Chinese Word Segmentation

9.33 Copying Annotations Between Documents

10 Working with Ontologies

10.1 Data Model for Ontologies

10.1.1 Hierarchies of classes and restrictions

10.1.2 Instances

10.1.3 Hierarchies of properties

10.2 Ontology Event Model (new in Gate 4)

10.2.1 What happens when a resource is deleted?

10.3 OWLIM Ontology LR

10.4 GATE’s Ontology Editor

10.5 Instantiating OWLIM Ontology using GATE API

10.6 Ontology-Aware JAPE Transducer

10.7 Annotating text with Ontological Information

10.8 Populating Ontologies

10.9 Ontology Annotation Tool

10.9.1 Viewing Annotated Texts

10.9.2 Editing Existing Annotations

10.9.3 Adding New Annotations

10.9.4 Options

11 Machine Learning API

11.1 ML Generalities

11.1.1 Some definitions

11.1.2 GATE-specific interpretation of the above definitions

11.2 The Batch Learning PR in GATE

11.2.1 The settings not specified in the configuration file

11.2.2 All the settings in the XML configuration file

11.3 Examples of configuration file for the three learning types

11.4 How to use the ML API

11.5 The outputs of the ML API

11.5.1 Training results

11.5.2 Application results

11.5.3 Evaluation results

11.5.4 Feature files

12 Tools for Alignment Tasks

12.1 Introduction

12.2 Tools for Alignment Tasks

12.2.1 Compound Document

12.2.2 Compound Document Editor

12.2.3 Composite Document

12.2.4 DeleteMembersPR

12.2.5 SwitchMembersPR

12.2.6 Saving as XML

12.2.7 Alignment Editor

13 Performance Evaluation of Language Analysers

13.1 The AnnotationDiff Tool

13.2 The six annotation relations explained

13.3 Benchmarking tool

13.4 Metrics for Evaluation in Information Extraction

13.5 Metrics for Evaluation of Inter-Annotator Agreement

13.6 A Plugin Computing Inter-Annotator Agreement (IAA)

13.6.1 IAA for Classification Task

13.6.2 IAA For Named Entity Annotation

13.6.3 The BDM Based IAA Scores

13.7 A Plugin Computing the BDM Scores for an Ontology

14 Users, Groups, and LR Access Rights

14.1 Java serialisation and LR access rights

14.2 Oracle Datastore and LR access rights

14.2.1 Users, Groups, Sessions and Access Modes

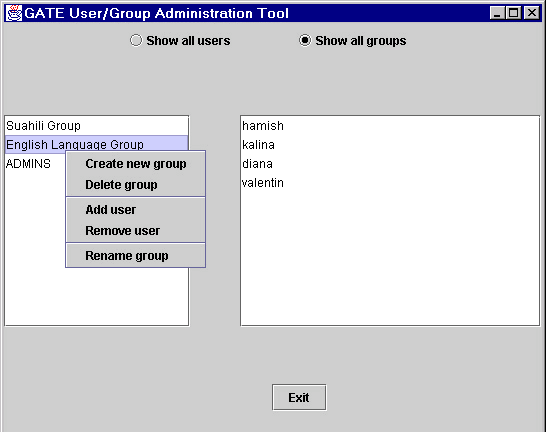

14.2.2 User/Group Administration

14.2.3 The API

15 Developing GATE

15.1 Creating new plugins

15.1.1 Where to keep plugins in the GATE hierarchy

15.1.2 Writing a new PR

15.1.3 Writing a new VR

15.1.4 Adding plugins to the nightly build

15.2 Updating this User Guide

15.2.1 Building the User Guide

15.2.2 Making changes to the User Guide

16 Combining GATE and UIMA

16.1 Embedding a UIMA TAE in GATE

16.1.1 Mapping File Format

16.1.2 The UIMA component descriptor

16.1.3 Using the AnalysisEnginePR

16.1.4 Current limitations

16.2 Embedding a GATE CorpusController in UIMA

16.2.1 Mapping file format

16.2.2 The GATE application definition

16.2.3 Configuring the GATEApplicationAnnotator

Appendices

A Design Notes

A.1 Patterns

A.1.1 Components

A.1.2 Model, view, controller

A.1.3 Interfaces

A.2 Exception Handling

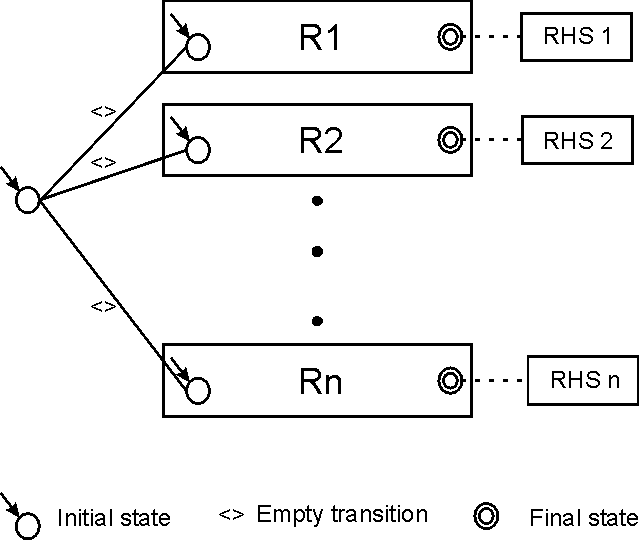

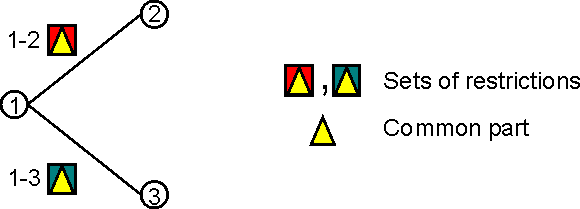

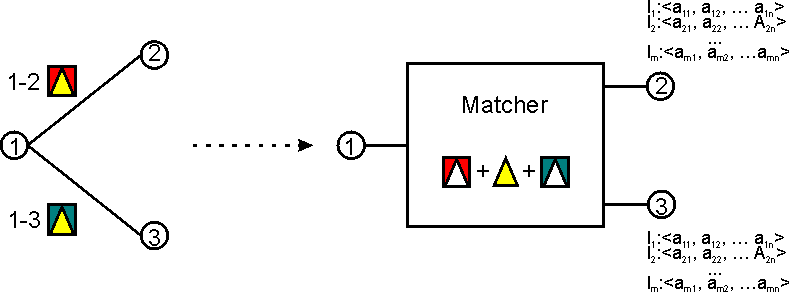

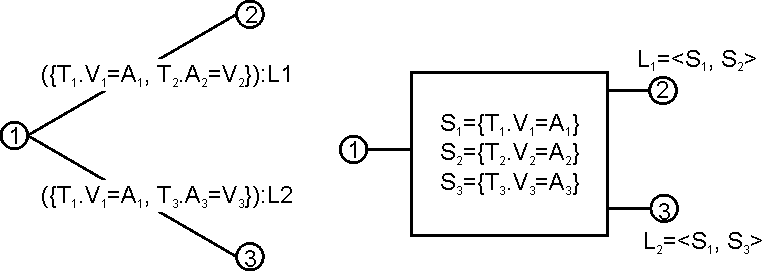

B JAPE: Implementation

B.1 Formal Description of the JAPE Grammar

B.2 Relation to CPSL

B.3 Algorithms for JAPE Rule Application

B.3.1 The first algorithm

B.3.2 Algorithm 2

B.4 Label Binding Scheme

B.5 Classes

B.6 Implementation

B.6.1 A Walk-Through

B.6.2 Example RHS code

B.7 Compilation

B.8 Using a Different Java Compiler

C Ant Tasks for GATE

C.1 Declaring the Tasks

C.2 The packagegapp task - bundling an application with its dependencies

C.2.1 Introduction

C.2.2 Basic Usage

C.2.3 Handling non-plugin resources

C.2.4 Streamlining your plugins

C.2.5 Bundling extra resources

C.3 The expandcreoles task - merging annotation-driven config into creole.xml

D Named-Entity State Machine Patterns

D.1 Main.jape

D.2 first.jape

D.3 firstname.jape

D.4 name.jape

D.4.1 Person

D.4.2 Location

D.4.3 Organization

D.4.4 Ambiguities

D.4.5 Contextual information

D.5 name_post.jape

D.6 date_pre.jape

D.7 date.jape

D.8 reldate.jape

D.9 number.jape

D.10 address.jape

D.11 url.jape

D.12 identifier.jape

D.13 jobtitle.jape

D.14 final.jape

D.15 unknown.jape

D.16 name_context.jape

D.17 org_context.jape

D.18 loc_context.jape

D.19 clean.jape

E Part-of-Speech Tags used in the Hepple Tagger

F Sample ML Configuration File

G IAA Measures for Classification Tasks

H Keyboard shortcuts for GATE

References

Chapter 1

Introduction

Software documentation is like sex: when it is good, it is very, very good; and when it is bad, it is better than nothing. (Anonymous.)

There are two ways of constructing a software design: one way is to make it so simple that there are obviously no deficiencies; the other way is to make it so complicated that there are no obvious deficiencies. (C.A.R. Hoare)

A computer language is not just a way of getting a computer to perform operations but rather that it is a novel formal medium for expressing ideas about methodology. Thus, programs must be written for people to read, and only incidentally for machines to execute. (The Structure and Interpretation of Computer Programs, H. Abelson, G. Sussman and J. Sussman, 1985.)

If you try to make something beautiful, it is often ugly. If you try to make something useful, it is often beautiful. (Oscar Wilde)1

GATE is an infrastructure for developing and deploying software components that process human language. GATE helps scientists and developers in three ways:

- by specifying an architecture, or organisational structure, for language processing software;

- by providing a framework, or class library, that implements the architecture and can be used to embed language processing capabilities in diverse applications;

- by providing a development environment built on top of the framework made up of convenient graphical tools for developing components.

The architecture exploits component-based software development, object orientation and mobile code. The framework and development environment are written in Java and available as open-source free software under the GNU library (or lesser) licence2. GATE uses Unicode throughout [Unicode Consortium 96, Tablan et al. 02], and has been tested on a variety of Slavic, Germanic, Romance, and Indic languages [Maynard et al. 01, Gambäck & Olsson 00, McEnery et al. 00].

From a scientific point of view, GATE's contribution is to the quantitative measurement of accuracy and to the repeatability of results for verification purposes.

GATE has been in development at the University of Sheffield since 1995 and has been used in a wide variety of research and development projects [Maynard et al. 00]. Version 1 of GATE was released in 1996, was licensed by several hundred organisations, and was used in a wide range of language analysis contexts including Information Extraction ([Cunningham 99b, Appelt 99, Gaizauskas & Wilks 98, Cowie & Lehnert 96]) in English, Greek, Spanish, Swedish, German, Italian, French, Bulgarian, Russian, and a number of other languages. The current version of the system is available from http://gate.ac.uk/download/.

This book describes how to use GATE to develop language processing components, test their performance and deploy them as parts of other applications. In the rest of this chapter:

- section 1.1 describes the best way to use this book;

- section 1.2 briefly notes that the context of GATE is applied language processing, or Language Engineering;

- section 1.3 gives an overview of developing using GATE;

- section 1.4 describes the structure of the rest of the book;

- section 1.5 lists other publications about GATE.

Note: if you don’t see the component you need in this document, or if we mention a component that you can’t see in the software, contact gate-users@lists.sourceforge.net3 – various components are developed by our collaborators, who we will be happy to put you in contact with. (Often the process of getting a new component is as simple as typing the URL into GATE; the system will do the rest.)

1.1 How to Use This Text

It is a good idea to read all of this introduction (you can skip sections 1.2 and 1.5 if pressed); then you can either continue wading through the whole thing or just use chapter 3 as a reference and dip into other chapters for more detail as necessary. Chapter 3 gives instructions for completing common tasks with GATE, organised in a FAQ style: details, and the reasoning behind the various aspects of the system, are omitted in this chapter, so where more information is needed refer to later chapters.

The structure of the book as a whole is detailed in section 1.4 below.

1.2 Context

GATE can be thought of as a Software Architecture for Language Engineering [Cunningham 00].

‘Software Architecture’ is used rather loosely here to mean computer infrastructure for software development, including development environments and frameworks, as well as the more usual use of the term to denote a macro-level organisational structure for software systems [Shaw & Garlan 96].

Language Engineering (LE) may be defined as:

…the discipline or act of engineering software systems that perform tasks involving processing human language. Both the construction process and its outputs are measurable and predictable. The literature of the field relates to both application of relevant scientific results and a body of practice. [Cunningham 99a]

The relevant scientific results in this case are the outputs of Computational Linguistics, Natural Language Processing and Artificial Intelligence in general. Unlike these other disciplines, LE, as an engineering discipline, entails predictability, both of the process of constructing LE-based software and of the performance of that software after its completion and deployment in applications.

Some working definitions:

- Computational Linguistics (CL): science of language that uses computation as an investigative tool.

- Natural Language Processing (NLP): science of computation whose subject matter is data structures and algorithms for computer processing of human language.

- Language Engineering (LE): building NLP systems whose cost and outputs are measurable and predictable.

- Software Architecture: macro-level organisational principles for families of systems. In this context the term is also used to mean infrastructure.

- Software Architecture for Language Engineering (SALE): software infrastructure, architecture and development tools for applied CL, NLP and LE.

(Of course the practice of these fields is broader and more complex than these definitions.)

In the scientific endeavours of NLP and CL, GATE’s role is to support experimentation. In this context GATE’s significant features include support for automated measurement (see chapter 13), providing a ‘level playing field’ where results can easily be repeated across different sites and environments, and reducing research overheads in various ways.

1.3 Overview

1.3.1 Developing and Deploying Language Processing Facilities

GATE as an architecture suggests that the elements of software systems that process natural language can usefully be broken down into various types of component, known as resources4. Components are reusable software chunks with well-defined interfaces, and are a popular architectural form, used in Sun’s Java Beans and Microsoft’s .Net, for example. GATE components are specialised types of Java Bean, and come in three flavours:

- LanguageResources (LRs) represent entities such as lexicons, corpora or ontologies;

- ProcessingResources (PRs) represent entities that are primarily algorithmic, such as parsers, generators or ngram modellers;

- VisualResources (VRs) represent visualisation and editing components that participate in GUIs.

These definitions can be blurred in practice as necessary.

Collectively, the set of resources integrated with GATE is known as CREOLE: a Collection of REusable Objects for Language Engineering. All the resources are packaged as Java Archive (or ‘JAR’) files, plus some XML configuration data. The JAR and XML files are made available to GATE by putting them on a web server, or simply placing them in the local file space. Section 1.3.2 introduces GATE’s built-in resource set.

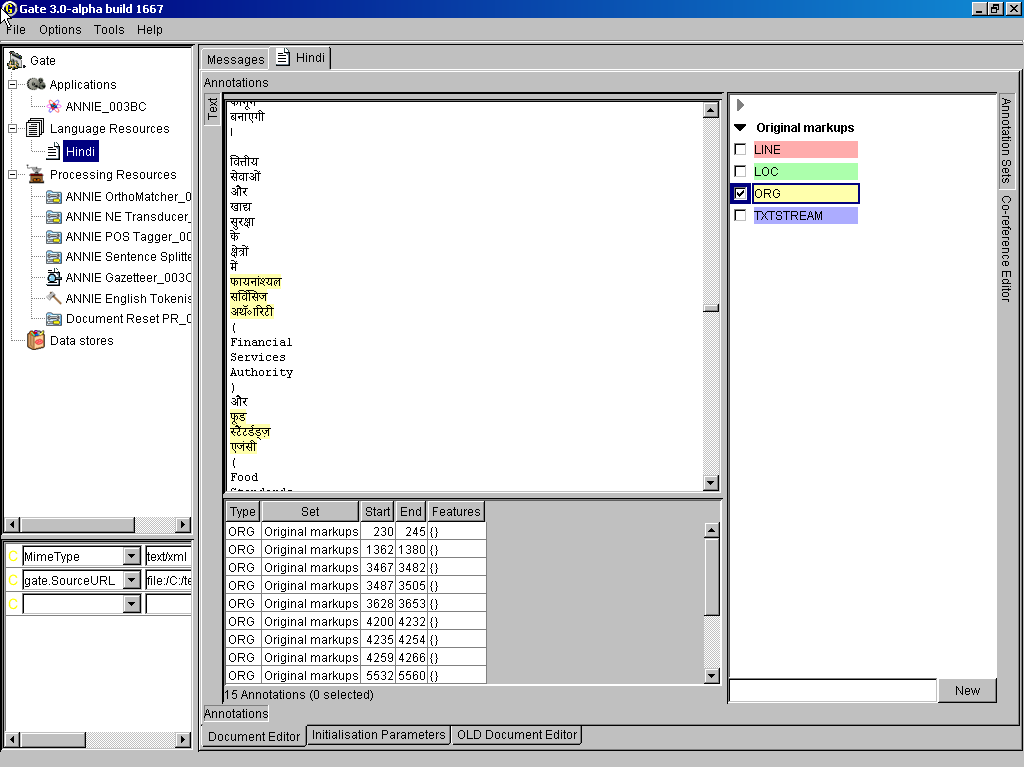

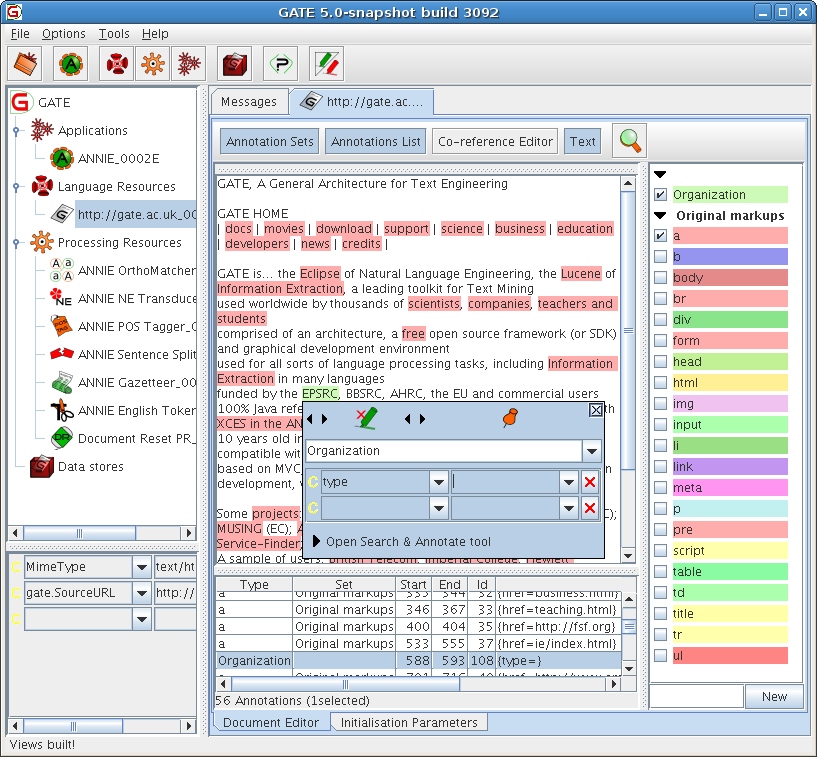

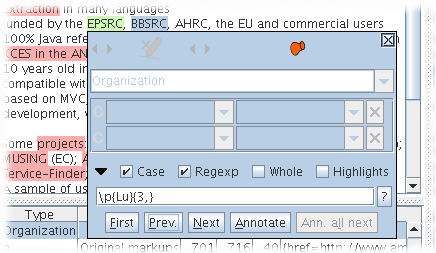

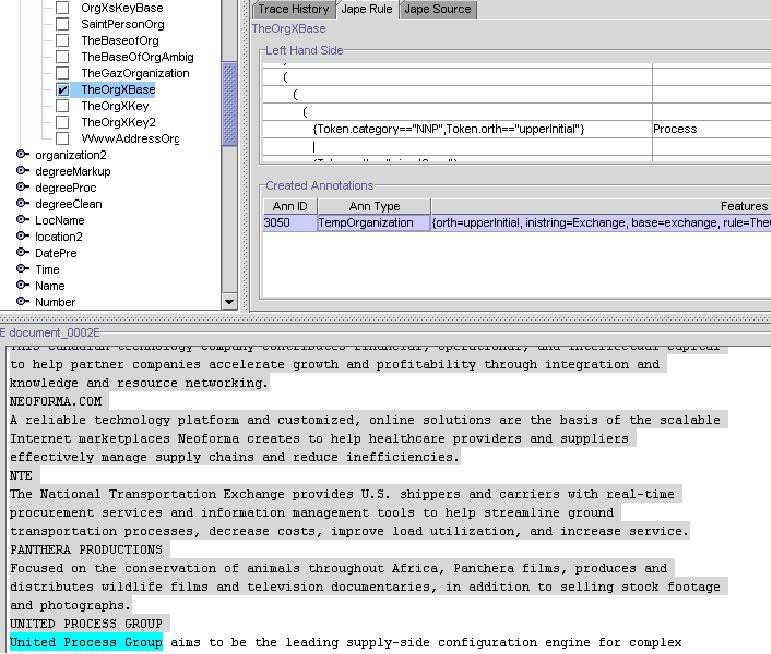

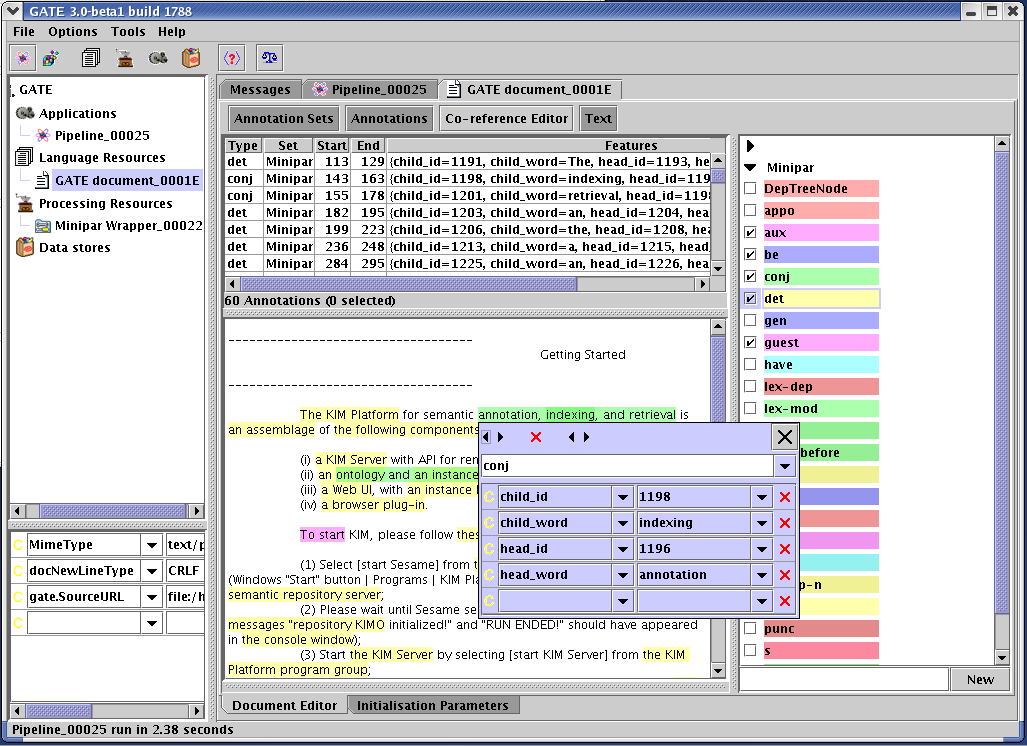

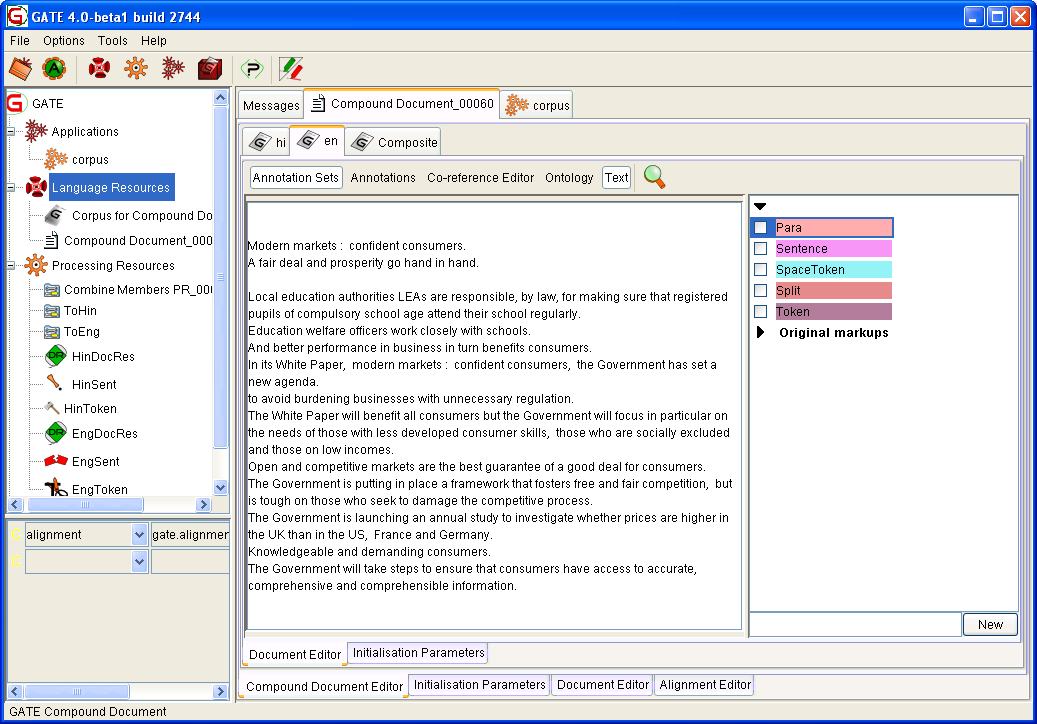

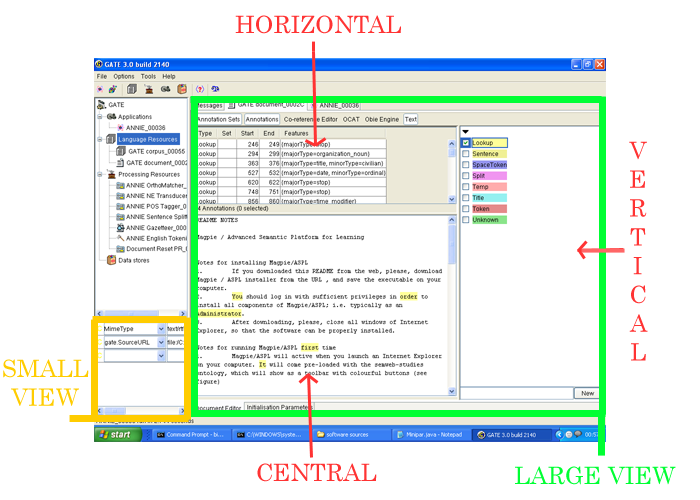

When using GATE to develop language processing functionality for an application, the developer uses the development environment and the framework to construct resources of the three types. This may involve programming, or the development of Language Resources such as grammars that are used by existing Processing Resources, or a mixture of both. The development environment is used for visualisation of the data structures produced and consumed during processing, and for debugging, performance measurement and so on. For example, figure 1.1 is a screenshot of one of the visualisation tools (displaying named-entity extraction results for a Hindi sentence).

The GATE development environment is analogous to systems like Mathematica for Mathematicians, or JBuilder for Java programmers: it provides a convenient graphical environment for research and development of language processing software.

When an appropriate set of resources has been developed, the resources can be embedded in the target client application using the GATE framework. The framework is supplied as two JAR files.5 To embed GATE-based language processing facilities in an application, these JAR files are all that is needed, along with JAR files and XML configuration files for the various resources that make up the new facilities.

1.3.2 Built-in Components

GATE includes resources for common LE data structures and algorithms, including documents, corpora and various annotation types, a set of language analysis components for Information Extraction and a range of data visualisation and editing components.

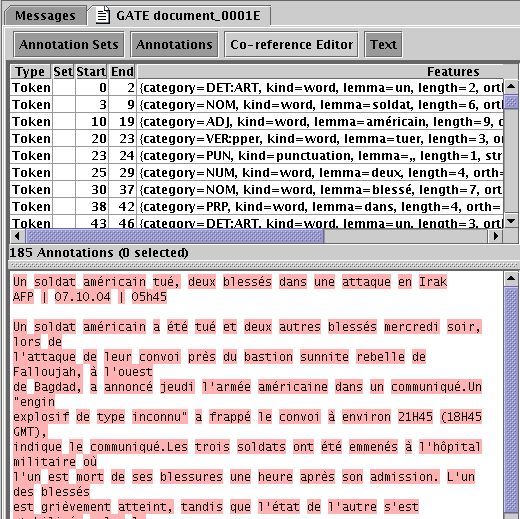

GATE supports documents in a variety of formats including XML, RTF, email, HTML, SGML and plain text. In all cases the format is analysed and converted into a single unified model of annotation. The annotation format is a modified form of the TIPSTER format [Grishman 97] which has been made largely compatible with the Atlas format [Bird & Liberman 99], and uses the now standard mechanism of ‘stand-off markup’. GATE documents, corpora and annotations are stored in databases of various sorts, visualised via the development environment, and accessed at code level via the framework. See chapter 6 for more details of corpora etc.
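The idea of stand-off markup can be illustrated with a minimal Java sketch. This is not GATE's annotation API: the class `StandOffSketch`, the `Span` type and the `tokenise` and `covered` helpers are hypothetical names invented for illustration. The point it shows is that annotations are stored as typed character offsets beside the text, so the text itself is never modified and several layers of annotation can coexist.

```java
import java.util.ArrayList;
import java.util.List;

public class StandOffSketch {

    /** A stand-off annotation: a typed span of character offsets into the text. */
    static final class Span {
        final String type;
        final int start; // inclusive character offset
        final int end;   // exclusive character offset

        Span(String type, int start, int end) {
            this.type = type;
            this.start = start;
            this.end = end;
        }
    }

    /** Returns the text covered by a span; the text itself is never changed. */
    static String covered(String text, Span span) {
        return text.substring(span.start, span.end);
    }

    /** Creates one Token span per whitespace-separated run of characters. */
    static List<Span> tokenise(String text) {
        List<Span> spans = new ArrayList<>();
        int i = 0;
        while (i < text.length()) {
            if (Character.isWhitespace(text.charAt(i))) {
                i++;
                continue;
            }
            int start = i;
            while (i < text.length() && !Character.isWhitespace(text.charAt(i))) {
                i++;
            }
            spans.add(new Span("Token", start, i));
        }
        return spans;
    }

    public static void main(String[] args) {
        String text = "Fatima works at Cyberdyne";
        List<Span> spans = tokenise(text);
        // A second annotation layer over the same, untouched text.
        spans.add(new Span("Person", 0, 6));
        for (Span s : spans) {
            System.out.println(s.type + " [" + s.start + "," + s.end + ") " + covered(text, s));
        }
    }
}
```

Because the spans only point into the text, the same source document can carry annotations from many components without any of them rewriting the content.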

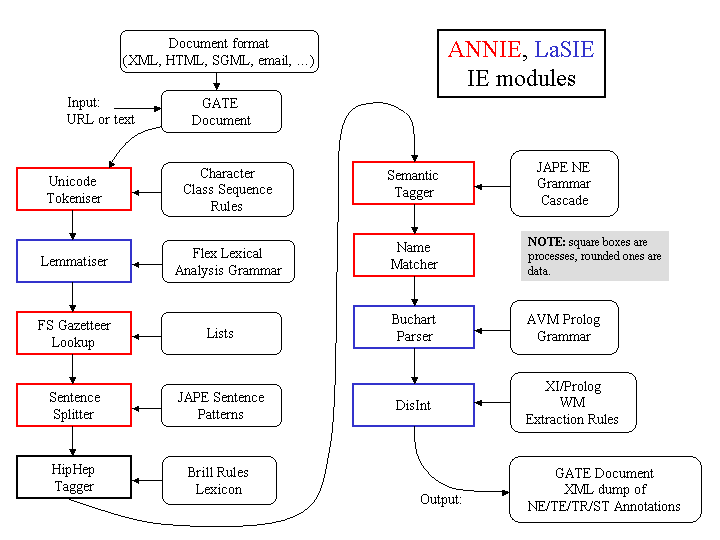

A family of Processing Resources for language analysis is included in the shape of ANNIE, A Nearly-New Information Extraction system. These components use finite state techniques to implement various tasks from tokenisation to semantic tagging or verb phrase chunking. All ANNIE components communicate exclusively via GATE’s document and annotation resources. See chapter 8 for more details. See chapter 5 for visual resources. See chapter 9 for other miscellaneous CREOLE resources.
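As a rough illustration of components that communicate only through a shared document and its annotations, the following Java sketch chains a toy tokeniser and a toy gazetteer. It does not use the GATE framework: `PipelineSketch`, `Doc`, `Step`, `TOKENISER` and `gazetteer` are hypothetical names, and the real ANNIE components are considerably more sophisticated.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class PipelineSketch {

    static final class Annotation {
        final String type;
        final int start;
        final int end;

        Annotation(String type, int start, int end) {
            this.type = type;
            this.start = start;
            this.end = end;
        }
    }

    /** The shared document: fixed text plus a growing list of annotations. */
    static final class Doc {
        final String text;
        final List<Annotation> annotations = new ArrayList<>();

        Doc(String text) {
            this.text = text;
        }

        /** Returns a fresh list of the annotations of one type. */
        List<Annotation> ofType(String type) {
            List<Annotation> result = new ArrayList<>();
            for (Annotation a : annotations) {
                if (a.type.equals(type)) {
                    result.add(a);
                }
            }
            return result;
        }
    }

    /** Hypothetical stand-in for a processing component. */
    interface Step {
        void run(Doc doc);
    }

    /** Adds a Token annotation for every run of word characters. */
    static final Step TOKENISER = doc -> {
        Matcher m = Pattern.compile("\\w+").matcher(doc.text);
        while (m.find()) {
            doc.annotations.add(new Annotation("Token", m.start(), m.end()));
        }
    };

    /** Reads Token annotations and adds a Lookup for each token found in the list. */
    static Step gazetteer(Set<String> names) {
        return doc -> {
            for (Annotation t : doc.ofType("Token")) {
                if (names.contains(doc.text.substring(t.start, t.end))) {
                    doc.annotations.add(new Annotation("Lookup", t.start, t.end));
                }
            }
        };
    }

    /** Runs the steps in order over a new document. */
    static Doc process(String text, List<Step> steps) {
        Doc doc = new Doc(text);
        for (Step s : steps) {
            s.run(doc);
        }
        return doc;
    }

    public static void main(String[] args) {
        Set<String> names = new HashSet<>(Arrays.asList("Fatima", "Miles"));
        Doc doc = process("Fatima emailed Miles yesterday",
                Arrays.asList(TOKENISER, gazetteer(names)));
        for (Annotation a : doc.annotations) {
            System.out.println(a.type + " " + doc.text.substring(a.start, a.end));
        }
    }
}
```

The gazetteer step never calls the tokeniser directly; it only reads the Token annotations left on the document, which is what lets steps be reordered or replaced independently.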

1.3.3 Additional Facilities

Three other facilities in GATE deserve special mention:

- JAPE, a Java Annotation Patterns Engine, provides regular-expression based pattern/action rules over annotations – see chapter 7.

- The ‘annotation diff’ tool in the development environment implements performance metrics such as precision and recall for comparing annotations. Typically a language analysis component developer will mark up some documents by hand and then use these along with the diff tool to automatically measure the performance of the components. See chapter 13.

- GUK, the GATE Unicode Kit, fills in some of the gaps in the JDK’s6 support for Unicode, e.g. by adding input methods for various languages from Urdu to Chinese. See section 3.37 for more details.

And by version 4 it will make a mean cup of tea.
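To make the precision and recall metrics mentioned for the annotation diff tool concrete, here is a minimal Java sketch that scores a system's annotations against hand-made ones. It is an illustration under simplifying assumptions, not the tool's implementation: annotations are encoded as plain strings such as "Person:0-6", only exact matches count as correct (no credit for partial overlap), and an empty set scores 0. The class and method names are hypothetical.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;

public class StrictScoreSketch {

    /** Number of response annotations that exactly match a key annotation. */
    static int correct(Set<String> key, Set<String> response) {
        Set<String> matched = new HashSet<>(response);
        matched.retainAll(key);
        return matched.size();
    }

    /** Fraction of the system's annotations that are right (0 if it produced none). */
    static double precision(Set<String> key, Set<String> response) {
        return response.isEmpty() ? 0.0 : (double) correct(key, response) / response.size();
    }

    /** Fraction of the hand-made annotations that the system found (0 if there are none). */
    static double recall(Set<String> key, Set<String> response) {
        return key.isEmpty() ? 0.0 : (double) correct(key, response) / key.size();
    }

    /** Harmonic mean of precision and recall. */
    static double f1(Set<String> key, Set<String> response) {
        double p = precision(key, response);
        double r = recall(key, response);
        return (p + r == 0.0) ? 0.0 : 2 * p * r / (p + r);
    }

    public static void main(String[] args) {
        // Hand-made ("key") annotations and system ("response") annotations.
        Set<String> key = new HashSet<>(Arrays.asList(
                "Person:0-6", "Person:20-33", "Person:50-58", "Person:70-75"));
        Set<String> response = new HashSet<>(Arrays.asList(
                "Person:0-6", "Person:20-33", "Person:50-55"));
        System.out.println("precision = " + precision(key, response));
        System.out.println("recall    = " + recall(key, response));
        System.out.println("F1        = " + f1(key, response));
    }
}
```

In this example two of the three system annotations match the key exactly, so precision is 2/3, and two of the four key annotations were found, so recall is 1/2.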

1.3.4 An Example

This section gives a very brief example of a typical use of GATE to develop and deploy language processing capabilities in an application, and to generate quantitative results for scientific publication.

Let’s imagine that a developer called Fatima is building an email client7 for Cyberdyne Systems’ large corporate Intranet. In this application she would like to have a language processing system that automatically spots the names of people in the corporation and transforms them into mailto hyperlinks.
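The final step of such an application, turning recognised names into mailto hyperlinks, might look roughly like the Java sketch below. It assumes that name recognition has already produced character offsets and that each name has been resolved to an address. `MailtoSketch`, `PersonSpan`, `link` and `escape` are hypothetical names, the spans are assumed not to overlap, and the email addresses are assumed to be safe to place in an HTML attribute.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

public class MailtoSketch {

    /** A recognised person name: character offsets plus the resolved address. */
    static final class PersonSpan {
        final int start;
        final int end;
        final String email;

        PersonSpan(int start, int end, String email) {
            this.start = start;
            this.end = end;
            this.email = email;
        }
    }

    /** Minimal HTML escaping for text content. */
    static String escape(String s) {
        return s.replace("&", "&amp;").replace("<", "&lt;").replace(">", "&gt;");
    }

    /** Rebuilds the text left to right, wrapping each person span in a mailto link. */
    static String link(String text, List<PersonSpan> people) {
        List<PersonSpan> sorted = new ArrayList<>(people);
        sorted.sort(Comparator.comparingInt(p -> p.start));
        StringBuilder out = new StringBuilder();
        int pos = 0;
        for (PersonSpan p : sorted) {
            out.append(escape(text.substring(pos, p.start)));
            out.append("<a href=\"mailto:").append(p.email).append("\">")
               .append(escape(text.substring(p.start, p.end)))
               .append("</a>");
            pos = p.end;
        }
        out.append(escape(text.substring(pos)));
        return out.toString();
    }

    public static void main(String[] args) {
        String text = "Ask Fatima & Bob";
        List<PersonSpan> people = Arrays.asList(
                new PersonSpan(13, 16, "bob@example.com"),
                new PersonSpan(4, 10, "fatima@example.com"));
        System.out.println(link(text, people));
    }
}
```

Working from offsets rather than searching for the names again keeps the linking step independent of how the names were recognised.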

A little investigation shows that GATE’s existing components can be tailored to this purpose. Fatima starts up the development environment, and creates a new document containing some example emails. She then loads some processing resources that will do named-entity recognition (a tokeniser, gazetteer and semantic tagger), and creates an application to run these components on the document in sequence. Having processed the emails, she can see the results in one of several viewers for annotations.

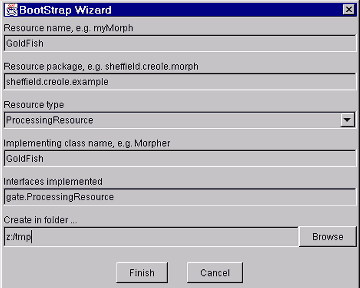

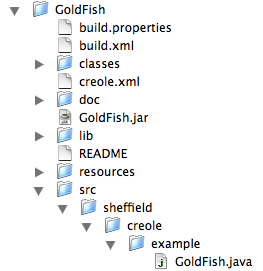

The GATE components are a decent start, but they need to be altered to deal specially with people from Cyberdyne’s personnel database. Therefore Fatima creates new “cyber-” versions of the gazetteer and semantic tagger resources, using the “bootstrap” tool. This tool creates a directory structure on disk that has some Java stub code, a Makefile and an XML configuration file. After several hours struggling with badly written documentation, Fatima manages to compile the stubs and create a JAR file containing the new resources. She tells GATE the URL of these files8, and the system then allows her to load them in the same way that she loaded the built-in resources earlier on.

Fatima then creates a second copy of the email document, and uses the annotation editing facilities to mark up the results that she would like to see her system producing. She saves this and the version that she ran GATE on into her Oracle datastore (set up for her by the Herculean efforts of the Cyberdyne technical support team, who like GATE because it enables them to claim lots of overtime). From now on she can follow this routine:

1. Run her application on the email test corpus.

2. Check the performance of the system by running the ‘annotation diff’ tool to compare her manual results with the system’s results. This gives her both percentage accuracy figures and a graphical display of the differences between the machine and human outputs.

3. Make edits to the code, pattern grammars or gazetteer lists in her resources, and recompile where necessary.

4. Tell GATE to re-initialise the resources.

5. Go to 1.

To make the alterations that she requires, Fatima re-implements the ANNIE gazetteer so that it regenerates itself from the local personnel data. She then alters the pattern grammar in the semantic tagger to prioritise recognition of names from that source. This latter job involves learning the JAPE language (see chapter 7), but as this is based on regular expressions it isn’t too difficult.

Eventually the system is running nicely, and her accuracy is 93% (there are still some problem cases, e.g. when people use nicknames, but the performance is good enough for production use). Now Fatima stops using the GATE development environment and works instead on embedding the new components in her email application. This application is written in Java, so embedding is very easy9: the two GATE JAR files are added to the project CLASSPATH, the new components are placed on a web server, and with a little code to do initialisation, loading of components and so on, the job is finished in half a day – the code to talk to GATE takes up only around 150 lines of the eventual application, most of which is just copied from the example in the sheffield.examples.StandAloneAnnie class.

Because Fatima is worried about Cyberdyne’s unethical policy of developing Skynet to help the large corporates of the West strengthen their strangle-hold over the World, she wants to get a job as an academic instead (so that her conscience will only have to cope with the torture of students, as opposed to humanity). She takes the accuracy measures that she has attained for her system and writes a paper for the Journal of Nasturtium Logarithm Encitement describing the approach used and the results obtained. Because she used GATE for development, she can cite the repeatability of her experiments and offer access to example binary versions of her software by putting them on an external web server.

And everybody lived happily ever after.

1.4 Structure of the Book

The material presented in this book ranges from the conceptual (e.g. ‘what is software architecture?’) to practical instructions for programmers (e.g. how to deal with GATE exceptions) and linguists (e.g. how to write a pattern grammar). This diversity is something of an organisational challenge. Our (no doubt imperfect) solution is to collect specific instructions for ‘how to do X’ in a separate chapter (3). Other chapters give a more discursive presentation. In order to understand the whole system you must, unfortunately, read much of the book; in order to get help with a particular task, however, look first in chapter 3 and refer to other material as necessary.

The other chapters:

Chapter 4 describes the GATE architecture’s component-based model of language processing, the lifecycle of GATE components, and how components can be grouped into applications and stored in databases and files.

Chapter 5 describes the set of Visual Resources that are bundled with GATE.

Chapter 6 describes GATE’s model of document formats, annotated documents, annotation types, and corpora (sets of documents). It also covers GATE’s facilities for reading and writing in the XML data interchange language.

Chapter 7 describes JAPE, a pattern/action rule language based on regular expressions over annotations on documents. JAPE grammars compile into cascaded finite state transducers.

Chapter 8 describes ANNIE, a pipelined Information Extraction system which is supplied with GATE.

Chapter 9 describes CREOLE resources bundled with the system that don’t fit into the previous categories.

Chapter 10 describes processing resources and language resources for working with ontologies.

Chapter 11 describes a machine learning layer specifically targeted at NLP tasks including text classification, chunk learning (e.g. for named entity recognition) and relation learning.

Chapter 13 describes how to measure the performance of language analysis components.

Chapter 14 describes the data store security model.

Appendix A discusses the design of the system.

Appendix B describes the implementation details and formal definitions of the JAPE annotation patterns language.

Appendix D describes in some detail the JAPE pattern grammars that are used in ANNIE for named-entity recognition.

1.5 Further Reading

Lots of documentation lives on the GATE web server, including:

- the concise application developer’s guide (with emphasis on using the GATE API);

- movies of the system in operation;

- the main system documentation tree;

- JavaDoc API documentation;

- HTML of the source code;

- parts of the requirements analysis that version 3 is based on.

For more details about Sheffield University’s work in human language processing see the NLP group pages or A Definition and Short History of Language Engineering ([Cunningham 99a]). For more details about Information Extraction see IE, a User Guide or the GATE IE pages.

A list of publications on GATE and projects that use it (some of which are available on-line):

- [Cunningham 05]

- is an overview of the field of Information Extraction for the 2nd Edition of the Encyclopaedia of Language and Linguistics.

- [Cunningham & Bontcheva 05]

- is an overview of the field of Software Architecture for Language Engineering for the 2nd Edition of the Encyclopaedia of Language and Linguistics.

- [Li et al. 04]

- (Machine Learning Workshop 2004) describes an SVM-based learning algorithm for IE using GATE.

- [Wood et al. 04]

- (NLDB 2004) looks at ontology-based IE from parallel texts.

- [Cunningham & Scott 04b]

- (JNLE) is a collection of papers covering many important areas of Software Architecture for Language Engineering.

- [Cunningham & Scott 04a]

- (JNLE) is the introduction to the above collection.

- [Bontcheva 04]

- (LREC 2004) describes lexical and ontological resources in GATE used for Natural Language Generation.

- [Bontcheva et al. 04]

- (JNLE) discusses developments in GATE in the early noughties.

- [Maynard et al. 04a]

- (LREC 2004) presents algorithms for the automatic induction of gazetteer lists from multi-language data.

- [Maynard et al. 04c]

- (AIMSA 2004) presents automatic creation and monitoring of semantic metadata in a dynamic knowledge portal.

- [Maynard et al. 04b]

- (ESWS 2004) discusses ontology-based IE in the hTechSight project.

- [Dimitrov et al. 04]

- (Anaphora Processing) gives a lightweight method for named entity coreference resolution.

- [Kiryakov 03]

- (Technical Report) discusses semantic web technology in the context of multimedia indexing and search.

- [Tablan et al. 03]

- (HLT-NAACL 2003) presents the OLLIE on-line learning for IE system.

- [Wood et al. 03]

- (Recent Advances in Natural Language Processing 2003) discusses using parallel texts to improve IE recall.

- [Maynard et al. 03a]

- (Recent Advances in Natural Language Processing 2003) looks at semantics and named-entity extraction.

- [Maynard et al. 03b]

- (ACL Workshop 2003) describes NE extraction without training data on a language you don’t speak (!).

- [Maynard et al. ]

- (EACL 2003) looks at the distinction between information and content extraction.

- [Manov et al. 03]

- (HLT-NAACL 2003) describes experiments with geographic knowledge for IE.

- [Saggion et al. 03a]

- (EACL 2003) discusses robust, generic and query-based summarisation.

- [Saggion et al. 03c]

- (EACL 2003) discusses event co-reference in the MUMIS project.

- [Saggion et al. 03b]

- (Data and Knowledge Engineering) discusses multimedia indexing and search from multisource multilingual data.

- [Cunningham et al. 03]

- (Corpus Linguistics 2003) describes GATE as a tool for collaborative corpus annotation.

- [Bontcheva et al. 03]

- (NLPXML-2003) looks at GATE for the semantic web.

- [Dimitrov 02a, Dimitrov et al. 02]

- (DAARC 2002, MSc thesis) discuss lightweight coreference methods.

- [Lal 02]

- (Master’s thesis) looks at text summarisation using GATE.

- [Lal & Ruger 02]

- (ACL 2002) looks at text summarisation using GATE.

- [Cunningham et al. 02]

- (ACL 2002) describes the GATE framework and graphical development environment as a tool for robust NLP applications.

- [Bontcheva et al. 02b]

- (NLIS 2002) discusses how GATE can be used to create HLT modules for use in information systems.

- [Tablan et al. 02]

- (LREC 2002) describes GATE’s enhanced Unicode support.

- [Maynard et al. 02a]

- (ACL 2002 Summarisation Workshop) describes using GATE to build a portable IE-based summarisation system in the domain of health and safety.

- [Maynard et al. 02c]

- (Nordic Language Technology) describes various Named Entity recognition projects developed at Sheffield using GATE.

- [Maynard et al. 02b]

- (AIMSA 2002) describes the adaptation of the core ANNIE modules within GATE to the ACE (Automatic Content Extraction) tasks.

- [Maynard et al. 02d]

- (JNLE) describes robustness and predictability in LE systems, and presents GATE as an example of a system which contributes to robustness and to low overhead systems development.

- [Bontcheva et al. 02c], [Dimitrov 02a] and [Dimitrov 02b]

- (TALN 2002, DAARC 2002, MSc thesis) describe the shallow named entity coreference modules in GATE: the orthomatcher, which resolves orthographic coreference between named entities, and the pronoun resolution module.

- [Bontcheva et al. 02a]

- (ACL 2002 Workshop) describes how GATE can be used as an environment for teaching NLP, with examples of and ideas for future student projects developed within GATE.

- [Pastra et al. 02]

- (LREC 2002) discusses the feasibility of grammar reuse in applications using ANNIE modules.

- [Baker et al. 02]

- (LREC 2002) report results from the EMILLE Indic languages corpus collection and processing project.

- [Saggion et al. 02b] and [Saggion et al. 02a]

- (LREC 2002, SPLPT 2002) describe how ANNIE modules have been adapted to extract information for indexing multimedia material.

- [Maynard et al. 01]

- (RANLP 2001) discusses a project using ANNIE for named-entity recognition across wide varieties of text type and genre.

- [Cunningham 00]

- (PhD thesis) defines the field of Software Architecture for Language Engineering, reviews previous work in the area, presents a requirements analysis for such systems (which was used as the basis for designing GATE versions 2 and 3), and evaluates the strengths and weaknesses of GATE version 1.

- [Cunningham 02]

- (Computers and the Humanities) describes the philosophy and motivation behind the system, describes GATE version 1 and how well it lived up to its design brief.

- [McEnery et al. 00]

- (Vivek) presents the EMILLE project in the context of which GATE’s Unicode support for Indic languages has been developed.

- [Cunningham et al. 00d] and [Cunningham 99c]

- (technical reports) document early versions of JAPE (superseded by the present document).

- [Cunningham et al. 00a], [Cunningham et al. 98a] and [Peters et al. 98]

- (OntoLex 2000, LREC 1998) present GATE’s model of Language Resources, their access and distribution.

- [Maynard et al. 00]

- (technical report) surveys users of GATE up to mid-2000.

- [Cunningham et al. 00c] and [Cunningham et al. 99]

- (COLING 2000, AISB 1999) summarise experiences with GATE version 1.

- [Cunningham et al. 00b]

- (LREC 2000) taxonomises Language Engineering components and discusses the requirements analysis for GATE version 2.

- [Bontcheva et al. 00] and [Brugman et al. 99]

- (COLING 2000, technical report) describe a prototype of GATE version 2 that integrated with the EUDICO multimedia markup tool from the Max Planck Institute.

- [Gambäck & Olsson 00]

- (LREC 2000) discusses experiences in the Svensk project, which used GATE version 1 to develop a reusable toolbox of Swedish language processing components.

- [Cunningham 99a]

- (JNLE) reviewed and synthesised definitions of Language Engineering.

- [Stevenson et al. 98] and [Cunningham et al. 98b]

- (ECAI 1998, NeMLaP 1998) report work on implementing a word sense tagger in GATE version 1.

- [Cunningham et al. 97b]

- (ANLP 1997) presents motivation for GATE and GATE-like infrastructural systems for Language Engineering.

- [Gaizauskas et al. 96b, Cunningham et al. 97a, Cunningham et al. 96e]

- (ICTAI 1996, TIPSTER 1997, NeMLaP 1996) report work on GATE version 1.

- [Cunningham et al. 96c, Cunningham et al. 96d, Cunningham et al. 95]

- (COLING 1996, AISB Workshop 1996, technical report) report early work on GATE version 1.

- [Cunningham et al. 96b]

- (TIPSTER) discusses a selection of projects in Sheffield using GATE version 1 and the TIPSTER architecture it implemented.

- [Cunningham et al. 96a]

- (manual) was the guide to developing CREOLE components for GATE version 1.

- [Gaizauskas et al. 96a]

- (manual) was the user guide for GATE version 1.

- [Humphreys et al. 96]

- (manual) describes the language processing components distributed with GATE version 1.

- [Cunningham 94, Cunningham et al. 94]

- (NeMLaP 1994, technical report) argue that software engineering issues such as reuse, and framework construction, are important for language processing R&D.

- [Dowman et al. 05b]

- (World Wide Web Conference Paper) The Web is used to assist the annotation and indexing of broadcast news.

- [Dowman et al. 05a]

- (Euro Interactive Television Conference Paper) A system which can use material from the Internet to augment television news broadcasts.

- [Dowman et al. 05c]

- (Second European Semantic Web Conference Paper) A system that semantically annotates television news broadcasts using news websites as a resource to aid in the annotation process.

- [Li et al. 05a]

- (Proceedings of Sheffield Machine Learning Workshop) describes an SVM-based IE system which uses the SVM with uneven margins as the learning component and GATE as the NLP processing module.

- [Li et al. 05b]

- (Proceedings of Ninth Conference on Computational Natural Language Learning (CoNLL-2005)) uses the uneven margins versions of two popular learning algorithms, SVM and Perceptron, for IE to deal with the imbalanced classification problems derived from IE.

- [Li et al. 05c]

- (Proceedings of Fourth SIGHAN Workshop on Chinese Language processing (Sighan-05)) used Perceptron learning, a simple, fast and effective learning algorithm, for Chinese word segmentation.

- [Aswani et al. 05]

- (Proceedings of Fifth International Conference on Recent Advances in Natural Language Processing (RANLP2005)) presents a full-featured annotation indexing and search engine, developed as part of GATE. It is powered by Apache Lucene technology and indexes the variety of documents supported by GATE.

- [Wang et al. 05]

- (Proceedings of the 2005 IEEE/WIC/ACM International Conference on Web Intelligence (WI 2005)) Extracting a Domain Ontology from Linguistic Resource Based on Relatedness Measurements.

- [Ursu et al. 05]

- (Proceedings of the 2nd European Workshop on the Integration of Knowledge, Semantic and Digital Media Technologies (EWIMT 2005)) Digital Media Preservation and Access through Semantically Enhanced Web-Annotation.

- [Polajnar et al. 05] (University of Sheffield-Research Memorandum CS-05-10) User-Friendly Ontology Authoring Using a Controlled Language.

- [Aswani et al. 06] (Proceedings of the 5th International Semantic Web Conference (ISWC2006)) In this paper the problem of disambiguating author instances in an ontology is addressed. We describe a web-based approach that uses various features such as publication titles, abstract, initials and co-authorship information.

Chapter 2

Change Log

This chapter lists major changes to GATE in roughly reverse chronological order by release. Changes in the documentation are also referenced here.

2.1 Version 5.0 (May 2009)

Note: existing users – if you delete your user configuration file for any reason you will find that GATE no longer loads the ANNIE plugin by default. You will need to manually select “load always” in the plugin manager to get the old behaviour.

2.1.1 Major new features

JAPE language improvements

Several new extensions to the JAPE language to support more flexible pattern matching. Full details are in chapter 7 but briefly:

- Negative constraints, that prevent a rule from matching if certain other annotations are present (section 7.4).

- Additional matching operators for feature values, so you can now look for {Token.length < 5}, {Lookup.minorType != "ignore"}, etc. as well as simple equality (section 7.1).

- “Meta-property” accessors, to permit access to the string covered by an annotation, the length of the annotation, etc., e.g. {Lookup@length > 4}.

- Contextual operators, allowing you to search for one annotation contained within (or containing) another, e.g. {Sentence contains {Lookup.majorType == "location"}} (see section 7.1.4).

- Additional Kleene operator for ranges, e.g. ({Token})[2,5] matches between 2 and 5 consecutive tokens.

- Additional operators can be added via runtime configuration.

Some of these extensions are similar to, but not the same as, those provided by the Montreal Transducer plugin. If you are already familiar with the Montreal Transducer, you should first look at section 7.11 which summarises the differences.

Resource configuration via Java 5 Annotations

Introduced an alternative style for supplying resource configuration information via Java 5 annotations rather than in creole.xml. The previous approach is still fully supported as well, and the two styles can be freely mixed. See section 4.9 for full details.
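The general idea of annotation-driven configuration can be illustrated with a small stand-alone sketch. The `@ResourceInfo` and `@Param` annotations and the `ExampleTokeniser` class below are hypothetical names invented for this illustration; they are not GATE’s own annotation types, which are documented in section 4.9. The sketch only shows how metadata that would otherwise sit in an XML descriptor can be placed next to the code and read back by reflection.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.util.Map;
import java.util.TreeMap;

final class AnnotationConfigDemo {
  // Hypothetical class-level annotation (not part of GATE): carries a display name.
  @Retention(RetentionPolicy.RUNTIME)
  @Target(ElementType.TYPE)
  @interface ResourceInfo {
    String name();
  }

  // Hypothetical setter-level annotation (not part of GATE): marks a parameter.
  @Retention(RetentionPolicy.RUNTIME)
  @Target(ElementType.METHOD)
  @interface Param {
    String defaultValue() default "";
  }

  // A made-up resource whose configuration lives beside the code it configures.
  @ResourceInfo(name = "Example Tokeniser")
  static class ExampleTokeniser {
    private String encoding;

    @Param(defaultValue = "UTF-8")
    public void setEncoding(String encoding) { this.encoding = encoding; }

    public String getEncoding() { return encoding; }
  }

  // Reads the display name from the class annotation, or null if it is absent.
  static String resourceName(Class<?> type) {
    ResourceInfo info = type.getAnnotation(ResourceInfo.class);
    return info == null ? null : info.name();
  }

  // Maps parameter names (derived from annotated setter names) to default values.
  static Map<String, String> parameterDefaults(Class<?> type) {
    Map<String, String> defaults = new TreeMap<String, String>();
    for (Method m : type.getMethods()) {
      Param p = m.getAnnotation(Param.class);
      String n = m.getName();
      if (p != null && n.startsWith("set") && n.length() > 3) {
        String name = Character.toLowerCase(n.charAt(3)) + n.substring(4);
        defaults.put(name, p.defaultValue());
      }
    }
    return defaults;
  }

  public static void main(String[] args) {
    System.out.println(resourceName(ExampleTokeniser.class));
    System.out.println(parameterDefaults(ExampleTokeniser.class));
  }
}
```

Keeping the metadata on the class means that renaming a setter and updating its description happen in one place, which is the main attraction of this style over a separate descriptor file.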

Ontology-based Gazetteer

Added a new plugin Ontology_Based_Gazetteer, which contains OntoRoot Gazetteer – a dynamically created gazetteer which, in combination with a few other generic GATE resources, is capable of producing ontology-aware annotations over the given content with regard to the given ontology. For more details see section 9.31.

Inter-annotator agreement and merging

New plugins to support tasks involving several annotators working on the same annotation task on the same documents. The “iaaPlugin” (section 13.6) computes inter-annotator agreement scores between the annotators, the “copyAS2AnoDoc” plugin (section 9.33) copies annotations from several parallel documents into a single master document, and the “annotationMerging” plugin (section 9.30) merges annotations from multiple annotators into a single “consensus” annotation set.

Packaging self-contained applications for GATE Teamware

Added a mechanism to assemble a saved GATE application along with all the resource files it uses into a single self-contained package to run on another machine (e.g. as a service in GATE Teamware). This is available as a menu option (section 3.24) which will work for most common cases, but for complex cases you can use the underlying Ant task described in section C.2.

GUI improvements

- A new schema-driven tool to streamline manual annotation tasks (see section 3.19.1).

- Context-sensitive help on elements in the resource tree, also available by pressing the F1 key. The mailing list can be searched from the Help menu. Help is displayed in your browser, or in a Java browser if you don’t have one.

- Improved search function inside documents with a regular expression builder. Search and replace annotation function in all annotation editors.

- Remember for each resource type the last path used when loading/saving a resource.

- Remember the last annotations selected in the annotation set view when you shift click on the annotation set view button.

- Improved context menus and, where possible, added drag and drop in the resource tree, annotation set view, annotation list view, corpus view and controller view. The context menu key can now be used if you have Java 1.6.

- New dialog box for error messages, with user-oriented messages, optional display of the configuration, and suggestions for useful actions. This will progressively replace the old stack trace dump in the message panel, which is still present for the moment but should be hidden by default in the future.

- Added a read-only document mode that can be enabled from the Options menu.

- Added a selection filter in the status bar of the annotations list table to easily select rows based on the text you enter.

- Added the last five applications loaded/saved to the context menu of the language resources in the resources tree.

- More information on what is going on is displayed in the waiting dialog box when running an application. The goal is to improve it to provide a global progress bar and an estimated time.

2.1.2 Other new features and improvements

- New parser plugins: A new plugin for the Stanford Parser (see section 9.13) and a rewritten plugin for the RASP NLP tools (section 9.11).

- A new sentence splitter, based on regular expressions, has been added to the ANNIE plugin. More details in section 8.4.

- “Real-time” corpus controller (section 4.6), which terminates processing of a document if it takes longer than a configurable timeout.

- Major update to the ANNIE OrthoMatcher coreference engine. It now correctly matches the sequence “David Jones ... David ... David Smith ... David” as referring to two people. It also handles nicknames (David = Dave) via a new nickname list. Added an optional parameter “highPrecisionOrgs” which, if set to true, turns off riskier organisation matching rules. Many miscellaneous bug fixes.

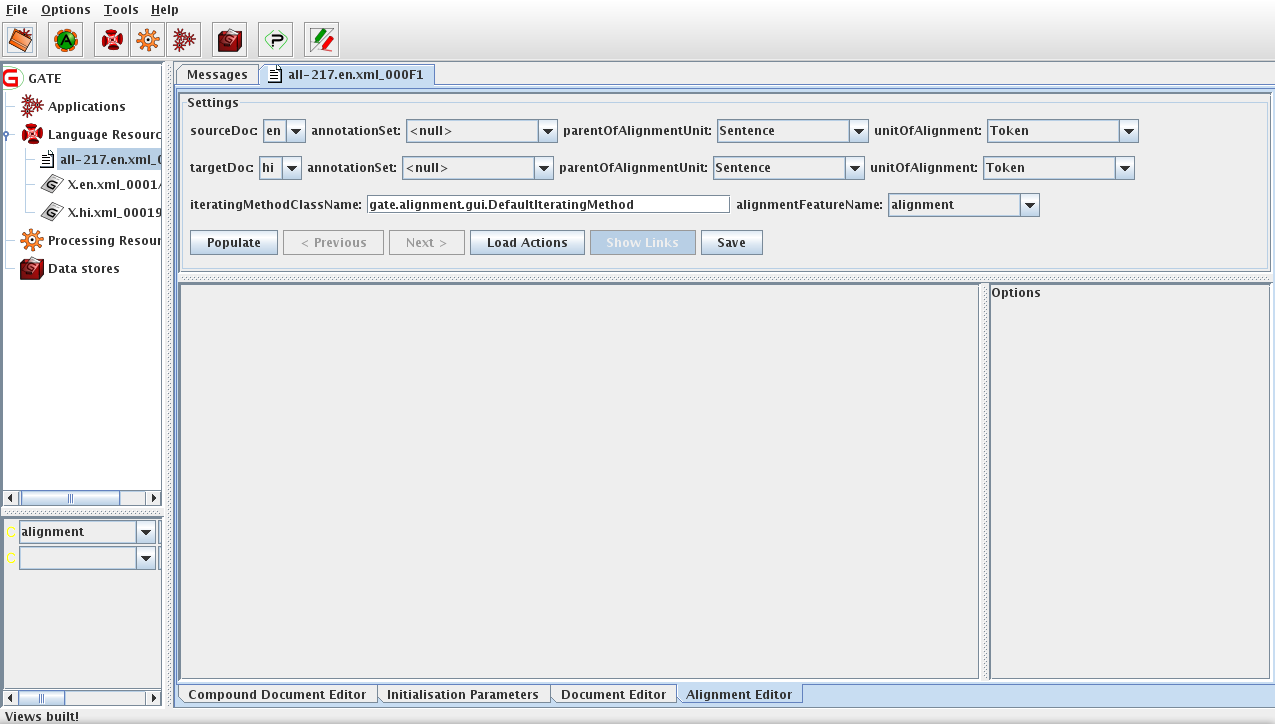

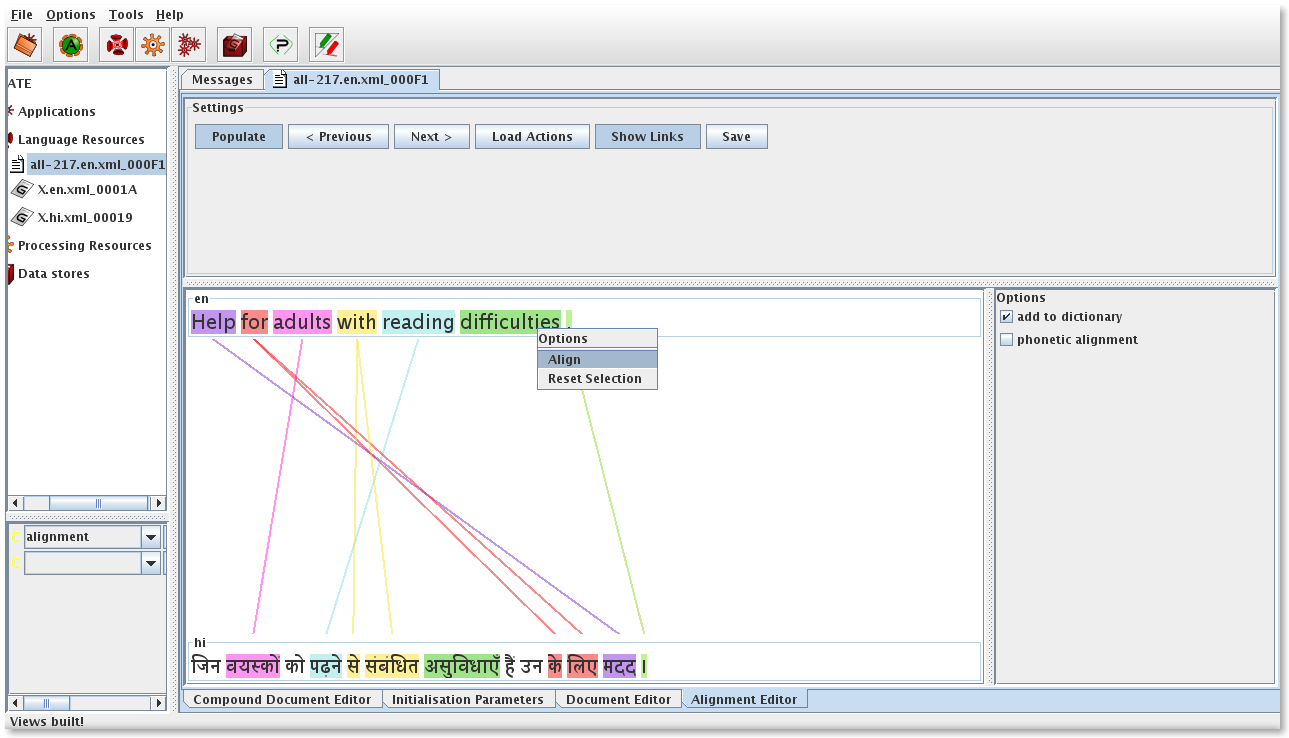

- Improved alignment editor (chapter 12) with several advanced features and an API for adding your own actions to the editor.

- A new plugin for Chinese word segmentation, which is based on our work using machine learning algorithms for the Sighan-05 Chinese word segmentation task. It can learn a model from manually segmented text, and apply a learned model to segment Chinese text. In addition several learned models are available with the plugin, which can be used to segment text. For details about the plugin and those learned models see Section 9.32.

- New features in the ML API to produce an n-gram based language model from a corpus and a so-called “document-term matrix” (see section 9.19). Also introduced features to support active learning, a new learning algorithm (PAUM) and various optimisations including the ability to use an external executable for SVM training. Full details in chapter 11.

- A new plugin to compute BDM scores for an ontology. The BDM score can be used to evaluate ontology based information extraction and classification. For details about the plugin see Section 13.7.

- Added new “getCovering” method to AnnotationSet. This method returns annotations that completely span the provided range. An optional annotation type parameter can be provided to further limit the returned set.

- Complete redesign of ANNIC GUI. More details in section 9.29.
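The “covering” semantics described above for the new AnnotationSet method can be sketched without the GATE API. In the stand-alone illustration below, `Span` and `covering` are hypothetical names chosen for the example, and the assumption is that an annotation covers a range when it starts at or before the range start and ends at or after the range end; consult the JavaDoc for the exact contract of the real method.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

final class CoveringDemo {
  // Minimal stand-in for an annotation: a type plus start and end offsets.
  static final class Span {
    final String type;
    final long start;
    final long end;

    Span(String type, long start, long end) {
      this.type = type;
      this.start = start;
      this.end = end;
    }
  }

  // Returns the spans that completely cover [from, to].
  // A null type means "any type", mirroring the optional type parameter.
  static List<Span> covering(List<Span> spans, String type, long from, long to) {
    List<Span> result = new ArrayList<Span>();
    for (Span s : spans) {
      boolean typeMatches = type == null || type.equals(s.type);
      if (typeMatches && s.start <= from && s.end >= to) {
        result.add(s);
      }
    }
    return result;
  }

  public static void main(String[] args) {
    List<Span> spans = Arrays.asList(
        new Span("Sentence", 0, 40),
        new Span("Token", 5, 9),
        new Span("Person", 5, 15),
        new Span("Token", 10, 15));
    // Everything that spans offsets 5..9, then only the Token annotations that do.
    System.out.println(covering(spans, null, 5, 9).size());
    System.out.println(covering(spans, "Token", 5, 9).size());
  }
}
```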

2.1.3 Specific bug fixes

- HTML document format parser: several bugs fixed, including a null pointer exception if the document contained certain characters illegal in HTML (#1754749). Also, the HTML parser now respects the “Add space on markup unpack” configuration option – previously it would always add space, even if the option was set to false.

- Fixed a severe performance bug in the ANNIE Pronominal Coreferencer resulting in a 50X speed improvement.

- JAPE did not always correctly handle the case when the input and output annotation sets for a transducer were different. This has now been fixed.

- ‘Save preserving document format’ was not correctly escaping ampersands and less-than signs when two HTML entities are close together. Only the first one was replaced: A & B & C was output as A &amp;amp; B & C instead of A &amp;amp; B &amp;amp; C. This has now been fixed, and the fix is also valid for the flexible exporter but only if the standoff annotations parameter is set to false.
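The intended behaviour of the last fix, escaping every ampersand and less-than sign rather than only the first, can be sketched in a few lines. This is a minimal stand-alone illustration and not GATE’s exporter code; the class and method names are hypothetical, and the sketch assumes the input is raw text that contains no entities of its own.

```java
final class EscapeDemo {
  // Escapes every '&' and '<' in a single left-to-right pass, so that
  // occurrences close to one another are all replaced.
  static String escapeMarkup(String text) {
    StringBuilder out = new StringBuilder(text.length() + 16);
    for (int i = 0; i < text.length(); i++) {
      char c = text.charAt(i);
      if (c == '&') {
        out.append("&amp;");
      } else if (c == '<') {
        out.append("&lt;");
      } else {
        out.append(c);
      }
    }
    return out.toString();
  }

  public static void main(String[] args) {
    System.out.println(escapeMarkup("A & B & C"));
  }
}
```

Working character by character avoids the class of bug where a replacement shifts later offsets and a nearby second occurrence is missed.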

Plus many more minor bug fixes.

2.2 Version 4.0 (July 2007)

2.2.1 Major new features

ANNIC

ANNotations In Context: a full-featured annotation indexing and retrieval system designed to support corpus querying and JAPE rule authoring. It is provided as part of an extension of the Serial Datastores, called Searchable Serial Datastore (SSD). See section 9.29 for more details.

New machine learning API

A brand new machine learning layer specifically targeted at NLP tasks including text classification, chunk learning (e.g. for named entity recognition) and relation learning. See chapter 11 for more details.

Ontology API

A new ontology API, based on OWL In Memory (OWLIM), which offers a better API, a revised ontology event model and an improved ontology editor, to name but a few. See chapter 10 for more details.

OCAT

Ontology-based Corpus Annotation Tool to help annotators to manually annotate documents using ontologies. For more details please see section 10.9.

Alignment Tools

A new set of components (e.g. CompoundDocument, AlignmentEditor etc.) that help in building alignment tools and in carrying out cross-document processing. See chapter 12 for more details.

New HTML Parser

A new HTML document format parser, based on Andy Clark’s NekoHTML. This parser is much better than the old one at handling modern HTML and XHTML constructs, JavaScript blocks, etc., though the old parser is still available for existing applications that depend on its behaviour.

Java 5.0 support

GATE now requires Java 5.0 or later to compile and run. This brings a number of benefits:

- Java 5.0 syntax is now available on the right hand side of JAPE rules with the default Eclipse compiler. See section B.8 for details.

- enum types are now supported for resource parameters. See section 3.13 for details on defining the parameters of a resource.

- AnnotationSet and the CreoleRegister take advantage of generic types. The AnnotationSet interface is now an extension of Set<Annotation> rather than just Set, which should make for cleaner and more type-safe code when programming to the API, and the CreoleRegister now uses parameterized types, which are backwards-compatible but provide better type-safety for new code.
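The benefit of the last two points can be seen in a small stand-alone sketch. The `Mode` enum and `Note` class below are hypothetical stand-ins invented for the example (they are not GATE types); the sketch shows a configuration string being mapped onto an enum value, and a parameterized `Set` being iterated without casts.

```java
import java.util.LinkedHashSet;
import java.util.Locale;
import java.util.Set;

final class Java5FeaturesDemo {
  // Hypothetical enum-valued parameter: only these values are accepted.
  enum Mode { STRICT, LENIENT }

  // Hypothetical stand-in for an annotation-like element.
  static final class Note {
    final String type;

    Note(String type) { this.type = type; }
  }

  // Maps a configuration string onto the enum; unknown values fail fast
  // with an IllegalArgumentException instead of being silently accepted.
  static Mode parseMode(String raw) {
    return Mode.valueOf(raw.trim().toUpperCase(Locale.ROOT));
  }

  // With Set<Note> rather than a raw Set, no cast is needed in the loop and
  // adding an element of the wrong type is rejected at compile time.
  static int countOfType(Set<Note> notes, String type) {
    int count = 0;
    for (Note note : notes) {
      if (note.type.equals(type)) {
        count++;
      }
    }
    return count;
  }

  public static void main(String[] args) {
    Set<Note> notes = new LinkedHashSet<Note>();
    notes.add(new Note("Token"));
    notes.add(new Note("Person"));
    System.out.println(countOfType(notes, "Token"));
    System.out.println(parseMode("lenient"));
  }
}
```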

2.2.2 Other new features and improvements

- Hiding the view for a particular resource (by right clicking on its tab and selecting “Hide this view”) will now completely close the associated viewers and dispose them. Re-selecting the same resource at a later time will lead to re-creating the necessary viewers and displaying them. This has two advantages: firstly it offers a mechanism for disposing views that are not needed any more without actually closing the resource and secondly it provides a way to refresh the view of a resource in the situations where it becomes corrupted.

- The DataStore viewer now allows multiple selections. This lets users load or delete an arbitrarily large number of resources in one operation.

- The Corpus editor has been completely overhauled. It now allows re-ordering of documents as well as sorting the document list by either index or document name.

- Support has been added for resource parameters of type gate.FeatureMap, and it is also possible to specify a default value for parameters whose type is Collection, List or Set. See section 3.13 for details.

- (Feature Request #1446642) After several requests, a mechanism has been added to allow overriding of GATE’s document format detection routine. A new creation-time parameter mimeType has been added to the standard document implementation, which forces a document to be interpreted as a specific MIME type and prevents the usual detection based on file name extension and other information. See section 6.5.1 for details.

- A capability has been added to specify arbitrary sets of additional features on individual gazetteer entries. These features are passed forward into the Lookup annotations generated by the gazetteer. See section 8.2 for details.

- As an alternative to the Google plugin, a new plugin called yahoo has been added to GATE to allow users to submit their query to the Yahoo search engine and to load the found pages as GATE documents. See section 9.22 for more details.

- It is now easier to run a corpus pipeline over a single document in the GATE GUI – documents now provide a right-click menu item to create a singleton corpus containing just this document. See section 3.12.1 for details.

- A new interface has been added that lets PRs receive notification at the start and end of execution of their containing controller. This is useful for PRs that need to do cleanup or other processing after a whole corpus has been processed. See section 4.6 for details.

- The GATE GUI does not call System.exit() any more when it is closed. Instead an effort is made to stop all active GATE threads and to release all GUI resources, which leads to the JVM exiting gracefully. This is particularly useful when GATE is embedded in other systems as closing the main GATE window will not kill the JVM process any more.

- The set of AnnotationSchemas that used to be included in the core gate.jar and loaded as builtins has now been moved to the ANNIE plugin. When the plugin is loaded, the default annotation schemas are instantiated automatically and are available when doing manual annotation.

- There is now support in creole.xml files for automatically creating instances of a resource that are hidden (i.e. do not show in the GUI). One example of this can be seen in the creole.xml file of the ANNIE plugin where the default annotation schemas are defined.

- A couple of helper classes have been added to assist in using GATE within a Spring application. Section 3.29 explains the details.

- Improvements have been made to the thread-safety of some internal GATE components, which mean that it is now safe to create resources in multiple threads (though it is not safe to use the same resource instance in more than one thread). This is a big advantage when using GATE in a multithreaded environment, such as a web application. See section 3.31 for details.

- Plugins can now provide custom icons for their PRs and LRs in the plugin JAR file. See section 3.13 for details.

- It is now possible to override the default location for the saved session file using a system property. See section 3.3 for details.

- The TreeTagger plugin supports a system property to specify the location of the shell interpreter used for the tagger shell script. In combination with Cygwin this makes it much easier to use the tagger on Windows. See section 9.7 for details.

- The Buchart plugin has been removed. It is superseded by SUPPLE, and instructions on how to upgrade your applications from Buchart to SUPPLE are given in section 9.12. The probability finder plugin has also been removed, as it is no longer maintained.

- The bootstrap wizard now creates a basic plugin that builds with Ant. Since a Unix-style make command is no longer required this means that the generated plugin will build on Windows without needing Cygwin or MinGW.

- The GATE source code has moved from CVS into Subversion. See section 3.2.3 for details of how to check out the code from the new repository.

- An optional parameter, keepOriginalMarkupsAS, has been added to the DocumentReset PR which allows users to decide whether to keep the Original Markups AS or not while resetting the document. See section 9.1 for more details.
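The thread-safety rule stated in the list above (resources may be created in several threads, but one instance must not be shared between threads) suggests a one-instance-per-thread pattern. The sketch below is a generic illustration of that pattern using the JDK’s `ThreadLocal`; `Worker` is a hypothetical stand-in for a stateful resource, and nothing here is GATE API. Section 3.31 describes the approach recommended for GATE itself.

```java
import java.util.Collections;
import java.util.IdentityHashMap;
import java.util.Set;

final class PerThreadResourceDemo {
  // Hypothetical stand-in for a resource with mutable, unsynchronised state.
  static final class Worker {
    private int processed;

    int process(String text) {
      processed++;
      return text.length();
    }

    int processedCount() { return processed; }
  }

  // Each thread lazily creates its own Worker and then reuses it.
  private static final ThreadLocal<Worker> WORKER = new ThreadLocal<Worker>() {
    @Override
    protected Worker initialValue() { return new Worker(); }
  };

  static Worker current() { return WORKER.get(); }

  // Starts the given number of threads and reports how many distinct
  // Worker instances they used between them.
  static int distinctWorkers(int threads) {
    final Set<Worker> seen = Collections.synchronizedSet(
        Collections.newSetFromMap(new IdentityHashMap<Worker, Boolean>()));
    Thread[] pool = new Thread[threads];
    for (int i = 0; i < threads; i++) {
      pool[i] = new Thread(new Runnable() {
        public void run() {
          current().process("some text");
          current().process("more text");
          seen.add(current());
        }
      });
      pool[i].start();
    }
    try {
      for (Thread t : pool) {
        t.join();
      }
    } catch (InterruptedException e) {
      Thread.currentThread().interrupt();
      throw new IllegalStateException("interrupted while waiting", e);
    }
    return seen.size();
  }

  public static void main(String[] args) {
    System.out.println(distinctWorkers(4));
  }
}
```

In a web application a pool of pre-built instances is a common alternative, since it bounds the number of instances independently of the number of request threads.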

2.2.3 Bug fixes and optimizations

- The Morphological Analyser has been optimized. A new FSM-based implementation, with minor alterations to the basic FSM algorithm, replaces the previous one. Earlier profiling figures showed that the morpher, when integrated with the ANNIE application, used to take up to 60% of the overall processing time. The optimized version takes only 7.6% of the total processing time. See section 9.9 for more details on the morpher.

- The ANNIE Sentence Splitter was optimised. The new version is about twice as fast as the previous one. The actual speed increase varies widely depending on the nature of the document.

- The implementation of the OrthoMatcher component has been improved. This resource now takes significantly less time on large documents.

- The implementation of AnnotationSets has been improved. GATE now requires up to 40% less memory to run and is also 20% faster on average. The get methods of AnnotationSet return instances of ImmutableAnnotationSet. Any attempt at modifying the content of these objects will trigger an Exception. An empty ImmutableAnnotationSet is returned instead of null.

- The Chemistry tagger (section 9.16) has been updated with a number of bugfixes and improvements.

- The Document user interface has been optimised to deal better with large bursts of events which tend to occur when the document that is currently displayed gets modified. The main advantages brought by this new implementation are:

- The document UI refreshes faster than before.

- The presence of the GUI for a document induces a smaller performance penalty than it used to. Due to a better threading implementation, machines benefiting from multiple CPUs (e.g. dual CPU, dual core or hyperthreading machines) should only see a negligible increase in processing time when a document is displayed compared to the situations where the document view is not shown. In the previous version, displaying a document while it was processed used to increase execution time by an order of magnitude.

- The GUI is more responsive now when a large number of annotations are displayed, hidden or deleted.

- The strange exceptions that used to occur occasionally while working with the document GUI should not happen any more.
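The AnnotationSet change noted above (get methods return immutable sets, and an empty set instead of null) follows a pattern that can be sketched with the JDK collections alone. The class below is a hypothetical illustration, not GATE code. The source says only that modification triggers “an Exception”; the JDK wrappers used here throw `UnsupportedOperationException`, which is an assumption about the analogous behaviour rather than a statement about GATE.

```java
import java.util.Collections;
import java.util.LinkedHashSet;
import java.util.Set;

final class ReadOnlyViewDemo {
  private final Set<String> items = new LinkedHashSet<String>();

  void add(String item) { items.add(item); }

  // Returns the items starting with the given prefix as a read-only set.
  // The result is never null: no matches yields an empty read-only set.
  Set<String> get(String prefix) {
    Set<String> matches = new LinkedHashSet<String>();
    for (String item : items) {
      if (item.startsWith(prefix)) {
        matches.add(item);
      }
    }
    return Collections.unmodifiableSet(matches);
  }

  // Reports whether an attempt to modify the given set is rejected.
  static boolean rejectsModification(Set<String> set) {
    try {
      set.add("extra");
      return false;
    } catch (UnsupportedOperationException e) {
      return true;
    }
  }

  public static void main(String[] args) {
    ReadOnlyViewDemo demo = new ReadOnlyViewDemo();
    demo.add("Token:1");
    demo.add("Person:2");
    System.out.println(demo.get("Token"));
    System.out.println(demo.get("Missing").isEmpty());
    System.out.println(rejectsModification(demo.get("Token")));
  }
}
```

Callers written against the older behaviour need two adjustments under this pattern: null checks on results can become `isEmpty()` checks, and code that edited a returned set must copy it first.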

And as always there are many smaller bugfixes too numerous to list here...

2.3 Version 3.1 (April 2006)

2.3.1 Major new features

Support for UIMA

UIMA (http://www.research.ibm.com/UIMA/) is a language processing framework developed by IBM. UIMA and GATE share some functionality but are complementary in most respects. GATE now provides an interoperability layer to allow UIMA applications to include GATE components in their processing and vice-versa. For full information, see chapter 16.

New Ontology API

The ontology layer has been rewritten in order to provide an abstraction layer between the model representation and the tools used for input and output of the various representation formats. An implementation that uses Jena 2 (http://jena.sourceforge.net/ontology) for reading and writing OWL and RDF(S) is provided.

Ontotext Japec Compiler

Japec is a compiler for JAPE grammars developed by Ontotext Lab. It has some limitations compared to the standard JAPE transducer implementation, but can run JAPE grammars up to five times as fast. By default, GATE still uses the stable JAPE implementation, but if you want to experiment with Japec, see section 9.28.

2.3.2 Other new features and improvements

- Addition of a new JAPE matching style “all”. This is similar to Brill, but once all rules from a given start point have matched, matching continues from the offset immediately following that start point, rather than from the position in the document where the longest match finishes. More details can be found in Section 7.3.

- Limited support for loading PDF and Microsoft Word document formats. Only the text is extracted from the documents, no formatting information is preserved.

- The Buchart parser has been deprecated and replaced by a new plugin called SUPPLE - the Sheffield University Prolog Parser for Language Engineering. Full details, including information on how to move your application from Buchart to SUPPLE, are in section 9.12.

- The Hepple POS Tagger is now open-source. The source code has been included in the GATE distribution, under src/hepple/postag. More information about the POS Tagger can be found in Section 8.5.

- Minipar is now supported on Windows. minipar-windows.exe, a modified version of pdemo.cpp, has been added under the gate/plugins/minipar directory to allow users to run Minipar on the Windows platform. When using Minipar on Windows, this binary should be provided as the value of the miniparBinary parameter. For full information on Minipar in GATE, see section 9.10.

- The XmlGateFormat writer (Save As Xml from the GATE GUI, gate.Document.toXml() from the GATE API) and reader have been modified to write and read GATE annotation IDs. For backward compatibility the old reader has been kept. This change fixes a bug which manifested itself in the following situation: if a GATE document had annotations carrying features whose values were numbers representing other GATE annotation IDs, then after saving the document to XML and reloading it, those feature values could have become invalid by pointing to other annotations. By saving and restoring the GATE annotation IDs, the consistency of the GATE document is maintained. For more information, see Section 6.5.2.

- The NP chunker and chemistry tagger plugins have been updated. Mark Greenwood has relicenced them under the LGPL, so their source code has been moved into the GATE distribution. See sections 9.3 and 9.16 for details.

- The Tree Tagger wrapper has been updated with an option to be less strict when characters that cannot be represented in the tagger’s encoding are encountered in the document. Details are in section 9.7.

- JAPE Transducers can be serialized into binary files. A serialized JAPE Transducer can be loaded via the init-time parameter binaryGrammarURL, which can be used as an alternative to the parameter grammarURL. More information can be found in Section 7.9.

- On Mac OS, GATE now behaves more ‘naturally’. The application menu items and keyboard shortcuts for About and Preferences now do what you would expect, and exiting GATE with command-Q or the Quit menu item properly saves your options and current session.

- Updated versions of Weka (3.4.6) and Maxent (2.4.0).

- Optimisation in gate.creole.ml: the conversion of AnnotationSet into ML examples is now faster.

- It is now possible to create your own implementation of Annotation, and have GATE use this instead of the default implementation. See AnnotationFactory and AnnotationSetImpl in the gate.annotation package for details.
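The difference between the Brill and “all” matching styles mentioned in the list above can be illustrated with a toy model. The sketch below is not GATE code: it assumes plain integer offsets and reduces the rules to a hypothetical array giving the length of the longest match available at each start offset. It only shows where matching restarts under each style.

```java
import java.util.ArrayList;
import java.util.List;

/**
 * Toy model of where a JAPE-like matcher restarts after rules have fired.
 * Assumption: "rules" are reduced to the longest match length per offset.
 */
final class MatchingStyleSketch {

    /**
     * @param longestMatchAt longestMatchAt[i] is the length of the longest
     *        match starting at offset i, or 0 if nothing matches there
     * @param allStyle true for the "all" style, false for Brill
     * @return the start offsets at which rules fired, in order
     */
    static List<Integer> firingOffsets(int[] longestMatchAt, boolean allStyle) {
        List<Integer> fired = new ArrayList<Integer>();
        int pos = 0;
        while (pos < longestMatchAt.length) {
            int longest = longestMatchAt[pos];
            if (longest <= 0) {
                pos++;               // nothing matched here, try the next offset
                continue;
            }
            fired.add(pos);          // every rule matching at pos fires
            if (allStyle) {
                pos++;               // "all": restart at the very next offset
            } else {
                pos += longest;      // Brill: restart where the longest match ended
            }
        }
        return fired;
    }
}
```

With match lengths {3, 2, 0, 1}, the Brill style skips offset 1 because it lies inside the longest match starting at offset 0, whereas the “all” style also tries offset 1.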

2.3.3 Bug fixes

- The Tree Tagger wrapper has been updated in order to run under Windows. See 9.7.

- The SUPPLE parser has been made more user-friendly. It now produces more helpful error messages if things go wrong. Note that you will need to update any saved applications that include SUPPLE to work with this version - see section 9.12 for details.

- Miscellaneous fixes in the Ontotext JapeC compiler.

- Optimization: the creation of a Document is now much faster.

- Google plugin: The optional pagesToExclude parameter was causing a NullPointerException when left empty at run time. Full details about the plugin functionality can be found in section 9.21.

- Minipar, SUPPLE, TreeTagger: These plugins, which call external processes, have been fixed to cope better with path names that contain spaces. Note that some of the external tools themselves still have problems handling spaces in file names, but these are beyond our control to fix. If you want to use any of these plugins, be sure to read the documentation to see if they have any such restrictions.

- When using a non-default location for GATE configuration files, the configuration data is saved back to the correct location when GATE exits. Previously the default locations were always used.

- Jape Debugger: ConcurrentModificationException in JAPE debugger. The JAPE debugger was generating a ConcurrentModificationException during an attempt to run ANNIE. There was no exception when running without the debugger enabled. As a result of the fix, one unnecessary and incorrect callback to the debugger was removed from the SinglePhaseTransducer class.

- Plus many other small bugfixes...

2.4 January 2005

Release of version 3.

New plugins for processing in various languages (see 9.15). These are not full IE systems but are designed as starting points for further development (French, German, Spanish, etc.), or as sample or toy applications (Cebuano, Hindi, etc.).

Other new plugins:

- Chemistry Tagger (Section 9.16)

- Montreal Transducer (Section 9.14)

- RASP Parser (Section 9.11)

- MiniPar (Section 9.10)

- Buchart Parser (Section 9.12)

- MinorThird (Section 9.25)

- NP Chunker (Section 9.3)

- Stemmer (Section 9.8)

- TreeTagger (Section 9.7)

- Probability Finder

- Crawler (Section 9.20)

- Google PR (Section 9.21)

Support for SVM Light, a support vector machine implementation, has been added to the machine learning plugin (see section 9.24.7).

2.5 December 2004

GATE no longer depends on the Sun Java compiler to run, which means it will now work on any Java runtime environment of at least version 1.4. JAPE grammars are now compiled using the Eclipse JDT Java compiler by default.

A welcome side-effect of this change is that it is now much easier to integrate GATE-based processing into web applications in Tomcat. See section 3.30 for details.

2.6 September 2004

GATE applications are now saved in XML format using the XStream library, rather than by using native Java serialization. On loading an application, GATE will automatically detect whether it is in the old or the new format, and so applications in both formats can be loaded. However, older versions of GATE will be unable to load applications saved in the XML format. (A java.io.StreamCorruptedException: invalid stream header exception will occur.) It is possible to get new versions of GATE to use the old format by setting a flag in the source code. (See the Gate.java file for details.) This change has been made because it allows the details of an application to be viewed and edited in a text editor, which is sometimes easier than loading the application into GATE.
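This guide does not describe how GATE tells the two formats apart. One plausible approach, sketched here as an assumption rather than as GATE's actual implementation, is to look at the first two bytes of the file: every native Java serialization stream begins with the magic number 0xACED, and its absence is what makes ObjectInputStream report an invalid stream header. The class and method names below are hypothetical.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectOutputStream;
import java.io.ObjectStreamConstants;
import java.io.Serializable;

/** Hypothetical helper: guesses the saved format from the leading bytes. */
final class SavedFormatSniffer {

    /** True if the bytes start with the Java serialization magic number 0xACED. */
    static boolean looksLikeJavaSerialization(byte[] head) {
        if (head == null || head.length < 2) {
            return false;
        }
        int magic = ((head[0] & 0xFF) << 8) | (head[1] & 0xFF);
        return magic == (ObjectStreamConstants.STREAM_MAGIC & 0xFFFF);
    }

    /** Serializes a value in memory so the check can be tried without a file. */
    static byte[] serialize(Serializable value) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            ObjectOutputStream out = new ObjectOutputStream(bytes);
            out.writeObject(value);
            out.close();
            return bytes.toByteArray();
        } catch (IOException e) {
            throw new IllegalStateException("in-memory serialization failed", e);
        }
    }
}
```

A loader built this way would hand the file to the old deserializer when the check succeeds and to the XML reader otherwise.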

2.7 Version 3 Beta 1 (August 2004)

Version 3 incorporates a lot of new functionality and some reorganisation of existing components.

Note that Beta 1 is feature-complete but needs further debugging (please send us bug reports!).

Highlights include: completely rewritten document viewer/editor; extensive ontology support; a new plugin management system; separate .jar files and a Tomcat classloading fix; lots more CREOLE components (and some more to come soon).

Almost all the changes are backwards-compatible; some recent classes have been renamed (particularly the ontologies support classes) and a few events added (see below); datastores created by version 3 will probably not read properly in version 2. If you have problems, use the mailing list and we’ll help you fix your code!

The gory details:

- Anonymous CVS is now available. See section 3.2.3 for details.

- CREOLE repositories and the components they contain are now managed as plugins. You can select the plugins the system knows about (and add new ones) by going to “Manage CREOLE Plugins” on the File menu.

- The gate.jar file no longer contains all the subsidiary libraries and CREOLE component resources. This makes it easier to replace library versions and/or not load them when not required (libraries used by CREOLE builtins will now not be loaded unless you ask for them from the plugins manager console).

- ANNIE and other bundled components now have their resource files (e.g. pattern files, gazetteer lists) in a separate directory in the distribution – gate/plugins.

- Some testing with Sun’s JDK 1.5 pre-releases has been done and no problems reported.

- The gate:// URL system used to load CREOLE and ANNIE resources in past releases is no longer needed. This means that loading in systems like Tomcat is now much easier.

- Mac OS X is now properly supported by the installer and the runtime.

- An Ontology-based Corpus Annotation Tool (OCAT) has been implemented as a GATE plugin. Documentation of its functionality is in Section 10.9.

- The NLG Lexical tools from the MIAKT project have now been released. See documentation in Section 9.26.

- The Features viewer/editor has been completely updated – see Sections 3.16 and 3.19 for details.

- The Document editor has been completely rewritten – see Section 3.6 for more information.

- The datastore viewer is now a full-size VR – see Section 3.22 for more information.

2.8 July 2004

GATE Documents now fire events when the document content is edited. This was added in order to support the new facility of editing documents from the GUI. This change will break backwards compatibility by requiring all DocumentListener implementations to implement a new method:

public void contentEdited(DocumentEvent e);
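For most existing listeners, the fix is to add the new method, possibly with an empty body. The sketch below is self-contained and therefore uses stand-in types (SketchDocumentEvent, SketchDocumentListener) rather than the real GATE classes. The two older callback names and the description field are assumptions made for illustration and should be checked against the GATE Javadoc.

```java
import java.util.ArrayList;
import java.util.List;

/** Stand-in for GATE's DocumentEvent; the field is hypothetical. */
final class SketchDocumentEvent {
    final String description;

    SketchDocumentEvent(String description) {
        this.description = description;
    }
}

/** Stand-in listener interface: older callbacks plus the new one. */
interface SketchDocumentListener {
    void annotationSetAdded(SketchDocumentEvent e);    // assumed existing callback

    void annotationSetRemoved(SketchDocumentEvent e);  // assumed existing callback

    void contentEdited(SketchDocumentEvent e);         // the newly required method
}

/** A listener that only cares about content edits and records them. */
final class EditLoggingListener implements SketchDocumentListener {
    final List<String> edits = new ArrayList<String>();

    public void annotationSetAdded(SketchDocumentEvent e) {
        // not of interest to this listener
    }

    public void annotationSetRemoved(SketchDocumentEvent e) {
        // not of interest to this listener
    }

    public void contentEdited(SketchDocumentEvent e) {
        edits.add(e.description);   // react to the edit, here by logging it
    }
}
```

A listener written before this change would lack the last method and would no longer compile against the updated interface.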

2.9 June 2004

A new algorithm has been implemented for the AnnotationDiff function. A new, more usable, GUI is included, and an “Export to HTML” option added. More details about the AnnotationDiff tool are in Section 3.25.

A new build process, based on ANT (http://ant.apache.org/) is now available for GATE. The old build process, based on make, is now unsupported. See Section 3.8 for details of the new build process.

A Jape Debugger from Ontos AG has been integrated into GATE. You can turn the integration on with the command-line option “-j”. If you run the GATE GUI with this option, a new menu item for the Jape Debugger GUI will appear in the Tools menu. Integration is off by default. We are currently awaiting documentation for this.

NOTE! A ClassCastException is thrown if you try to debug a ConditionalCorpusPipeline. The Jape Debugger is designed for Corpus Pipeline only. The Ontos code needs to be changed to allow debugging of ConditionalCorpusPipeline.

2.10 April 2004

GATE now has two alternative strategies for ontology-aware grammar transduction:

- using the [ontology] feature both in grammars and annotations, with the default Transducer.

- using the ontology-aware transducer, which passes an ontology LR to a new subsume method in SimpleFeatureMapImpl. This strategy does not check for ontology features, which makes grammars easier to write because there is no need to specify the ontology.

The changes are in:

- SinglePhaseTransducer (always call subsume with ontology – if null then the ordinary subsumption takes place)

- SimpleFeatureMapImpl (new subsume method using an ontology LR)

More information about the ontology-aware transducer can be found in Section 10.6.
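The idea behind an ontology-aware subsume check can be sketched in plain Java. This is not the SimpleFeatureMapImpl code. It assumes that feature maps are string-to-string maps, that the ontology is a hypothetical child-to-parent map, and that the class is stored under a feature named "class". It also assumes that a constraint is satisfied when the annotation's class is the same as, or a descendant of, the class named in the grammar. Passing null for the ontology falls back to ordinary equality-based subsumption.

```java
import java.util.Map;

/** Toy ontology-aware feature subsumption; all names here are illustrative. */
final class OntologySubsumptionSketch {

    static final String CLASS_FEATURE = "class";   // assumed feature name

    /** Walks child-to-parent links; true if cls equals ancestor or descends from it. */
    static boolean isSameOrSubClass(String cls, String ancestor,
                                    Map<String, String> parentOf) {
        int guard = parentOf.size() + 1;           // protects against accidental cycles
        String current = cls;
        while (current != null && guard-- >= 0) {
            if (current.equals(ancestor)) {
                return true;
            }
            current = parentOf.get(current);
        }
        return false;
    }

    /** True if the annotation's features satisfy every feature in the constraint. */
    static boolean subsumes(Map<String, String> annotation,
                            Map<String, String> constraint,
                            Map<String, String> parentOf) {
        for (Map.Entry<String, String> wanted : constraint.entrySet()) {
            String actual = annotation.get(wanted.getKey());
            if (actual == null) {
                return false;                      // required feature is missing
            }
            boolean ontologyAware =
                parentOf != null && CLASS_FEATURE.equals(wanted.getKey());
            boolean ok = ontologyAware
                ? isSameOrSubClass(actual, wanted.getValue(), parentOf)
                : actual.equals(wanted.getValue());
            if (!ok) {
                return false;
            }
        }
        return true;
    }
}
```

With City recorded as a child of Location, an annotation of class City satisfies a constraint on Location when the ontology is supplied, but not when it is null.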

A morphological analyser PR has been added to GATE. This finds the root and affix values of a token and adds them as features to that token.

A flexible gazetteer PR has been added to GATE. This performs lookup over a document based on the values of an arbitrary feature of an arbitrary annotation type, by using an externally provided gazetteer. See 9.5 for details.

2.11 March 2004

Support was added for the MAXENT machine learning library. (See 9.24.6 for details.)

2.12 Version 2.2 – August 2003

Note that GATE 2.2 works with JDK 1.4.0 or above. Version 1.4.2 is recommended, and is the one included with the latest installers.

GATE has been adapted to work with PostgreSQL 7.3. Compatibility with PostgreSQL 7.2 has been preserved. See 3.38 for more details.

New library version – Lucene 1.3 (rc1)

A bug in gate.util.Javac has been fixed in order to account for situations when String literals require an encoding different from the platform default.

Temporary .java files used to compile JAPE RHS actions are now saved using UTF-8 and the “-encoding UTF-8” option is passed to the javac compiler.

A custom tools.jar is no longer necessary.

Minor changes have been made to the look and feel of GATE to improve its appearance with JDK 1.4.2.

Some bug fixes (087, 088, 089, 090, 091, 092, 093, 095, 096 – see http://gate.ac.uk/gate/doc/bugs.html for more details).

2.13 Version 2.1 – February 2003

Integration of Machine Learning PR and WEKA wrapper (see Section 9.24).

Addition of DAML+OIL exporter.

Integration of WordNet in GATE (see Section 9.23).

The syntax tree viewer has been updated to fix some bugs.

2.14 June 2002

Conditional versions of the controllers are now available (see Section 3.15). These allow processing resources to be run conditionally on document features.
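The following sketch illustrates the general idea of running a processing step only when a document feature has a given value. It is a stand-alone toy with hypothetical names (Step, stepsToRun) and does not use the GATE controller API. A step with no feature name is treated as always running.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Toy conditional pipeline; not the GATE controller implementation. */
final class ConditionalPipelineSketch {

    /** One pipeline step: a name plus an optional feature condition. */
    static final class Step {
        final String name;
        final String featureName;    // null means "always run"
        final String featureValue;

        Step(String name, String featureName, String featureValue) {
            this.name = name;
            this.featureName = featureName;
            this.featureValue = featureValue;
        }

        /** True if this step should run on a document with the given features. */
        boolean shouldRun(Map<String, String> documentFeatures) {
            if (featureName == null) {
                return true;
            }
            return featureValue != null
                && featureValue.equals(documentFeatures.get(featureName));
        }
    }

    /** Returns the names of the steps that would run, in pipeline order. */
    static List<String> stepsToRun(List<Step> steps,
                                   Map<String, String> documentFeatures) {
        List<String> selected = new ArrayList<String>();
        for (Step step : steps) {
            if (step.shouldRun(documentFeatures)) {
                selected.add(step.name);
            }
        }
        return selected;
    }
}
```

For a document whose hypothetical "lang" feature is "fr", a pipeline of an unconditional tokeniser, an English-only tagger and a French-only tagger would run the tokeniser and the French tagger.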

PostgreSQL Data Stores are now supported (see Section 4.7). These store data into a PostgreSQL RDBMS.

Addition of OntoGazetteer (see Section 5.2), an interface which makes ontologies visible within GATE, and supports basic methods for hierarchy management and traversal.

Integration of Protégé, so that people with developed Protégé ontologies can use them within GATE.

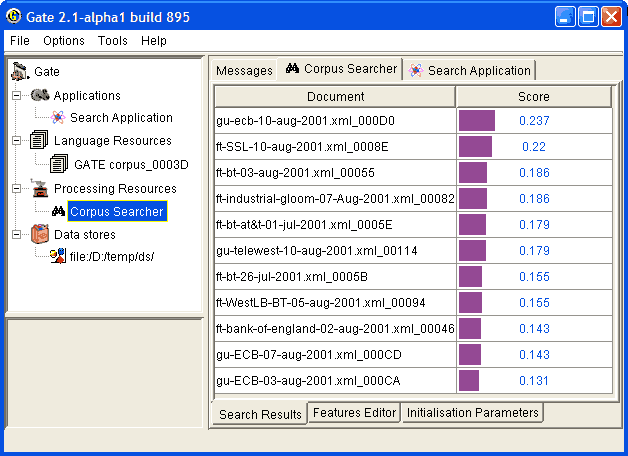

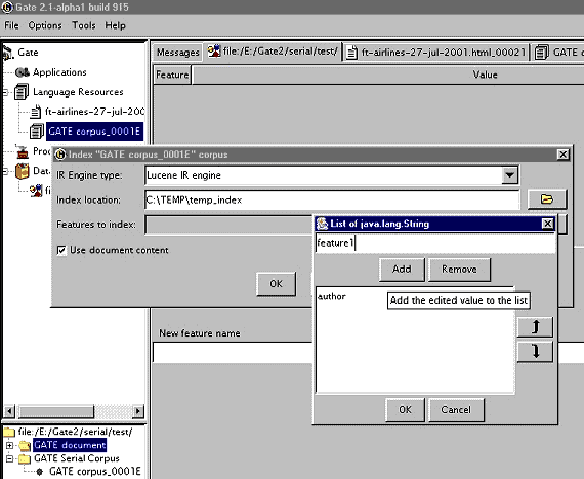

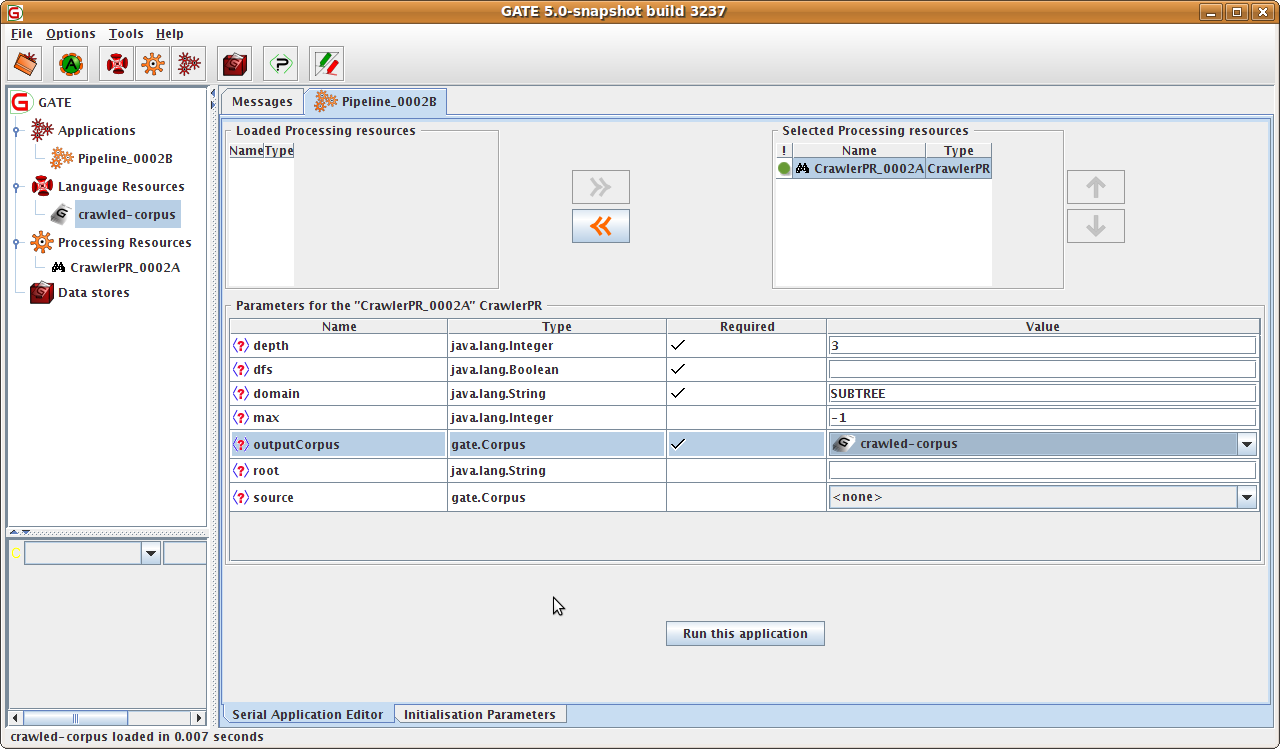

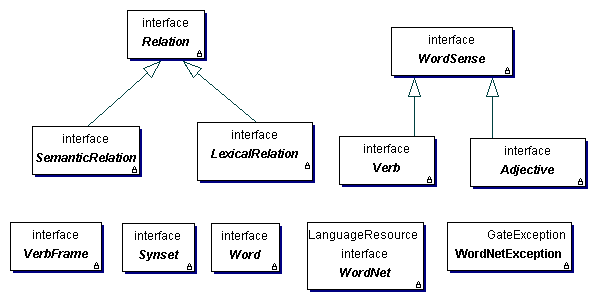

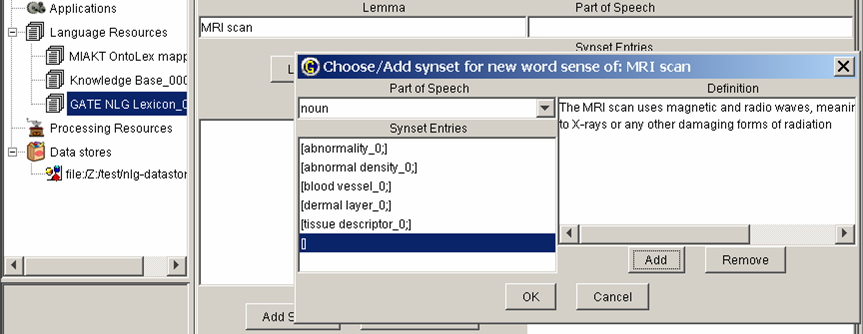

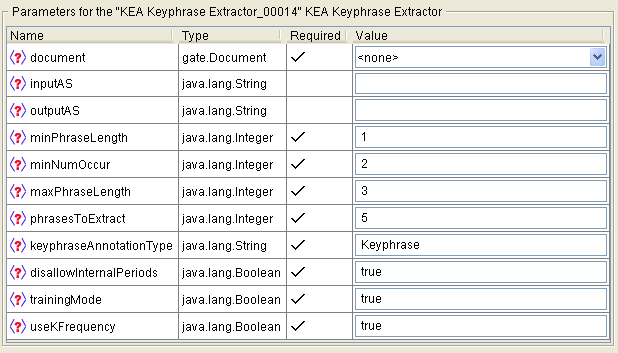

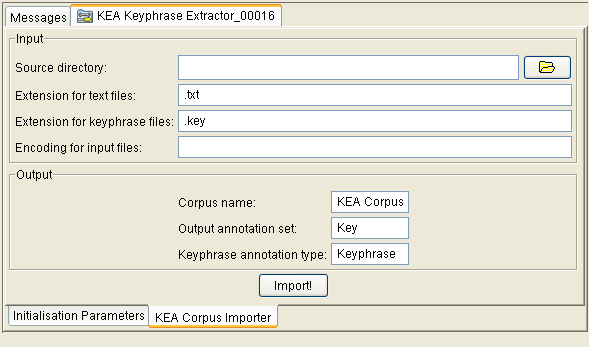

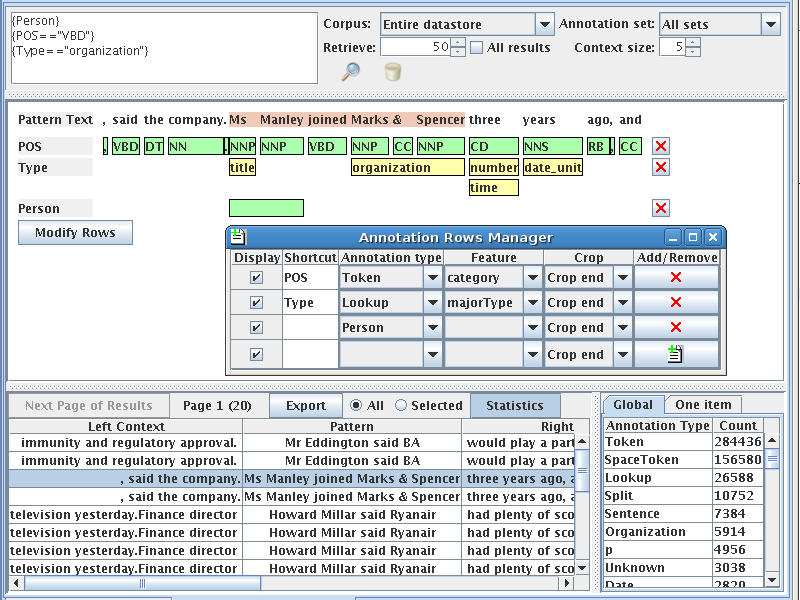

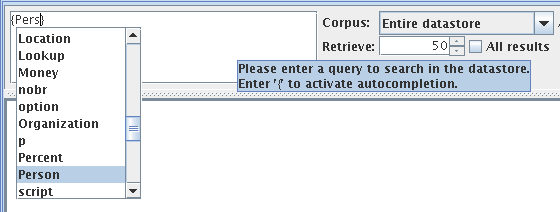

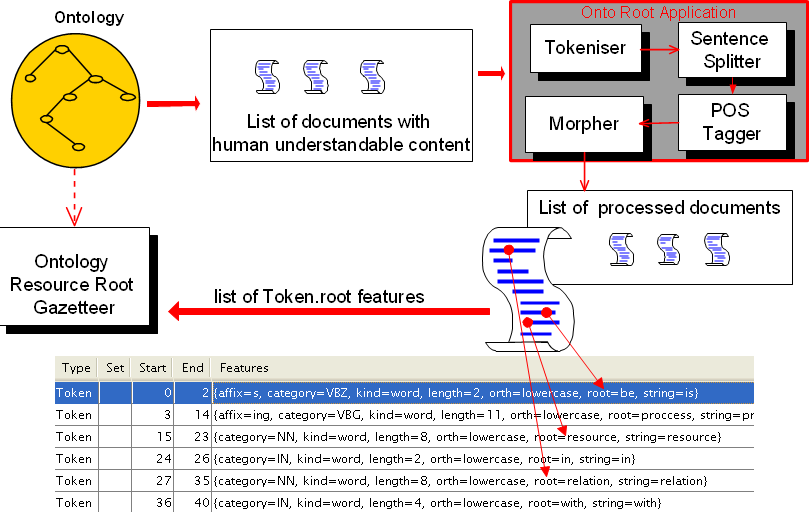

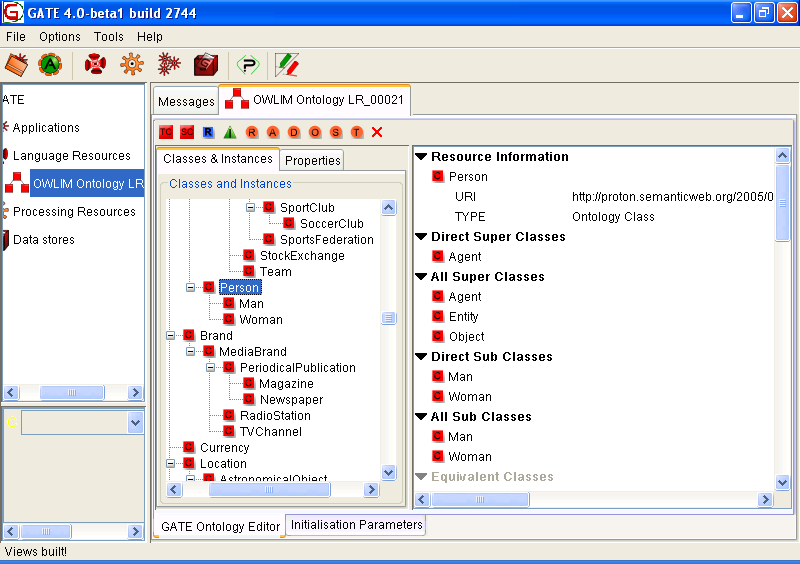

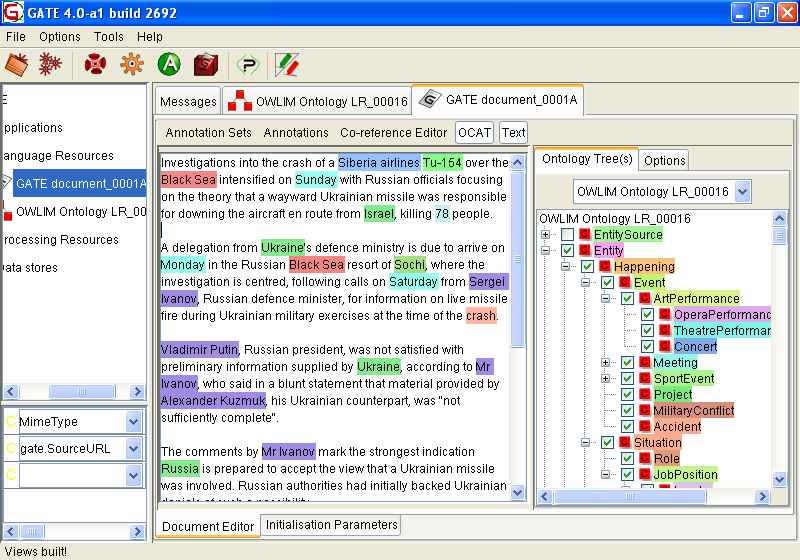

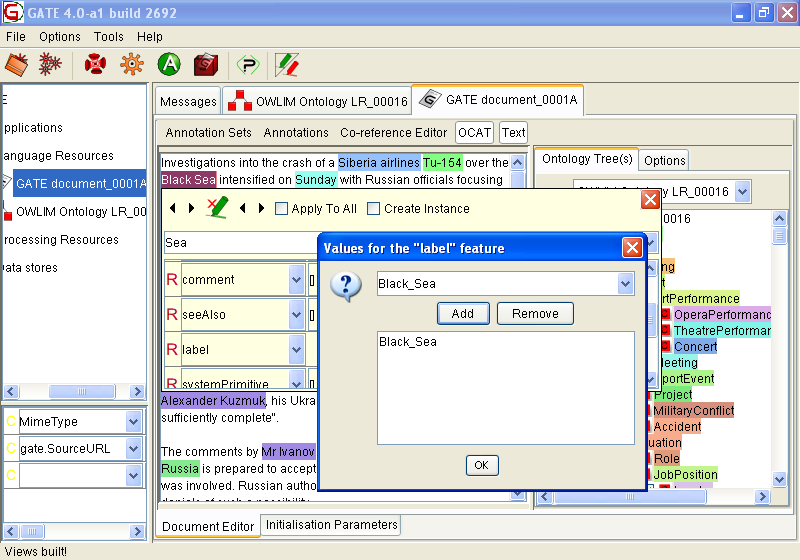

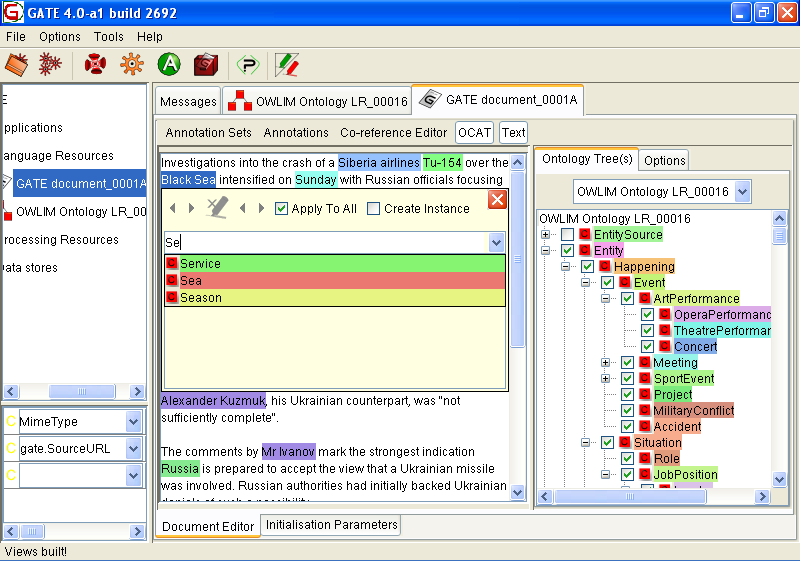

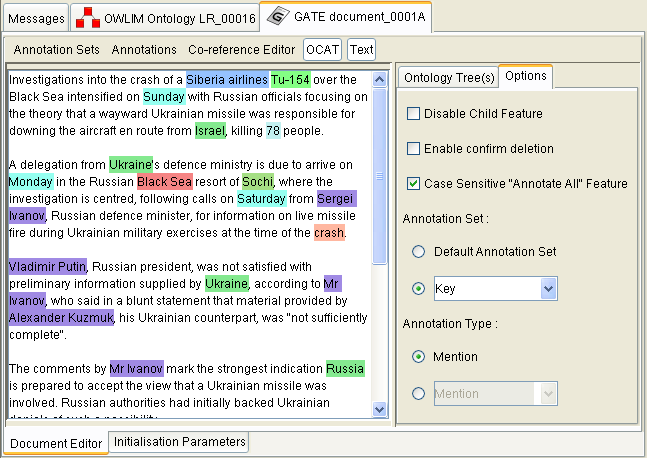

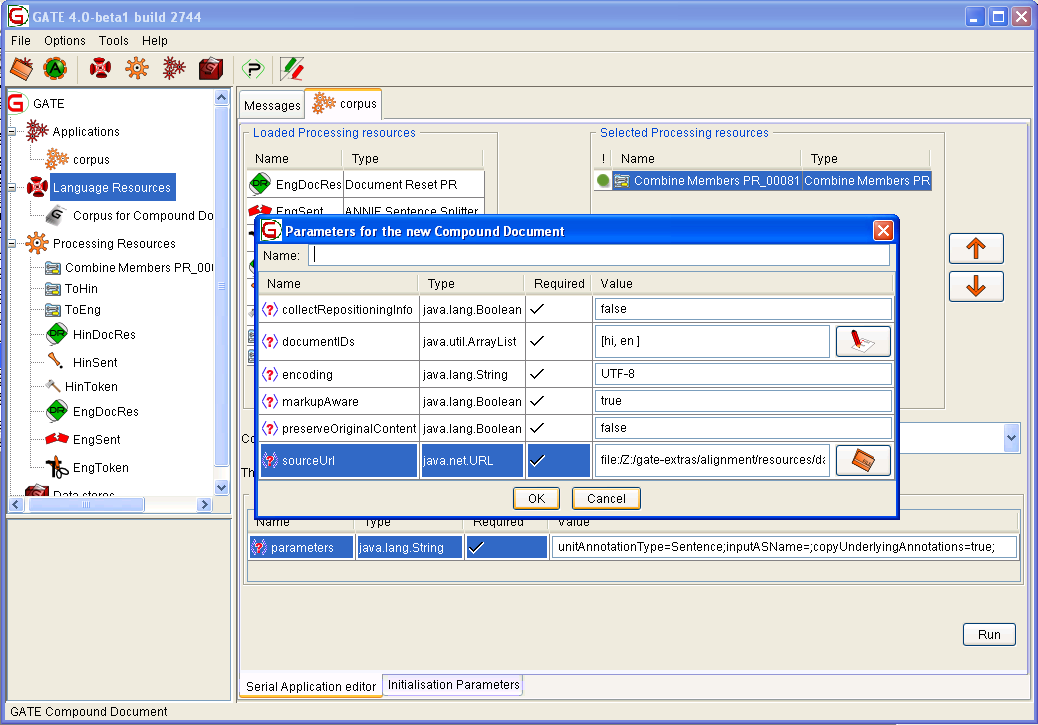

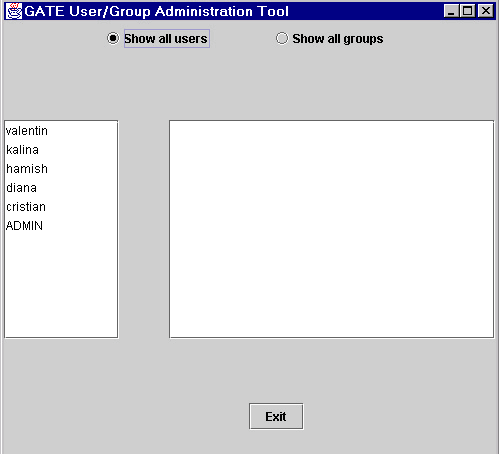

Addition of IR facilities in GATE (see Section 9.19).