Chapter 19

More (CREOLE) Plugins

For the previous reader was none other than myself. I had already read this book long ago.

The old sickness has me in its grip again: amnesia in litteris, the total loss of literary memory. I am overcome by a wave of resignation at the vanity of all striving for knowledge, all striving of any kind. Why read at all? Why read this book a second time, since I know that very soon not even a shadow of a recollection will remain of it? Why do anything at all, when all things fall apart? Why live, when one must die? And I clap the lovely book shut, stand up, and slink back, vanquished, demolished, to place it again among the mass of anonymous and forgotten volumes lined up on the shelf.

…

But perhaps - I think, to console myself - perhaps reading (like life) is not a matter of being shunted on to some track or abruptly off it. Maybe reading is an act by which consciousness is changed in such an imperceptible manner that the reader is not even aware of it. The reader suffering from amnesia in litteris is most definitely changed by his reading, but without noticing it, because as he reads, those critical faculties of his brain that could tell him that change is occurring are changing as well. And for one who is himself a writer, the sickness may conceivably be a blessing, indeed a necessary precondition, since it protects him against that crippling awe which every great work of literature creates, and because it allows him to sustain a wholly uncomplicated relationship to plagiarism, without which nothing original can be created.

Three Stories and a Reflection, Patrick Suskind, 1995 (pp. 82, 86).

This chapter describes additional CREOLE resources which do not form part of ANNIE, and have not been covered in previous chapters.

19.1 Language Plugins

There are plugins available for processing the following languages: French, German, Spanish, Italian, Chinese, Arabic, Romanian, Hindi and Cebuano. Some of the applications are quite basic and just contain some useful processing resources to get you started when developing a full application. Others (Cebuano and Hindi) are more like toy systems built as part of an exercise in language portability.

Note that if you wish to use individual language processing resources without loading the whole application, you will need to load the relevant plugin for that language in most cases. The plugins all follow the same kind of format. Load the plugin using the plugin manager in GATE Developer, and the relevant resources will be available in the Processing Resources set.

Some plugins just contain a list of resources which can be added ad hoc to other applications. For example, the Italian plugin simply contains a lexicon which can be used to replace the English lexicon in the default English POS tagger: this will provide a reasonable basic POS tagger for Italian.

In most cases you will also find a directory in the relevant plugin directory called data which contains some sample texts (in some cases, these are annotated with NEs).

19.1.1 French Plugin

The French plugin contains two applications for NE recognition: one which includes the TreeTagger for POS tagging in French (french+tagger.gapp), and one which does not (french.gapp). Simply load the application required from the plugins/Lang_French directory. You do not need to load the plugin itself from the GATE Developer’s Plugin Management Console. Note that the TreeTagger must first be installed and set up correctly (see Section 17.3 for details). Check that the runtime parameters are set correctly for your TreeTagger in your application. The applications both contain resources for tokenisation, sentence splitting, gazetteer lookup, NE recognition (via JAPE grammars) and orthographic coreference. Note that they are not intended to produce high quality results; they are simply a starting point for a developer working on French. Some sample texts are contained in the plugins/Lang_French/data directory.

19.1.2 German Plugin

The German plugin contains two applications for NE recognition: one which includes the TreeTagger for POS tagging in German (german+tagger.gapp), and one which does not (german.gapp). Simply load the application required from the plugins/Lang_German/resources directory. You do not need to load the plugin itself from the GATE Developer’s Plugin Management Console. Note that the TreeTagger must first be installed and set up correctly (see Section 17.3 for details). Check that the runtime parameters are set correctly for your TreeTagger in your application. The applications both contain resources for tokenisation, sentence splitting, gazetteer lookup, compound analysis, NE recognition (via JAPE grammars) and orthographic coreference. Some sample texts are contained in the plugins/Lang_German/data directory. We are grateful to Fabio Ciravegna and the Dot.KOM project for use of some of the components for the German plugin.

19.1.3 Romanian Plugin

The Romanian plugin contains an application for Romanian NE recognition (romanian.gapp). Simply load the application from the plugins/Lang_Romanian/resources directory. You do not need to load the plugin itself from the GATE Developer’s Plugin Management Console. The application contains resources for tokenisation, gazetteer lookup, NE recognition (via JAPE grammars) and orthographic coreference. Some sample texts are contained in the plugins/romanian/corpus directory.

19.1.4 Arabic Plugin

The Arabic plugin contains a simple application for Arabic NE recognition (arabic.gapp). Simply load the application from the plugins/Lang_Arabic/resources directory. You do not need to load the plugin itself from the GATE Developer’s Plugin Management Console. The application contains resources for tokenisation, gazetteer lookup, NE recognition (via JAPE grammars) and orthographic coreference. Note that there are two types of gazetteer used in this application: one which was derived automatically from training data (Arabic inferred gazetteer), and one which was created manually. Note that there are some other applications included which perform quite specific tasks (but can generally be ignored). For example, arabic-for-bbn.gapp and arabic-for-muse.gapp make use of a very specific set of training data and convert the result to a special format. There is also an application to collect new gazetteer lists from training data (arabic_lists_collector.gapp). For details of the gazetteer list collector please see Section 13.8.

19.1.5 Chinese Plugin

The Chinese plugin contains two components: a simple application for Chinese NE recognition (chinese.gapp) and a component called “Chinese Segmenter”.

In order to use the former, simply load the application from the plugins/Lang_Chinese/resources directory. You do not need to load the plugin itself from the GATE Developer’s Plugin Management Console. The application contains resources for tokenisation, gazetteer lookup, NE recognition (via JAPE grammars) and orthographic coreference. The application makes use of some gazetteer lists (and a grammar to process them) derived automatically from training data, as well as regular hand-crafted gazetteer lists. There are also applications (listscollector.gapp, adj_collector.gapp and nounperson_collector.gapp) to create such lists, and various other applications to perform special tasks such as coreference evaluation (coreference_eval.gapp) and converting the output to a different format (ace-to-muse.gapp).

For details on the Chinese Segmenter please see Section 19.13.

19.1.6 Hindi Plugin

The Hindi plugin (‘Lang_Hindi’) contains a set of resources for basic Hindi NE recognition which mirror the ANNIE resources but are customised to the Hindi language. You need to have the ANNIE plugin loaded first in order to load any of these PRs. With the Hindi plugin, you can create an application similar to ANNIE but replacing the ANNIE PRs with the default PRs from the plugin.

19.2 Flexible Exporter

The Flexible Exporter enables the user to save a document (or corpus) in its original format with added annotations. The user can select the name of the annotation set from which these annotations are to be found, which annotations from this set are to be included, whether features are to be included, and various renaming options such as renaming the annotations and the file.

At load time, the following parameters can be set for the flexible exporter:

- includeFeatures - if set to true, features are included with the annotations exported; if false (the default status), they are not.

- useSuffixForDumpFiles - if set to true (the default status), the output files have the suffix defined in suffixForDumpFiles; if false, no suffix is defined, and the output file simply overwrites the existing file (but see the outputDirectoryUrl runtime parameter for an alternative).

- suffixForDumpFiles - this defines the suffix if useSuffixForDumpFiles is set to true. By default the suffix is .gate.

- useStandOffXML - if true then the format will be the GATE XML format that separates nodes and annotations inside the file which allows overlapping annotations to be saved.

The following runtime parameters can also be set (after the file has been selected for the application):

- annotationSetName - this enables the user to specify the name of the annotation set which contains the annotations to be exported. If no annotation set is defined, it will use the Default annotation set.

- annotationTypes - this contains a list of the annotations to be exported. By default it is set to Person, Location and Date.

- dumpTypes - this contains a list of names for the exported annotations. If the annotation name is to remain the same, this list should be identical to the list in annotationTypes. The list of annotation names must be in the same order as the corresponding annotation types in annotationTypes.

- outputDirectoryUrl - this enables the user to specify the export directory, where each file is exported with its original name and an extension (provided as a parameter) appended to the filename. Note that you can also save a whole corpus in one go. If no directory is provided, the temporary directory is used.
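The positional pairing between annotationTypes and dumpTypes can be sketched in plain Java as follows. This is only an illustration of the ordering rule described above, not the exporter's own code: the class name DumpTypeMapping and the method pair are hypothetical, and the sketch assumes both lists have the same length.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Illustrative only: pairs annotation types with exported names by list position. */
final class DumpTypeMapping {

    /** Builds a map from annotation type to exported name (assumes equal-length lists). */
    static Map<String, String> pair(List<String> annotationTypes, List<String> dumpTypes) {
        if (annotationTypes.size() != dumpTypes.size()) {
            throw new IllegalArgumentException(
                    "annotationTypes and dumpTypes must have the same length");
        }
        Map<String, String> names = new LinkedHashMap<>();
        for (int i = 0; i < annotationTypes.size(); i++) {
            // The i-th dump type is the exported name of the i-th annotation type.
            names.put(annotationTypes.get(i), dumpTypes.get(i));
        }
        return names;
    }

    public static void main(String[] args) {
        Map<String, String> names = pair(
                Arrays.asList("Person", "Location", "Date"),
                Arrays.asList("PER", "LOC", "Date"));
        System.out.println(names); // prints {Person=PER, Location=LOC, Date=Date}
    }
}
```

Here Person and Location are renamed on export, while Date keeps its name because the same string appears at the same position in both lists.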

19.3 Annotation Set Transfer

The Annotation Set Transfer allows copying or moving annotations to a new annotation set if they lie between the beginning and the end of an annotation of a particular type (the covering annotation). For example, this can be used when a user only wants to run a processing resource over a specific part of a document, such as the Body of an HTML document. The user specifies the name of the annotation set and the annotation which covers the part of the document they wish to transfer, and the name of the new annotation set. All the other annotations corresponding to the matched text will be transferred to the new annotation set. For example, we might wish to perform named entity recognition on the body of an HTML text, but not on the headers. After tokenising and performing gazetteer lookup on the whole text, we would use the Annotation Set Transfer to transfer those annotations (created by the tokeniser and gazetteer) into a new annotation set, and then run the remaining NE resources, such as the semantic tagger and coreference modules, on them.

The Annotation Set Transfer has no loadtime parameters. It has the following runtime parameters:

- inputASName - this defines the annotation set from which annotations will be transferred (copied or moved). If nothing is specified, the Default annotation set will be used.

- outputASName - this defines the annotation set to which the annotations will be transferred. The default value for this parameter is ‘Filtered’. If it is left blank the Default annotation set will be used.

- tagASName - this defines the annotation set which contains the annotation covering the relevant part of the document to be transferred. The default value for this parameter is ‘Original markups’. If it is left blank the Default annotation set will be used.

- textTagName - this defines the type of the annotation covering the annotations to be transferred. The default value for this parameter is ‘BODY’. If this is left blank, then all annotations from the inputASName annotation set will be transferred. If more than one covering annotation is found, the annotations covered by each of them will be transferred. If no covering annotation is found, the processing depends on the transferAllUnlessFound parameter (see below).

- copyAnnotations - this specifies whether the annotations should be moved or copied. The default value false will move annotations, removing them from the inputASName annotation set. If set to true the annotations will be copied.

- transferAllUnlessFound - this specifies what should happen if no covering annotation is found. The default value is true. In this case, all annotations will be copied or moved (depending on the setting of parameter copyAnnotations) if no covering annotation is found. If set to false, no annotation will be copied or moved.
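The way these parameters interact can be sketched in plain Java. This is a simplified model under stated assumptions, not the PR's implementation: the Ann class stands in for a GATE annotation (type plus offsets), the lists stand in for annotation sets, and the names TransferSketch and transfer are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Iterator;
import java.util.List;

/** Simplified model of moving or copying annotations that lie inside a covering annotation. */
final class TransferSketch {

    /** Minimal stand-in for an annotation: a type plus start and end offsets. */
    static final class Ann {
        final String type;
        final int start;
        final int end;

        Ann(String type, int start, int end) {
            this.type = type;
            this.start = start;
            this.end = end;
        }

        @Override
        public String toString() {
            return type + "[" + start + "," + end + "]";
        }
    }

    /**
     * Returns the annotations placed in the output set. When copyAnnotations is false,
     * the transferred annotations are also removed from the input list (a move).
     */
    static List<Ann> transfer(List<Ann> input, List<Ann> covering,
                              boolean copyAnnotations, boolean transferAllUnlessFound) {
        List<Ann> output = new ArrayList<>();
        if (covering.isEmpty() && !transferAllUnlessFound) {
            return output; // no covering annotation found and nothing should be transferred
        }
        for (Iterator<Ann> it = input.iterator(); it.hasNext();) {
            Ann a = it.next();
            boolean covered = covering.isEmpty(); // nothing found: transfer everything
            for (Ann c : covering) {
                if (a.start >= c.start && a.end <= c.end) {
                    covered = true;
                    break;
                }
            }
            if (covered) {
                output.add(a);
                if (!copyAnnotations) {
                    it.remove();
                }
            }
        }
        return output;
    }

    public static void main(String[] args) {
        List<Ann> input = new ArrayList<>(Arrays.asList(
                new Ann("Token", 0, 5), new Ann("Token", 10, 15), new Ann("Token", 16, 20)));
        List<Ann> body = Arrays.asList(new Ann("BODY", 8, 30));
        List<Ann> filtered = transfer(input, body, false, true);
        System.out.println("Filtered: " + filtered); // Filtered: [Token[10,15], Token[16,20]]
        System.out.println("Default:  " + input);    // Default:  [Token[0,5]]
    }
}
```

With copyAnnotations set to true the input list would keep all three Token annotations, and the output would still contain only the two that lie inside BODY.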

For example, suppose we wish to perform named entity recognition on only the text covered by the BODY annotation from the Original markups annotation set in an HTML document. We have to run the gazetteer and tokeniser on the entire document: since these resources do not depend on any other annotations, we cannot specify an input annotation set for them to use. We therefore transfer these annotations to a new annotation set (Filtered) and then perform the NE recognition over these annotations, by specifying this annotation set as the input annotation set for all the following resources. In this example, we would set the following parameters (assuming that the annotations from the tokeniser and gazetteer are initially placed in the Default annotation set).

- inputASName: Default

- outputASName: Filtered

- tagASName: Original markups

- textTagName: BODY

- copyAnnotations: true or false (depending on whether we want to keep the Token and Lookup annotations in the Default annotation set)

- transferAllUnlessFound: true

The AST PR makes a shallow copy of the feature map for each transferred annotation, i.e. it creates a new feature map containing the same keys and values as the original. It does not clone the feature values themselves, so if your annotations have a feature whose value is a collection and you need to make a deep copy of the collection value then you will not be able to use the AST PR to do this. Similarly if you are copying annotations and do in fact want to share the same feature map between the source and target annotations then the AST PR is not appropriate. In these sorts of cases a JAPE grammar or Groovy script would be a better choice.
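The difference between a shallow and a deep copy can be seen with ordinary Java maps. The sketch below uses java.util.HashMap in place of a GATE feature map, which is an assumption made only to keep the example self-contained; the class name ShallowCopySketch is hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Shows what is and is not shared after a shallow copy of a feature-style map. */
final class ShallowCopySketch {

    /** New map, same keys, same value objects (values are not cloned). */
    static Map<String, Object> shallowCopy(Map<String, Object> features) {
        return new HashMap<>(features);
    }

    @SuppressWarnings("unchecked")
    public static void main(String[] args) {
        Map<String, Object> original = new HashMap<>();
        original.put("kind", "word");
        original.put("matches", new ArrayList<>(Arrays.asList("a")));

        Map<String, Object> copy = shallowCopy(original);

        // Replacing a value in the copy does not touch the original map.
        copy.put("kind", "number");

        // Mutating a collection value is visible through both maps,
        // because both maps refer to the same list object.
        ((List<String>) copy.get("matches")).add("b");

        System.out.println(original.get("kind"));    // prints word
        System.out.println(original.get("matches")); // prints [a, b]
    }
}
```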

19.4 Information Retrieval in GATE

GATE comes with a full-featured Information Retrieval (IR) subsystem that allows queries to be performed against GATE corpora. This combination of IE and IR means that documents can be retrieved from the corpora not only based on their textual content but also according to their features or annotations. For example, a search over the Person annotations for ‘Bush’ will return documents with higher relevance, compared to a search in the content for the string ‘bush’. The current implementation is based on the most popular open source full-text search engine - Lucene (available at http://jakarta.apache.org/lucene/) but other implementations may be added in the future.

An Information Retrieval system is most often considered a system that accepts as input a set of documents (corpus) and a query (combination of search terms) and returns as output only those documents from the corpus which are considered relevant according to the query. Usually, in addition to the documents, a proper relevance measure (score) is returned for each document. There exist many relevance metrics, but usually documents which are considered more relevant, according to the query, are scored higher.

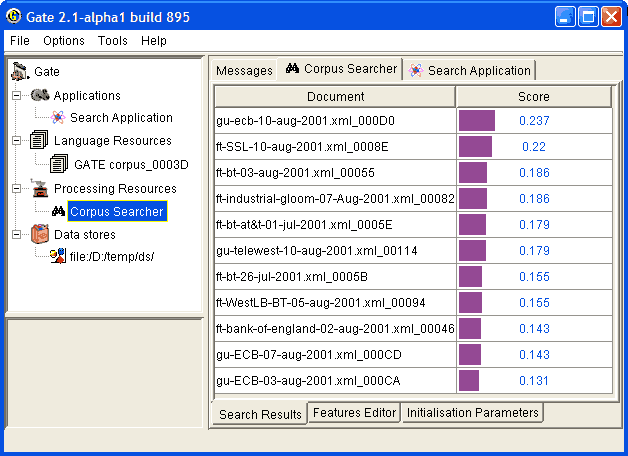

Figure 19.1 shows the results from running a query against an indexed corpus in GATE.

|      | term1 | term2 | ... | ... | termk |
| ---- | ----- | ----- | --- | --- | ----- |
| doc1 | w1,1 | w1,2 | ... | ... | w1,k |
| doc2 | w2,1 | w2,2 | ... | ... | w2,k |
| ... | ... | ... | ... | ... | ... |
| ... | ... | ... | ... | ... | ... |
| docn | wn,1 | wn,2 | ... | ... | wn,k |

Information Retrieval systems usually perform some preprocessing on the input corpus in order to create the document-term matrix for the corpus. A document-term matrix is usually presented as in Table 19.1, where doci is a document from the corpus, termj is a word that is considered important and representative for the document, and wi,j is the weight assigned to the term in the document. There are many ways to define the term weight function, but most often it depends on the term frequency in the document and in the whole corpus (i.e. the local and the global frequency). Note that the machine learning plugin described in Chapter 15 can produce such a document-term matrix (for a detailed description of the matrix produced, see Section 15.2.4).
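As a concrete illustration of a weight that combines local and global frequency, the following sketch fills a small document-term matrix with tf-idf weights, w(i,j) = tf(i,j) * ln(N / df(j)). This is one common textbook weighting chosen for the example; it is an assumption and not necessarily the function used by Lucene or by GATE. The class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.TreeSet;

/** Builds a toy document-term matrix with tf-idf weights. */
final class DocumentTermMatrix {

    /** Sorted list of all distinct terms in the corpus (the matrix columns). */
    static List<String> vocabulary(List<List<String>> docs) {
        TreeSet<String> terms = new TreeSet<>();
        for (List<String> doc : docs) {
            terms.addAll(doc);
        }
        return new ArrayList<>(terms);
    }

    /** weights[i][j] = (count of term j in doc i) * ln(N / number of docs containing term j). */
    static double[][] weights(List<List<String>> docs, List<String> terms) {
        int n = docs.size();
        double[][] w = new double[n][terms.size()];
        for (int j = 0; j < terms.size(); j++) {
            String term = terms.get(j);
            int df = 0; // global frequency: how many documents contain the term
            for (List<String> doc : docs) {
                if (doc.contains(term)) {
                    df++;
                }
            }
            double idf = df == 0 ? 0.0 : Math.log((double) n / df);
            for (int i = 0; i < n; i++) {
                int tf = Collections.frequency(docs.get(i), term); // local frequency
                w[i][j] = tf * idf;
            }
        }
        return w;
    }

    public static void main(String[] args) {
        List<List<String>> docs = Arrays.asList(
                Arrays.asList("play", "ball"),
                Arrays.asList("play", "chess", "chess"));
        List<String> terms = vocabulary(docs);
        System.out.println(terms); // prints [ball, chess, play]
        for (double[] row : weights(docs, terms)) {
            System.out.println(Arrays.toString(row));
        }
    }
}
```

In this toy corpus the term play occurs in every document, so its weight is zero everywhere, while chess, which occurs twice in a single document, receives the largest weight.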

Note that not all of the words appearing in the document are considered terms. There are many words (called ‘stop-words’) which are ignored, since they are observed too often and are not representative enough. Such words are articles, conjunctions, etc. During the preprocessing phase which identifies such words, usually a form of stemming is performed in order to minimize the number of terms and to improve the retrieval recall. Various forms of the same word (e.g. ‘play’, ‘playing’ and ‘played’) are considered identical and multiple occurrences of the same term (probably ‘play’) will be observed.
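A minimal sketch of this preprocessing step is shown below. The stop-word list and the suffix-stripping rule are deliberately naive assumptions made for illustration; real systems use a proper stemming algorithm and a much larger stop-word list. The class name TermSketch is hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Toy term extraction: lower-casing, stop-word removal and naive suffix stripping. */
final class TermSketch {

    // A tiny, illustrative stop-word list.
    private static final Set<String> STOP_WORDS =
            new HashSet<>(Arrays.asList("a", "an", "and", "are", "of", "or", "the"));

    /** Strips one of a few common English suffixes; not a real stemming algorithm. */
    static String stem(String word) {
        for (String suffix : new String[] {"ing", "ed", "s"}) {
            if (word.length() > suffix.length() + 2 && word.endsWith(suffix)) {
                return word.substring(0, word.length() - suffix.length());
            }
        }
        return word;
    }

    /** Splits text into lower-case words, drops stop words and stems the rest. */
    static List<String> terms(String text) {
        List<String> result = new ArrayList<>();
        for (String word : text.toLowerCase().split("[^a-z]+")) {
            if (!word.isEmpty() && !STOP_WORDS.contains(word)) {
                result.add(stem(word));
            }
        }
        return result;
    }

    public static void main(String[] args) {
        // prints [children, play, play]
        System.out.println(terms("The children played and are playing"));
    }
}
```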

It is recommended that the user reads the relevant Information Retrieval literature for a detailed explanation of stop words, stemming and term weighting.

IR systems, in a way similar to IE systems, are evaluated with the help of the precision and recall measures (see Section 10.1 for more details).
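For a single query, the two measures can be computed from the set of retrieved documents and the set of relevant documents, as in the sketch below. The class name RetrievalScores and the convention of returning 0 for an empty set are assumptions made for this illustration.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;

/** Precision and recall for one query, computed over sets of document identifiers. */
final class RetrievalScores {

    private static int overlap(Set<String> retrieved, Set<String> relevant) {
        Set<String> both = new HashSet<>(retrieved);
        both.retainAll(relevant);
        return both.size();
    }

    /** Fraction of the retrieved documents that are relevant. */
    static double precision(Set<String> retrieved, Set<String> relevant) {
        return retrieved.isEmpty() ? 0.0 : (double) overlap(retrieved, relevant) / retrieved.size();
    }

    /** Fraction of the relevant documents that were retrieved. */
    static double recall(Set<String> retrieved, Set<String> relevant) {
        return relevant.isEmpty() ? 0.0 : (double) overlap(retrieved, relevant) / relevant.size();
    }

    public static void main(String[] args) {
        Set<String> retrieved = new HashSet<>(Arrays.asList("d1", "d2", "d3", "d4"));
        Set<String> relevant = new HashSet<>(Arrays.asList("d2", "d4", "d5"));
        System.out.println("precision = " + precision(retrieved, relevant)); // 2 of 4 retrieved
        System.out.println("recall    = " + recall(retrieved, relevant));    // 2 of 3 relevant
    }
}
```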

19.4.1 Using the IR Functionality in GATE

In order to run queries against a corpus, the latter should be ‘indexed’. The indexing process first processes the documents in order to identify the terms and their weights (stemming is performed too) and then creates the proper structures on the local file system. These file structures contain indexes that will be used by Lucene (the underlying IR engine) for the retrieval.

Once the corpus is indexed, queries may be run against it. Subsequently the index may be removed and then the structures on the local file system are removed too. Once the index is removed, queries cannot be run against the corpus.

Indexing the Corpus

In order to index a corpus, the latter should be stored in a serial datastore. In other words, the IR functionality is unavailable for corpora that are transient or stored in RDBMS datastores (though support for the latter may be added in the future).

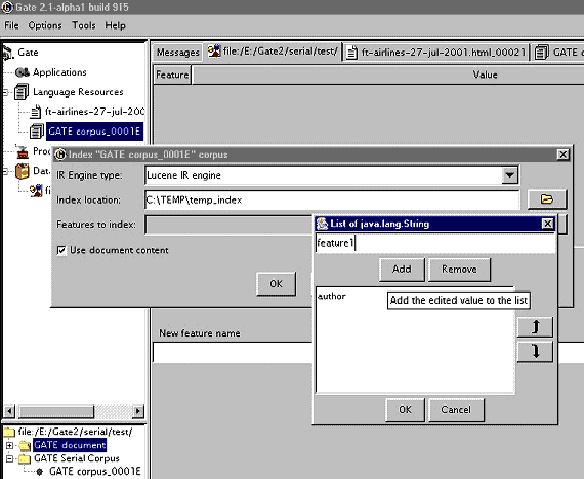

To index the corpus, follow these steps:

- Select the corpus from the resource tree (top-left pane) and from the context menu (right button click) choose ‘Index Corpus’. A dialogue appears that allows you to specify the index properties.

- In the index properties dialogue, specify the underlying IR system to be used (only Lucene is supported at present), the directory that will contain the index structures, and the set of properties that will be indexed such as document features, content, etc (the same properties will be indexed for each document in the corpus).

- Once the corpus is indexed, you may start running queries against it. Note that the directory specified for the index data should exist and be empty. Otherwise an error will occur during the index creation.

Querying the Corpus

To query the corpus, follow these steps:

- Create a SearchPR processing resource. All the parameters of SearchPR are runtime so they are set later.

- Create a pipeline application containing the SearchPR.

- Set the following SearchPR parameters:

  - The corpus that will be queried.

  - The query that will be executed.

  - The maximum number of documents returned.

A query looks like the following:

{+/-}field1:term1 {+/-}field2:term2 ... {+/-}fieldN:termN

where field is the name of an index field, such as the one specified at index creation (the document content field is body) and term is a term that should appear in the field.

For example, the query:

+body:government +author:CNN

will inspect the document content for the term ‘government’ (together with variations such as ‘governments’ etc.) and the index field named ‘author’ for the term ‘CNN’. The ‘author’ field is specified at index creation time, and is either a document feature or another document property.

- After the SearchPR is initialized, running the application executes the specified query over the specified corpus.

- Finally, the results are displayed (see Figure 19.1) after a double-click on the SearchPR processing resource.
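To make the query syntax used in the steps above concrete, the following sketch splits a query string into its clauses. It only illustrates the {+/-}field:term shape; in GATE the actual parsing is delegated to the underlying IR engine, and the class QuerySketch and its Clause type are hypothetical names introduced for this example.

```java
import java.util.ArrayList;
import java.util.List;

/** Splits a query of the form "{+/-}field:term ..." into clauses. */
final class QuerySketch {

    /** One clause: an optional '+' or '-' marker, an index field and a term. */
    static final class Clause {
        final char marker; // '+', '-' or ' ' when no marker is given
        final String field;
        final String term;

        Clause(char marker, String field, String term) {
            this.marker = marker;
            this.field = field;
            this.term = term;
        }

        @Override
        public String toString() {
            return "(" + marker + ") " + field + " = " + term;
        }
    }

    static List<Clause> parse(String query) {
        List<Clause> clauses = new ArrayList<>();
        for (String part : query.trim().split("\\s+")) {
            if (part.isEmpty()) {
                continue;
            }
            char marker = ' ';
            if (part.charAt(0) == '+' || part.charAt(0) == '-') {
                marker = part.charAt(0);
                part = part.substring(1);
            }
            int colon = part.indexOf(':');
            if (colon <= 0 || colon == part.length() - 1) {
                throw new IllegalArgumentException("expected field:term but got '" + part + "'");
            }
            clauses.add(new Clause(marker, part.substring(0, colon), part.substring(colon + 1)));
        }
        return clauses;
    }

    public static void main(String[] args) {
        for (Clause c : parse("+body:government +author:CNN")) {
            System.out.println(c);
        }
    }
}
```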

Removing the Index

An index for a corpus may be removed at any time from the ‘Remove Index’ option of the context menu for the indexed corpus (right button click).

19.4.2 Using the IR API

The IR API within GATE Embedded makes it possible for corpora to be indexed, queried and results returned from any Java application, without using GATE Developer. The following sample indexes a corpus, runs a query against it and then removes the index.

    import gate.*;
    import gate.creole.ir.*;
    import gate.creole.ir.lucene.*;
    import gate.persist.SerialDataStore;
    import java.net.URL;
    import java.util.Iterator;

    // open a serial datastore (the storage location is given as a file: URL)
    SerialDataStore sds = (SerialDataStore)
        Factory.openDataStore("gate.persist.SerialDataStore",
                              "file:///tmp/datastore1");
    sds.open();

    // set an AUTHOR feature for the test document
    Document doc0 = Factory.newDocument(new URL("file:///tmp/documents/doc0.html"));
    doc0.getFeatures().put("author", "John Smith");

    Corpus corp0 = Factory.newCorpus("TestCorpus");
    corp0.add(doc0);

    // store the corpus in the serial datastore
    Corpus serialCorpus = (Corpus) sds.adopt(corp0, null);
    sds.sync(serialCorpus);

    // index the corpus - the content and the AUTHOR feature
    IndexedCorpus indexedCorpus = (IndexedCorpus) serialCorpus;

    DefaultIndexDefinition did = new DefaultIndexDefinition();
    did.setIrEngineClassName(
        gate.creole.ir.lucene.LuceneIREngine.class.getName());
    did.setIndexLocation("/tmp/index1");
    did.addIndexField(new IndexField("content",
        new DocumentContentReader(), false));
    did.addIndexField(new IndexField("author", null, false));
    indexedCorpus.setIndexDefinition(did);

    indexedCorpus.getIndexManager().createIndex();
    // the corpus is now indexed

    // search the corpus
    Search search = new LuceneSearch();
    search.setCorpus(indexedCorpus);

    QueryResultList res = search.search("+content:government +author:John");

    // get the results
    Iterator it = res.getQueryResults();
    while (it.hasNext()) {
      QueryResult qr = (QueryResult) it.next();
      System.out.println("DOCUMENT_ID=" + qr.getDocumentID()
          + ", score=" + qr.getScore());
    }

19.5 Websphinx Web Crawler

The ‘Web_Crawler_Websphinx’ plugin enables GATE to build a corpus from a web crawl. It is based on Websphinx, a Java-based, customizable, multi-threaded web crawler.

Note: if you are using this plugin via an IDE, you may need to make sure that the websphinx.jar file is on the IDE’s classpath, or add it to the IDE’s lib directory.

The basic idea is to specify a source URL (or set of documents created from web URLs) and a depth and maximum number of documents to build the initial corpus upon which further processing could be done. The PR itself provides a number of other parameters to regulate the crawl.

This PR now uses the HTTP Content-Type headers to determine each web page’s encoding and MIME type before creating a GATE Document from it. It also adds to each document a Date feature (with a java.util.Date value) based on the HTTP Last-Modified header (if available) or the current timestamp and an originalMimeType feature taken from the Content-Type header.
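The kind of information carried by a Content-Type header can be illustrated with a small parser. This is a hedged sketch of the idea only, not the crawler's own code; the class name ContentTypeSketch is hypothetical, and the parsing is simplified (it handles only the media type and an optional charset parameter).

```java
import java.util.Arrays;
import java.util.Locale;

/** Extracts the MIME type and the optional charset from a Content-Type header value. */
final class ContentTypeSketch {

    /** Returns {mimeType, charset}; charset is null when the header does not declare one. */
    static String[] parse(String header) {
        String[] parts = header.split(";");
        String mimeType = parts[0].trim().toLowerCase(Locale.ROOT);
        String charset = null;
        for (int i = 1; i < parts.length; i++) {
            String param = parts[i].trim();
            if (param.toLowerCase(Locale.ROOT).startsWith("charset=")) {
                // Drop the parameter name and any surrounding quotes.
                charset = param.substring("charset=".length()).replace("\"", "").trim();
            }
        }
        return new String[] {mimeType, charset};
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(parse("text/html; charset=UTF-8"))); // [text/html, UTF-8]
        System.out.println(Arrays.toString(parse("application/xml")));          // [application/xml, null]
    }
}
```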

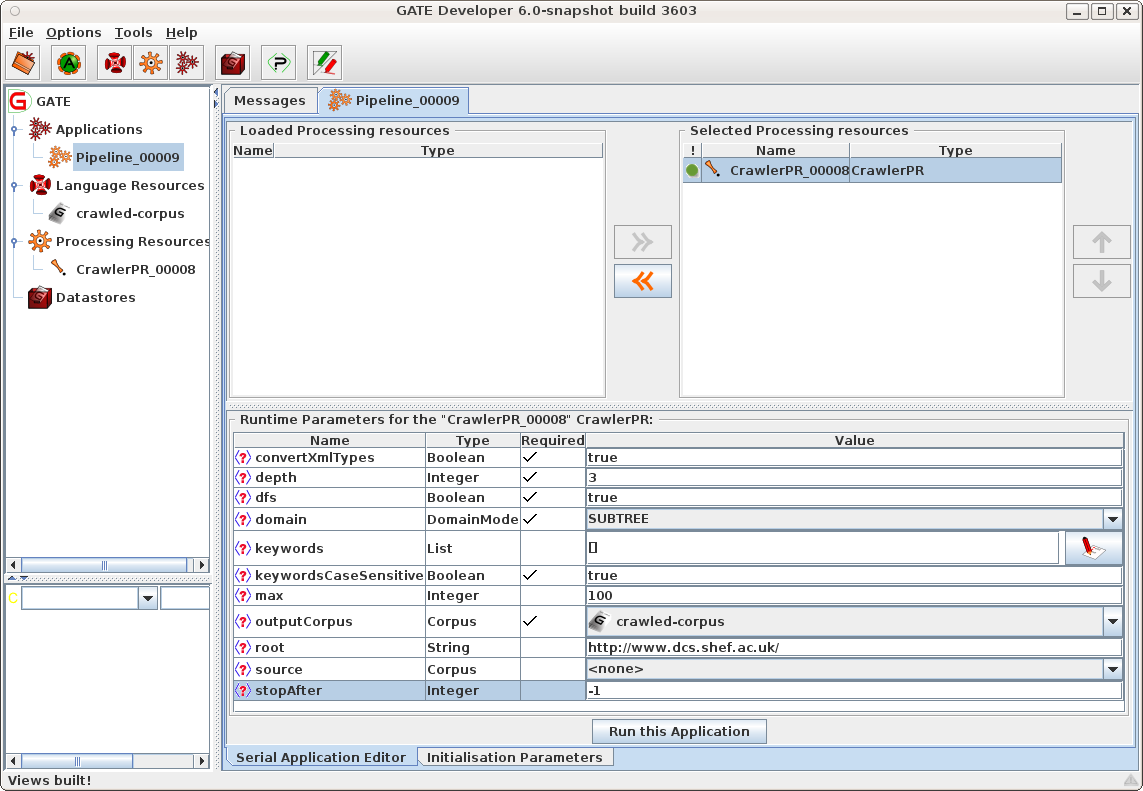

19.5.1 Using the Crawler PR

In order to use the processing resource you need to load the plugin using the plugin manager, create an instance of the crawl PR from the list of processing resources, and create a corpus in which to store crawled documents. In order to use the crawler, create a simple pipeline (not a corpus pipeline) and add the crawl PR to the pipeline.

Once the crawl PR is created there will be a number of parameters that can be set based on the PR required (see also Figure 19.3).

- depth - the depth (integer) to which the crawl should proceed.

- dfs - a boolean: if true, the crawler visits links with a depth-first strategy; if false, the crawler visits links with a breadth-first strategy.

- domain - an enum value, presented as a pull-down list in the GUI:

  - SUBTREE - the crawler visits only the descendants of the pages specified as the roots for the crawl.

  - WEB - the crawler can visit any pages on the web.

  - SERVER - the crawler can visit only pages that are present on the server where the root pages are located.

- max - the maximum number (integer) of pages to be kept: the crawler will stop when it has stored this number of documents in the output corpus. Use -1 to ignore this limit.

- stopAfter - the maximum number (integer) of pages to be fetched: the crawler will stop when it has visited this number of pages. Use -1 to ignore this limit. If max > stopAfter > 0 then the crawl will store at most stopAfter (not max) documents.

- root - a string containing one URL to start the crawl.

- source - a corpus that contains the documents whose gate.sourceURL features will be used to start the crawl. If you use both root and source parameters, both the root value and the URLs collected from the source documents will seed the crawl.

- outputCorpus - the corpus in which the fetched documents will be stored.

- keywords - a List<String> for matching against crawled documents. If this list is empty or null, all documents fetched will be kept. Otherwise, only documents that contain one of these strings will be stored in the output corpus. (Documents that are fetched but not kept are still scanned for further links.)

- keywordsCaseSensitive - this boolean determines whether keyword matching is case-sensitive or not.

- convertXmlTypes - GATE’s XmlDocumentFormat only accepts certain MIME types. If this parameter is true, the crawl PR converts other XML types (such as application/atom+xml.xml) to text/xml before trying to instantiate the GATE document (this allows GATE to handle RSS feeds, for example).
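The interaction of depth, dfs, max and stopAfter can be sketched over an in-memory link graph. This is a simplified model and not the Websphinx implementation: it is single-threaded, every fetched page is stored (keyword filtering is omitted), and it assumes that the root page is at depth 0 and that pages at the depth limit are fetched but not expanded. The class name CrawlSketch is hypothetical.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Simplified crawl over an in-memory link graph (no network access). */
final class CrawlSketch {

    static List<String> crawl(Map<String, List<String>> links, String root,
                              int depth, boolean dfs, int max, int stopAfter) {
        List<String> stored = new ArrayList<>();
        Set<String> seen = new HashSet<>();
        Deque<String> pages = new ArrayDeque<>();
        Deque<Integer> depths = new ArrayDeque<>(); // depth of each queued page, kept in step
        pages.add(root);
        depths.add(0);
        seen.add(root);
        int fetched = 0;
        while (!pages.isEmpty()) {
            // A limit of -1 means "no limit".
            if ((max >= 0 && stored.size() >= max) || (stopAfter >= 0 && fetched >= stopAfter)) {
                break;
            }
            // Depth-first takes the most recently queued page, breadth-first the oldest.
            String page = dfs ? pages.pollLast() : pages.pollFirst();
            int d = dfs ? depths.pollLast() : depths.pollFirst();
            fetched++;
            stored.add(page);
            if (d >= depth) {
                continue; // do not follow links beyond the depth limit
            }
            for (String next : links.getOrDefault(page, Collections.<String>emptyList())) {
                if (seen.add(next)) {
                    pages.addLast(next);
                    depths.addLast(d + 1);
                }
            }
        }
        return stored;
    }

    public static void main(String[] args) {
        Map<String, List<String>> links = new HashMap<>();
        links.put("root", Arrays.asList("a", "b"));
        links.put("a", Arrays.asList("a1"));
        links.put("b", Arrays.asList("b1"));
        System.out.println(crawl(links, "root", 2, false, -1, -1)); // [root, a, b, a1, b1]
        System.out.println(crawl(links, "root", 2, true, -1, -1));  // [root, b, b1, a, a1]
        System.out.println(crawl(links, "root", 2, false, 3, 2));   // [root, a]
    }
}
```

The last call shows the case max > stopAfter > 0: the crawl stops after two fetches, so only two documents are stored even though max would allow three.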

Once the parameters are set, the crawl can be run and the documents fetched (and matched to the keywords, if that list is in use) are added to the specified corpus. Documents that are fetched but not matched are discarded after scanning them for further links.
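The three domain settings can be pictured as a filter applied to each candidate link. The sketch below is an assumption-laden illustration, not the plugin's logic: it treats SUBTREE as "same host and a path under the directory that contains the root page", which may differ in detail from how Websphinx decides descent. The names DomainSketch and allowed are hypothetical.

```java
import java.net.URI;

/** Illustrative link filter for the SUBTREE, SERVER and WEB crawl domains. */
final class DomainSketch {

    enum Domain { SUBTREE, SERVER, WEB }

    static boolean allowed(Domain domain, URI root, URI candidate) {
        if (domain == Domain.WEB) {
            return true; // any page may be visited
        }
        boolean sameHost = root.getHost() != null
                && root.getHost().equalsIgnoreCase(candidate.getHost());
        if (domain == Domain.SERVER) {
            return sameHost;
        }
        // SUBTREE: same host, and the path lies under the root page's directory.
        String rootPath = root.getPath() == null ? "" : root.getPath();
        String base = rootPath.substring(0, rootPath.lastIndexOf('/') + 1);
        String path = candidate.getPath() == null ? "" : candidate.getPath();
        return sameHost && path.startsWith(base);
    }

    public static void main(String[] args) {
        URI root = URI.create("http://example.org/docs/index.html");
        URI inside = URI.create("http://example.org/docs/a/b.html");
        URI sibling = URI.create("http://example.org/other.html");
        System.out.println(allowed(Domain.SUBTREE, root, inside));  // true
        System.out.println(allowed(Domain.SUBTREE, root, sibling)); // false
        System.out.println(allowed(Domain.SERVER, root, sibling));  // true
    }
}
```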

Note that you must use a simple Pipeline, and not a Corpus Pipeline. If you wish to process the crawled documents, you must build a second Corpus Pipeline. From GATE version 5.1 onwards, you can combine the two pipelines as follows. Build a simple Pipeline containing your Web Crawler; let us call this pipeline Ps, and say that the output corpus on your crawler is set to C. Now build your processing corpus pipeline, which we will call Pc. Put the original pipeline Ps in it as its first PR, and set the corpus of Pc to the same corpus as before, C.

19.6 Google Plugin

This plugin is no longer operational because the functionality, provided by Google, on which it depends, is no longer available.

19.7 Yahoo Plugin

The Yahoo API is now integrated with GATE, and can be used as a PR-based plugin. This plugin, ‘Web_Search_Yahoo’, allows the user to query Yahoo and build a document corpus that contains the search results returned by Yahoo for the query. For more information about the Yahoo API please refer to http://developer.yahoo.com/search/. In order to use the Yahoo PR, you need to obtain an application ID.

The Yahoo PR can be used for a number of different application scenarios. For example, one use case is where a user wants to find the different named entities that can be associated with a particular individual. In this example, the user could build a collection of documents by querying Yahoo with the individual’s name and then running ANNIE over the collection. This would annotate the results and show the different Organization, Location and other entities that are associated with the query.

19.7.1 Using the YahooPR

In order to use the PR, you first need to load the plugin using the GATE Developer plugin manager. Once the PR is loaded, it can be initialized by creating an instance of a new PR. Here you need to specify the Yahoo Application ID. Please use the license key assigned to you by registering with Yahoo.

Once the Yahoo PR is initialized, it can be placed in a pipeline or a conditional pipeline application. This pipeline would contain the instance of the Yahoo PR just initialized as above. There are a number of parameters to be set at runtime:

- corpus: The corpus used by the plugin to add or append documents from the Web.

- corpusAppendMode: If set to true, will append documents to the corpus. If set to false, will remove preexisting documents from the corpus before adding the documents newly fetched by the PR.

- limit: A limit on the results returned by the search. Default set to 10.

- pagesToExclude: This is an optional parameter. It is a list with URLs not to be included in the search.

- query: The query sent to Yahoo. It is in the format accepted by Yahoo.

Once the required parameters are set we can run the pipeline. This will then download all the URLs in the results and create a document for each. These documents would be added to the corpus.

19.8 Google Translator PR

The Google Translator PR allows users to translate their documents into many other languages using the Google translation service. It is based on the library called google-api-translate-java, which is distributed under the LGPL licence and is available to download from http://code.google.com/p/google-api-translate-java/.

The PR is included in the plugin called Web_Translate_Google and depends on the Alignment plugin (Chapter 16).

Suppose a user wants to translate an English document into French using the Google Translator PR. The first thing the user needs to do is to create an instance of CompoundDocument with the English document as a member of it. The CompoundDocument in GATE provides a convenient way to group parallel documents that are translations of one another (see Chapter 16 for more information). The idea is to use text from one of the members of the provided compound document, translate it using the Google translation service and create another member with the translated text. In the process, the PR also aligns the chunks of parallel texts. Here, a chunk could be a sentence, paragraph, section or the entire document.

siteReferrer is the only init-time parameter required to instantiate the PR. It has to be a valid website address. The value of this parameter is required to inform Google about the users using their service. There are eight run-time parameters:

- document - an instance of the compound document with a member document containing source text.

- sourceDocumentId - id of the source member document that needs to be translated.

- targetDocumentId - id of the target member document. This document is created by the PR and contains the translated text.

- sourceLanguage - the language of the source document.

- targetLanguage - the language into which the source document should be translated.

- unitOfTranslation - annotation type used for identifying chunks of texts to be translated and aligned.

- inputASName - name of the annotation set which contains the units of translation.

- alignmentFeatureName - name of the alignment feature used for storing the alignment information. The alignment feature is a document feature stored on the compound document.

19.9 WordNet in GATE

GATE currently supports versions 1.6 and newer of WordNet, so in order to use WordNet in GATE, you must first install a compatible version of WordNet on your computer. WordNet is available at http://wordnet.princeton.edu/. The next step is to configure GATE to work with your local WordNet installation. Since GATE relies on the Java WordNet Library (JWNL) for WordNet access, this step consists of providing one special xml file that is used internally by JWNL. This file describes the location of your local copy of the WordNet index files. An example of this wn-config.xml file is shown below:

    <?xml version="1.0" encoding="UTF-8"?>
    <jwnl_properties language="en">
      <version publisher="Princeton" number="3.0" language="en"/>
      <dictionary class="net.didion.jwnl.dictionary.FileBackedDictionary">
        <param name="morphological_processor" value="net.didion.jwnl.dictionary.morph.DefaultMorphologicalProcessor">
          <param name="operations">
            <param value="net.didion.jwnl.dictionary.morph.LookupExceptionsOperation"/>
            <param value="net.didion.jwnl.dictionary.morph.DetachSuffixesOperation">
              <param name="noun" value="|s=|ses=s|xes=x|zes=z|ches=ch|shes=sh|men=man|ies=y|"/>
              <param name="verb" value="|s=|ies=y|es=e|es=|ed=e|ed=|ing=e|ing=|"/>
              <param name="adjective" value="|er=|est=|er=e|est=e|"/>
              <param name="operations">
                <param value="net.didion.jwnl.dictionary.morph.LookupIndexWordOperation"/>
                <param value="net.didion.jwnl.dictionary.morph.LookupExceptionsOperation"/>
              </param>
            </param>
            <param value="net.didion.jwnl.dictionary.morph.TokenizerOperation">
              <param name="delimiters">
                <param value=" "/>
                <param value="-"/>
              </param>
              <param name="token_operations">
                <param value="net.didion.jwnl.dictionary.morph.LookupIndexWordOperation"/>
                <param value="net.didion.jwnl.dictionary.morph.LookupExceptionsOperation"/>
                <param value="net.didion.jwnl.dictionary.morph.DetachSuffixesOperation">
                  <param name="noun" value="|s=|ses=s|xes=x|zes=z|ches=ch|shes=sh|men=man|ies=y|"/>
                  <param name="verb" value="|s=|ies=y|es=e|es=|ed=e|ed=|ing=e|ing=|"/>
                  <param name="adjective" value="|er=|est=|er=e|est=e|"/>
                  <param name="operations">
                    <param value="net.didion.jwnl.dictionary.morph.LookupIndexWordOperation"/>
                    <param value="net.didion.jwnl.dictionary.morph.LookupExceptionsOperation"/>
                  </param>
                </param>
              </param>
            </param>
          </param>
        </param>
        <param name="dictionary_element_factory" value="net.didion.jwnl.princeton.data.PrincetonWN17FileDictionaryElementFactory"/>
        <param name="file_manager" value="net.didion.jwnl.dictionary.file_manager.FileManagerImpl">
          <param name="file_type" value="net.didion.jwnl.princeton.file.PrincetonRandomAccessDictionaryFile"/>
          <param name="dictionary_path" value="/home/mark/WordNet-3.0/dict/"/>
        </param>
      </dictionary>
      <resource class="PrincetonResource"/>
    </jwnl_properties>

There are three things in this file which you need to configure based upon the version of WordNet you wish to use. First, change the number attribute of the version element to match the version of WordNet you are using. Then edit the value of the dictionary_path parameter to point to your local installation of WordNet. Finally, if you want to use version 1.6 of WordNet then you also need to alter the dictionary_element_factory to use net.didion.jwnl.princeton.data.PrincetonWN16FileDictionaryElementFactory. For full details of the format of the configuration file see the JWNL documentation at http://sourceforge.net/projects/jwordnet.
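Before loading the file into GATE, it can be useful to confirm that these three settings say what you expect. The following is a minimal sketch using only the standard Java XML parser; it is not part of GATE or JWNL, and the class and method names (WnConfigCheck, versionNumber, paramValue) are made up for this illustration.

```java
import java.io.ByteArrayInputStream;
import java.io.File;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

/** Hypothetical helper: prints the three settings that usually need editing. */
final class WnConfigCheck {

    /** Parses configuration XML held in a string (unchecked exception on failure). */
    static Document parse(String xml) {
        try (InputStream in = new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8))) {
            return DocumentBuilderFactory.newInstance().newDocumentBuilder().parse(in);
        } catch (Exception e) {
            throw new IllegalArgumentException("Not well-formed XML", e);
        }
    }

    /** Returns the number attribute of the version element, or null if absent. */
    static String versionNumber(Document config) {
        NodeList versions = config.getElementsByTagName("version");
        if (versions.getLength() == 0) {
            return null;
        }
        return ((Element) versions.item(0)).getAttribute("number");
    }

    /** Returns the value of the first param element with the given name, or null. */
    static String paramValue(Document config, String name) {
        NodeList params = config.getElementsByTagName("param");
        for (int i = 0; i < params.getLength(); i++) {
            Element param = (Element) params.item(i);
            if (name.equals(param.getAttribute("name"))) {
                return param.getAttribute("value");
            }
        }
        return null;
    }

    public static void main(String[] args) throws Exception {
        if (args.length != 1) {
            System.err.println("usage: java WnConfigCheck.java <path to wn-config.xml>");
            return;
        }
        byte[] bytes = Files.readAllBytes(new File(args[0]).toPath());
        Document config = parse(new String(bytes, StandardCharsets.UTF_8));
        String path = paramValue(config, "dictionary_path");
        System.out.println("WordNet version: " + versionNumber(config));
        System.out.println("Element factory: " + paramValue(config, "dictionary_element_factory"));
        // The dictionary path must point at the directory holding the WordNet index files.
        boolean found = path != null && new File(path).isDirectory();
        System.out.println("Dictionary path: " + path + (found ? " (found)" : " (not a directory)"));
    }
}
```

Run it with the path to your configuration file as the only argument; a "not a directory" message usually means dictionary_path still points at the example location.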

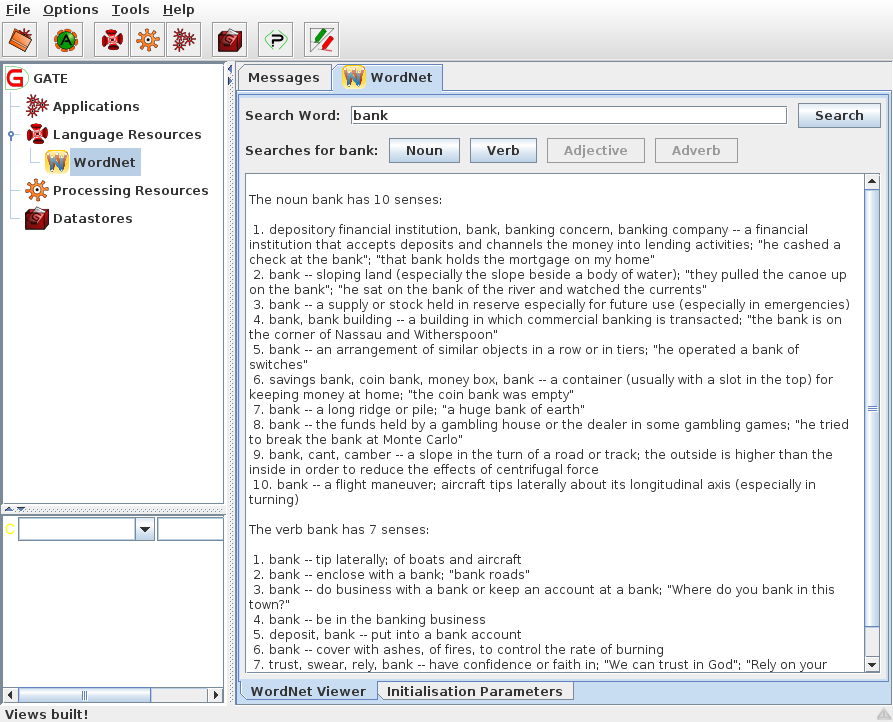

After configuring GATE to use WordNet, you can start using the built-in WordNet browser or API. In GATE Developer, load the WordNet plugin via the Plugin Management Console. Then load WordNet by selecting it from the set of available language resources. Set the value of the parameter to the path of the XML properties file which describes the WordNet location (wn-config.xml).

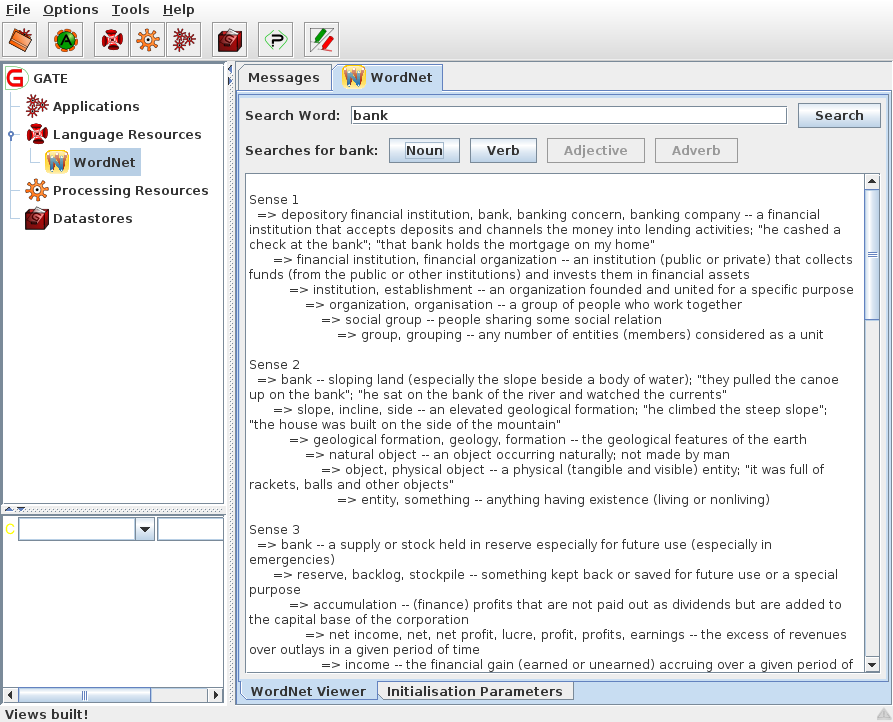

Once WordNet is loaded in GATE Developer, the well-known interface of WordNet will appear. You can search WordNet by typing a word in the box next to the label ‘SearchWord’ and then pressing ‘Search’. All the senses of the word will be displayed in the window below. Buttons for the possible parts of speech for this word will also be activated at this point. For instance, for the word ‘play’, the buttons ‘Noun’, ‘Verb’ and ‘Adjective’ are activated. Pressing one of these buttons will activate a menu of relations, such as hyponyms, hypernyms and meronyms for nouns, or verb groups and cause for verbs. Selecting an item from the menu will display the results in the window below.

To upgrade any existing GATE applications to use this improved WordNet plugin, simply replace your existing configuration file with the example above and configure it for WordNet 1.6. This will then give results identical to the previous version. Unfortunately, it was not possible to provide a transparent upgrade procedure.

More information about WordNet can be found at http://wordnet.princeton.edu/

More information about the JWNL library can be found at http://sourceforge.net/projects/jwordnet

An example of using the WordNet API in GATE is available on the GATE examples page at http://gate.ac.uk/gate-examples/doc/index.html.

19.9.1 The WordNet API

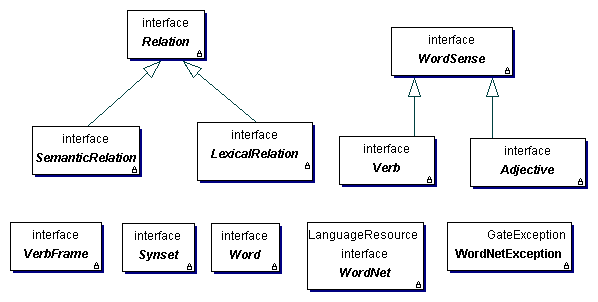

GATE Embedded offers a set of classes that can be used to access the WordNet Lexical Database. The implementation of the GATE API for WordNet is based on Java WordNet Library (JWNL). There are just a few basic classes, as shown in Figure 19.6. Details about the properties and methods of the interfaces/classes comprising the API can be obtained from the JavaDoc. Below is a brief overview of the interfaces:

- WordNet: the main WordNet class. Provides methods for getting the synsets of a lemma, for accessing the unique beginners, etc.

- Word: offers access to the word’s lemma and senses

- WordSense: gives access to the synset, the word, POS and lexical relations.

- Synset: gives access to the word senses (synonyms) in the synset, the semantic relations, POS etc.

- Verb: gives access to the verb frames (not working properly at present)

- Adjective: gives access to the adj. position (attributive, predicative, etc.).

- Relation: abstract relation, giving access to its type, symbol, inverse relation, the set of POS tags to which it is applicable, etc.

- LexicalRelation

- SemanticRelation

- VerbFrame

19.10 Kea - Automatic Keyphrase Detection

Kea is a tool for automatic detection of key phrases developed at the University of Waikato in New Zealand. The home page of the project can be found at http://www.nzdl.org/Kea/.

This user guide section only deals with the aspects relating to the integration of Kea in GATE. For the inner workings of Kea, please visit the Kea web site and/or contact its authors.

In order to use Kea in GATE Developer, the ‘Keyphrase_Extraction_Algorithm’ plugin needs to be loaded using the plugins management console. After doing that, two new resource types are available for creation: the ‘KEA Keyphrase Extractor’ (a processing resource) and the ‘KEA Corpus Importer’ (a visual resource associated with the PR).

19.10.1 Using the ‘KEA Keyphrase Extractor’ PR

Kea is based on machine learning and it needs to be trained before it can be used to extract keyphrases. In order to do this, a corpus is required where the documents are annotated with keyphrases. Corpora in the Kea format (where the text and keyphrases are in separate files with the same name but different extensions) can be imported into GATE using the ‘KEA Corpus Importer’ tool. The usage of this tool is presented in a subsection below.

Once an annotated corpus is obtained, the ‘KEA Keyphrase Extractor’ PR can be used to build a model:

- load a ‘KEA Keyphrase Extractor’

- create a new ‘Corpus Pipeline’ controller.

- set the corpus for the controller

- set the ‘trainingMode’ parameter for the PR to ‘true’

- run the application.

After these steps, the Kea PR contains a trained model. This can be used immediately by switching the ‘trainingMode’ parameter to ‘false’ and running the PR over the documents that need to be annotated with keyphrases. Another possibility is to save the model for later use, by right-clicking on the PR name in the right hand side tree and choosing the ‘Save model’ option.

When a previously built model is available, the training procedure does not need to be repeated; the existing model can be loaded into memory by selecting the ‘Load model’ option in the PR’s context menu.

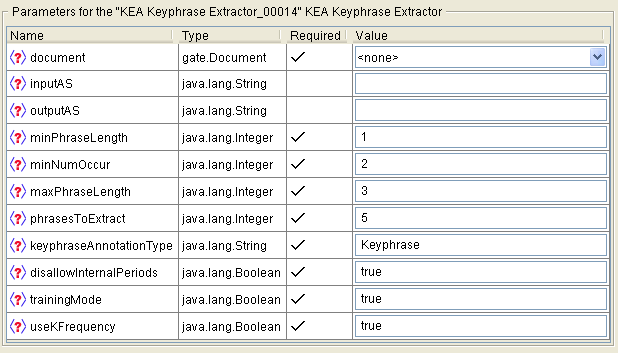

The Kea PR uses several parameters as seen in Figure 19.7:

- document: the document to be processed.

- inputAS: the input annotation set. This parameter is only relevant when the PR is running in training mode and it specifies the annotation set containing the keyphrase annotations.

- outputAS: the output annotation set. This parameter is only relevant when the PR is running in application mode (i.e. when the ‘trainingMode’ parameter is set to false) and it specifies the annotation set where the generated keyphrase annotations will be saved.

- minPhraseLength: the minimum length (in number of words) for a keyphrase.

- minNumOccur: the minimum number of occurrences of a phrase for it to be a keyphrase.

- maxPhraseLength: the maximum length of a keyphrase.

- phrasesToExtract: how many different keyphrases should be generated.

- keyphraseAnnotationType: the type of annotations used for keyphrases.

- dissallowInternalPeriods: whether internal periods should be disallowed.

- trainingMode: if ‘true’ the PR is running in training mode; otherwise it is running in application mode.

- useKFrequency: whether the K-frequency should be used.
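To make the effect of the length and frequency parameters more concrete, here is a small stand-alone sketch. It is not Kea's algorithm: Kea ranks candidates with its trained model, whereas this illustration simply counts word sequences and ranks them by frequency. The class name CandidatePhrases and its method are hypothetical; only the four parameter names are taken from the list above.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Illustration only: shows how the thresholds restrict the candidate phrases. */
final class CandidatePhrases {

    static List<String> candidates(String text, int minPhraseLength, int maxPhraseLength,
                                   int minNumOccur, int phrasesToExtract) {
        List<String> result = new ArrayList<>();
        if (text.trim().isEmpty()) {
            return result;
        }
        // Naive tokenisation on whitespace; punctuation is not handled in this sketch.
        String[] words = text.toLowerCase().trim().split("\\s+");

        // Count every word sequence whose length lies within the allowed range.
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (int start = 0; start < words.length; start++) {
            StringBuilder phrase = new StringBuilder();
            for (int len = 1; len <= maxPhraseLength && start + len <= words.length; len++) {
                if (len > 1) {
                    phrase.append(' ');
                }
                phrase.append(words[start + len - 1]);
                if (len >= minPhraseLength) {
                    counts.merge(phrase.toString(), 1, Integer::sum);
                }
            }
        }

        // Drop phrases that occur too rarely, then rank the rest by frequency.
        List<Map.Entry<String, Integer>> kept = new ArrayList<>();
        for (Map.Entry<String, Integer> entry : counts.entrySet()) {
            if (entry.getValue() >= minNumOccur) {
                kept.add(entry);
            }
        }
        kept.sort((a, b) -> b.getValue() - a.getValue()); // stable: ties keep first-seen order

        for (int i = 0; i < kept.size() && i < phrasesToExtract; i++) {
            result.add(kept.get(i).getKey());
        }
        return result;
    }

    public static void main(String[] args) {
        String text = "text mining tools help text mining users";
        // Only two-word phrases that occur at least twice survive.
        System.out.println(candidates(text, 2, 2, 2, 5)); // prints [text mining]
    }
}
```

Raising minNumOccur or narrowing the length range shrinks the candidate set before any ranking takes place, which is why these settings matter on short documents.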

19.10.2 Using Kea Corpora

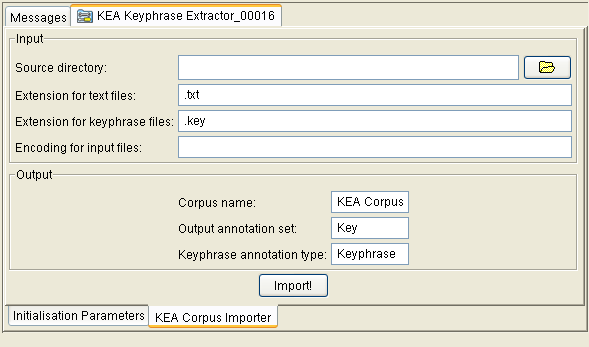

The authors of Kea provide on the project web page a few manually annotated corpora that can be used for training Kea. In order to do this from within GATE, these corpora need to be converted to the format used in GATE (i.e. GATE documents with annotations). This is possible using the ‘KEA Corpus Importer’ tool which is available as a visual resource associated with the Kea PR. The importer tool can be made visible by double-clicking on the Kea PR’s name in the resources tree and then selecting the ‘KEA Corpus Importer’ tab, see Figure 19.8.

The tool will read files from a given directory, converting the text ones into GATE documents and the ones containing keyphrases into annotations over the documents.

The user needs to specify a few values:

- Source Directory: the directory containing the text and key files. This can be typed in or selected by pressing the folder button next to the text field.

- Extension for text files: the extension used for text files (by default .txt).

- Extension for keyphrase files: the extension for the files listing keyphrases.

- Encoding for input files: the encoding to be used when reading the files.

- Corpus name: the name for the GATE corpus that will be created.

- Output annotation set: the name for the annotation set that will contain the keyphrases read from the input files.

- Keyphrase annotation type: the type for the generated annotations.
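The Kea corpus layout (a text file and a keyphrase file sharing a name but differing in extension) can be checked before importing. The sketch below pairs files by base name; it is an illustration rather than the importer's own code, the class name KeaFilePairs is hypothetical, and the ‘.key’ extension used in the example is an assumption about how the keyphrase files are named.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Illustration only: finds text files that have a matching keyphrase file. */
final class KeaFilePairs {

    /** Maps each text file name to its keyphrase file name; unpaired files are skipped. */
    static Map<String, String> pair(List<String> fileNames, String textExt, String keyExt) {
        Set<String> names = new HashSet<>(fileNames);
        Map<String, String> pairs = new LinkedHashMap<>();
        for (String name : fileNames) {
            if (!name.endsWith(textExt)) {
                continue;
            }
            // Same base name, different extension.
            String base = name.substring(0, name.length() - textExt.length());
            String keyFile = base + keyExt;
            if (names.contains(keyFile)) {
                pairs.put(name, keyFile);
            }
        }
        return pairs;
    }

    public static void main(String[] args) {
        List<String> listing = Arrays.asList("a.txt", "a.key", "b.txt", "c.key");
        // b.txt has no keyphrase file and c.key has no text file, so only a.txt is paired.
        System.out.println(pair(listing, ".txt", ".key")); // prints {a.txt=a.key}
    }
}
```

Text files that come back unpaired would give documents without keyphrase annotations, which are of no use for training.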

19.11 Ontotext JapeC Compiler

Note: the JapeC compiler does not currently support the new JAPE language features introduced in July–September 2008. If you need to use negation, the @length and @string accessors, the contextual operators within and contains, or any comparison operators other than ==, then you will need to use the standard JAPE transducer instead of JapeC.

JapeC is an alternative implementation of the JAPE language which works by compiling JAPE grammars into Java code. Compared to the standard implementation, these compiled grammars can be several times faster to run. At Ontotext, a modified version of the ANNIE sentence splitter using compiled grammars has been found to run up to five times as fast as the standard version. The compiler can be invoked manually from the command line, or used through the ‘Ontotext Japec Compiler’ PR in the Jape_Compiler plugin.

The ‘Ontotext Japec Transducer’ (com.ontotext.gate.japec.JapecTransducer) is a processing resource that is designed to be an alternative to the original JAPE Transducer. You can simply replace gate.creole.Transducer with com.ontotext.gate.japec.JapecTransducer in your GATE application and it should work as expected.

The Japec transducer takes the same parameters as the standard JAPE transducer:

- grammarURL: the URL from which the grammar is to be loaded. Note that the Japec Transducer will only work on file: URLs. Also, the alternative binaryGrammarURL parameter of the standard transducer is not supported.

- encoding: the character encoding used to load the grammars.

- ontology: the ontology used for ontology-aware transduction.

Its runtime parameters are likewise the same as those of the standard transducer:

- document: the document to process.

- inputASName: name of the AnnotationSet from which input annotations to the transducer are read.

- outputASName: name of the AnnotationSet to which output annotations from the transducer are written.

The Japec compiler itself is written in Haskell. Compiled binaries are provided for Windows, Linux (x86) and Mac OS X (PowerPC), so no Haskell interpreter is required to run Japec on these platforms. For other platforms, or if you make changes to the compiler source code, you can build the compiler yourself using the Ant build file in the Jape_Compiler plugin directory. You will need to install the latest version of the Glasgow Haskell Compiler and associated libraries. The japec compiler can then be built by running:

from the Jape_Compiler plugin directory.

19.12 Annotation Merging Plugin

If we have annotations about the same subject on the same document from different annotators, we may need to merge the annotations.

This plugin implements two approaches for annotation merging.

MajorityVoting takes a parameter numMinK and selects the annotation on which at least numMinK annotators agree. If two or more merged annotations have the same span, then the annotation with the most supporters is kept and other annotations with the same span are discarded.

MergingByAnnotatorNum selects one annotation from those annotations with the same span, which the majority of the annotators support. Note that if one annotator did not create the annotation with the particular span, we count it as one non-support of the annotation with the span. If it turns out that the majority of the annotators did not support the annotation with that span, then no annotation with the span would be put into the merged annotations.
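The two selection rules described above can be sketched in a few lines of plain Java. This is an illustration of the idea, not the plugin's implementation: the classes MergeSketch and Ann are hypothetical, an annotation is reduced to a span plus a label, and ties between equally supported annotations on the same span are resolved in favour of the first one seen, which is an assumption of this sketch.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Objects;

/** Illustration only: merging labelled spans produced by several annotators. */
final class MergeSketch {

    /** A labelled span; annotators agree when span and label are both equal. */
    static final class Ann {
        final int start;
        final int end;
        final String label;

        Ann(int start, int end, String label) {
            this.start = start;
            this.end = end;
            this.label = label;
        }

        String span() {
            return start + "-" + end;
        }

        @Override public boolean equals(Object o) {
            if (!(o instanceof Ann)) {
                return false;
            }
            Ann other = (Ann) o;
            return start == other.start && end == other.end && label.equals(other.label);
        }

        @Override public int hashCode() {
            return Objects.hash(start, end, label);
        }

        @Override public String toString() {
            return label + "[" + start + "," + end + ")";
        }
    }

    /** Keeps, per span, the best-supported annotation if it has at least minSupport votes. */
    static List<Ann> merge(List<List<Ann>> annotators, int minSupport) {
        // One vote per annotator for each distinct annotation.
        Map<Ann, Integer> votes = new LinkedHashMap<>();
        for (List<Ann> anns : annotators) {
            for (Ann a : new LinkedHashSet<>(anns)) {
                votes.merge(a, 1, Integer::sum);
            }
        }
        Map<String, Ann> bestPerSpan = new LinkedHashMap<>();
        for (Map.Entry<Ann, Integer> e : votes.entrySet()) {
            if (e.getValue() < minSupport) {
                continue;
            }
            Ann current = bestPerSpan.get(e.getKey().span());
            if (current == null || votes.get(current) < e.getValue()) {
                bestPerSpan.put(e.getKey().span(), e.getKey());
            }
        }
        return new ArrayList<>(bestPerSpan.values());
    }

    /** Rule 1: at least k annotators agree on the annotation. */
    static List<Ann> mergeAtLeast(List<List<Ann>> annotators, int k) {
        return merge(annotators, k);
    }

    /** Rule 2: more than half of all annotators agree; a missing span counts as non-support. */
    static List<Ann> mergeMajority(List<List<Ann>> annotators) {
        return merge(annotators, annotators.size() / 2 + 1);
    }

    static List<List<Ann>> sample() {
        List<List<Ann>> sets = new ArrayList<>();
        sets.add(Arrays.asList(new Ann(0, 5, "Person"), new Ann(10, 15, "Location")));
        sets.add(Arrays.asList(new Ann(0, 5, "Person"), new Ann(10, 15, "Organization")));
        sets.add(Arrays.asList(new Ann(0, 5, "Organization"), new Ann(20, 25, "Date")));
        return sets;
    }

    public static void main(String[] args) {
        System.out.println(mergeAtLeast(sample(), 2)); // prints [Person[0,5)]
        System.out.println(mergeMajority(sample()));   // prints [Person[0,5)]
    }
}
```

With a threshold of 1 every span survives, and the label with the most supporters wins on each span; with three annotators the majority rule requires two supporters, so only the Person annotation is kept.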

The annotation merging methods are available via the Annotation Merging plugin. The plugin can be used as a PR in a pipeline or corpus pipeline. To use the PR, each document in the pipeline or the corpus pipeline should have the annotation sets for merging. The annotation merging PR has no loading parameters but has several run-time parameters, explained further below.

The annotation merging methods are implemented in the GATE API, and are available in GATE Embedded as described in Section 7.18.

- annSetOutput: the annotation set in the current document for storing the merged annotations. You should not use an existing annotation set, as the contents may be deleted or overwritten.

- annSetsForMerging: the annotation sets in the document for merging. It is an optional parameter. If no value is assigned, all the annotation sets in the document except the default annotation set are merged. If specified, it is a sequence of the names of the annotation sets for merging, separated by ‘;’. For example, the value ‘a-1;a-2;a-3’ represents three annotation sets, ‘a-1’, ‘a-2’ and ‘a-3’.

- annTypeAndFeats: the annotation types in the annotation sets for merging, optionally with one annotation feature per type. It is an optional parameter. The value is a sequence of annotation type names separated by ‘;’. A single annotation feature can be specified immediately after a type’s name, separated from it by ‘->’. For example, the value ‘SENT->senRel;OPINION_OPR;OPINION_SRC->type’ specifies three annotation types, ‘SENT’, ‘OPINION_OPR’ and ‘OPINION_SRC’, with the feature ‘senRel’ for SENT and the feature ‘type’ for OPINION_SRC, and no feature for OPINION_OPR. If the annTypeAndFeats parameter is not set, all the annotation types in the annotation sets for merging are merged, and no annotation feature is specified for any type.

- keepSourceForMergedAnnotations: should source annotations be kept in the annSetsForMerging annotation sets when merged? True by default.

- mergingMethod: specifies the method used for merging. Possible values are MajorityVoting and MergingByAnnotatorNum, referring to the two merging methods described above, respectively.

- minimalAnnNum: specifies the minimal number of annotators who must agree on an annotation for it to be put into the merged set, as needed by the merging method MergingByAnnotatorNum. If the value is smaller than 1, it is taken as 1. If it is bigger than the total number of annotation sets for merging, it is taken to be the total number of annotation sets. If no value is assigned, a default value of 1 is used. Note that the parameter has no effect on the other merging method, MajorityVoting.
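The string formats and the clamping rule described in this list are easy to get wrong, so the following sketch shows one way to read them. It is a stand-alone illustration, not the PR's own parsing code; the class name MergeParams and its method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Illustration only: interpreting the merging PR's string and numeric parameters. */
final class MergeParams {

    /** Splits 'a-1;a-2;a-3' into names; null or blank yields an empty list ("not set"). */
    static List<String> parseSetNames(String value) {
        List<String> names = new ArrayList<>();
        if (value == null) {
            return names;
        }
        for (String item : value.split(";")) {
            if (!item.trim().isEmpty()) {
                names.add(item.trim());
            }
        }
        return names;
    }

    /** Parses 'SENT->senRel;OPINION_OPR' into type -> feature (null when no feature is given). */
    static Map<String, String> parseTypesAndFeatures(String value) {
        Map<String, String> types = new LinkedHashMap<>();
        for (String item : parseSetNames(value)) {
            int arrow = item.indexOf("->");
            if (arrow < 0) {
                types.put(item, null);
            } else {
                types.put(item.substring(0, arrow).trim(), item.substring(arrow + 2).trim());
            }
        }
        return types;
    }

    /** Applies the documented rule: default 1, never below 1, never above the number of sets. */
    static int clampMinimalAnnNum(Integer value, int numSets) {
        if (value == null) {
            return 1;
        }
        return Math.max(1, Math.min(value, numSets));
    }

    public static void main(String[] args) {
        System.out.println(parseSetNames("a-1;a-2;a-3"));
        System.out.println(parseTypesAndFeatures("SENT->senRel;OPINION_OPR;OPINION_SRC->type"));
        System.out.println(clampMinimalAnnNum(5, 3));
    }
}
```

A type without a feature is kept in the map with a null value, so it remains distinguishable from a type that was never listed.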

19.13 Chinese Word Segmentation

Unlike English, Chinese text does not have a symbol (or delimiter) such as a blank space to explicitly separate a word from the surrounding words. Therefore, for automatic Chinese text processing, we may need a system to recognise the words in Chinese text, a problem known as Chinese word segmentation. The plugin described in this section performs the task of Chinese word segmentation. It is based on our work using the Perceptron learning algorithm for the Chinese word segmentation task of Sighan 2005 [Li et al. 05c]. Our Perceptron-based system achieved very good performance in the Sighan-05 task.

The plugin is called Lang_Chinese and is available in the GATE distribution. The corresponding processing resource’s name is Chinese Segmenter PR. Once you load the PR into GATE, you may put it into a Pipeline application. Note that it does not process a corpus of documents, but a directory of documents provided as a parameter (see description of parameters below). The plugin can be used to learn a model from segmented Chinese text as training data. It can also use the learned model to segment Chinese text. The plugin can use different learning algorithms to learn different models. It can deal with different character encodings for Chinese text, such as UTF-8, GB2312 or BIG5. These options can be selected by setting the run-time parameters of the plugin.

The plugin has five run-time parameters, which are described in the following.

- learningAlg is a String variable which specifies the learning algorithm used for producing the model. Currently it has two values, PAUM and SVM, representing the two popular learning algorithms Perceptron and SVM, respectively. The default value is PAUM.

  Generally speaking, SVM may perform better than Perceptron, in particular for small training sets. On the other hand, Perceptron’s learning is much faster than SVM’s. Hence, if you have a small training set, you may want to use SVM to obtain a better model. However, if you have a big training set, which is typical for the Chinese word segmentation task, you may want to use Perceptron for learning, because SVM learning may take too long. In addition, with a big training set, the performance of the Perceptron model is quite similar to that of the SVM model. See [Li et al. 05c] for the experimental comparison of SVM and Perceptron on Chinese word segmentation.

- learningMode determines which of the two modes the plugin runs in: either learning a model from training data or applying a learned model to segment Chinese text. Accordingly it has two values, SEGMENTING and LEARNING. The default value is SEGMENTING, meaning segmenting the Chinese text.

  Note that you first need to learn a model and then you can use the learned model to segment the text. Several models learned from the training data used in the Sighan-05 Bakeoff are available for this plugin, which you can use to segment your Chinese text. The provided models are described below.

- modelURL specifies a URL referring to a directory containing the model. If the plugin is in the LEARNING runmode, the model learned will be put into the directory. If it is in the SEGMENTING runmode, the plugin will use the model stored in the directory to segment the text. The models learned from the Sighan-05 bakeoff training data are discussed below.

- textCode specifies the encoding of the text used. For example it can be UTF-8, BIG5, GB2312 or any other encoding for Chinese text. Note that, when you segment some Chinese text using a learned model, the Chinese text should use the same encoding as the one used by the training text for obtaining the model.

- textFilesURL specifies an URL referring to a directory containing the Chinese documents. All the documents contained in this directory (but not those documents contained in its sub-directory if there is any) will be used as input data. In the LEARNING runmode, those documents contain the segmented Chinese text as training data. In the SEGMENTING runmode, the text in those documents will be segmented. The segmented text will be stored in the corresponding documents in the sub-directory called segmented.

The following PAUM models are distributed with the plugin and are available as compressed zip files under the plugins/Lang_Chinese/resources/models directory. Please unzip them before use. These models were learned using the PAUM learning algorithm from the corpora provided by the Sighan-05 bakeoff task.

- the PAUM model learned from PKU training data, using the PAUM learning algorithm and the UTF-8 encoding, is available as model-paum-pku-utf8.zip.

- the PAUM model learned from PKU training data, using the PAUM learning algorithm and the GB2312 encoding, is available as model-paum-pku-gb.zip.

- the PAUM model learned from AS training data, using the PAUM learning algorithm and the UTF-8 encoding, is available as model-as-utf8.zip.

- the PAUM model learned from AS training data, using the PAUM learning algorithm and the BIG5 encoding, is available as model-as-big5.zip.

As you can see, those models were learned using different training data and different Chinese text encodings of the same training data. The PKU training data are news articles published in mainland China and use simplified Chinese, while the AS training data are news articles published in Taiwan and use traditional Chinese. If your text is in simplified Chinese, you can use the models trained on the PKU data. If your text is in traditional Chinese, you need to use the models trained on the AS data. If your data are in GB2312 encoding or any compatible encoding, you need to use the model trained on the corpus in GB2312 encoding.

Note that the segmented Chinese text (either used as training data or produced by this plugin) uses the blank space to separate a word from its surrounding words. Hence, if your data are in Unicode, such as UTF-8, you can use the GATE Unicode Tokeniser to process the segmented text and add Token annotations to your text to represent the Chinese words. Once you have the annotations for all the Chinese words, you can perform further processing such as POS tagging and named entity recognition.
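Because the segmented text marks word boundaries with blank spaces, recovering the words and their character offsets is straightforward. The sketch below shows the idea in plain Java; it is not the GATE Unicode Tokeniser, and the class names SegmentedTokens and Token are hypothetical. The sample string is written with Unicode escapes so that the source file stays ASCII-only.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustration only: turns space-separated segmented text into words with offsets. */
final class SegmentedTokens {

    /** A word together with its start (inclusive) and end (exclusive) character offsets. */
    static final class Token {
        final int start;
        final int end;
        final String text;

        Token(int start, int end, String text) {
            this.start = start;
            this.end = end;
            this.text = text;
        }

        @Override public String toString() {
            return text + "[" + start + "," + end + ")";
        }
    }

    /** Each maximal run of non-whitespace characters becomes one token. */
    static List<Token> tokens(String segmented) {
        List<Token> out = new ArrayList<>();
        int start = -1; // -1 means "not currently inside a word"
        for (int i = 0; i <= segmented.length(); i++) {
            boolean boundary = i == segmented.length()
                    || Character.isWhitespace(segmented.charAt(i));
            if (!boundary && start < 0) {
                start = i;
            } else if (boundary && start >= 0) {
                out.add(new Token(start, i, segmented.substring(start, i)));
                start = -1;
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Three words separated by single spaces: "I", "like", "Beijing".
        String segmented = "\u6211 \u559C\u6B22 \u5317\u4EAC";
        for (Token t : tokens(segmented)) {
            System.out.println(t.start + "-" + t.end + " length " + t.text.length());
        }
    }
}
```

The offsets refer to the segmented text, that is, they include the inserted spaces.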

19.14 Copying Annotations between Documents

Sometimes a document has two copies, each of which was annotated by a different annotator for the same task. We may want to copy the annotations in one copy to the other copy of the document. This could be in order to use fewer resources, or so that we can process them with some other plugin, such as annotation merging or IAA. The Copy_Annots_Between_Docs plugin does exactly this.

The plugin is available with the GATE distribution. When loading the plugin into GATE, it is represented as a processing resource, Copy Anns to Another Doc PR. You need to put the PR into a Corpus Pipeline to use it. The plugin does not have any initialisation parameters. It has several run-time parameters, which specify the annotations to be copied, the source documents and target documents. In detail, the run-time parameters are:

- sourceFilesURL specifies the directory containing the source documents. The source documents must be GATE XML documents. The plugin copies the annotations from these source documents to the target documents.

- inputASName specifies the name of the annotation set in the source documents. All or some of the annotations in this annotation set will be copied.

- annotationTypes specifies one or more annotation types in the annotation set inputASName which will be copied into target documents. If no value is given, the plugin will copy all annotations in the annotation set.

- outputASName specifies the name of the annotation set in the target documents, into which the annotations will be copied. If there is no such annotation set in the target documents, the annotation set will be created automatically.

The Corpus parameter of the Corpus Pipeline application containing the plugin specifies a corpus which contains the target documents. Given one (target) document in the corpus, the plugin tries to find a source document in the source directory specified by the parameter sourceFilesURL, according to the similarity of the names of the source and target documents. The similarity of two file names is calculated by comparing the two strings of names from the start to the end of the strings. Two names have greater similarity if they share more characters from the beginning of the strings. For example, suppose two target documents have the names aabcc.xml and abcab.xml and three source files have names abacc.xml, abcbb.xml and aacc.xml, respectively. Then the target document aabcc.xml has the corresponding source document aacc.xml, and abcab.xml has the corresponding source document abcbb.xml.
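The name-matching rule described above amounts to picking the source name that shares the longest common prefix with the target name. The following sketch reproduces the worked example; it is an illustration rather than the plugin's code, the class name NameMatch is hypothetical, and resolving ties in favour of the first source listed is an assumption of this sketch.

```java
import java.util.Arrays;
import java.util.List;

/** Illustration only: matches a target file name to the most similar source name. */
final class NameMatch {

    /** Number of leading characters the two names have in common. */
    static int commonPrefixLength(String a, String b) {
        int limit = Math.min(a.length(), b.length());
        int i = 0;
        while (i < limit && a.charAt(i) == b.charAt(i)) {
            i++;
        }
        return i;
    }

    /** Returns the source sharing the longest prefix with the target, or null if none given. */
    static String bestMatch(String target, List<String> sources) {
        String best = null;
        int bestLength = -1;
        for (String source : sources) {
            int length = commonPrefixLength(target, source);
            if (length > bestLength) { // strict comparison: the first of several ties wins
                best = source;
                bestLength = length;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        List<String> sources = Arrays.asList("abacc.xml", "abcbb.xml", "aacc.xml");
        System.out.println(bestMatch("aabcc.xml", sources)); // prints aacc.xml
        System.out.println(bestMatch("abcab.xml", sources)); // prints abcbb.xml
    }
}
```

Since only the shared prefix counts, giving each pair of source and target files an identical, distinctive name is the simplest way to guarantee the intended match.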

19.15 OpenCalais Plugin

OpenCalais provides a web service for semantic annotation of text. The user submits a document to the web service, which returns entity and relation annotations in RDF, JSON or some other format. Typically, users integrate OpenCalais annotation of their web pages to provide additional links and ‘semantic functionality’. OpenCalais can be found at http://www.opencalais.com

The GATE OpenCalais PR submits a GATE document to the OpenCalais web service, and adds the annotations from the OpenCalais response as GATE annotations in the GATE document. It therefore provides OpenCalais semantic annotation functionality within GATE, for use by other PRs.

The PR only supports OpenCalais entities, not relations - although this should be straightforward for a competent Java programmer to add. Each OpenCalais entity is represented in GATE as an OpenCalais annotation, with features as given in the OpenCalais documentation.

The PR can be loaded with the CREOLE plugin manager dialog, from the creole directory in the GATE distribution, gate/plugins/Tagger_OpenCalais. In order to use the PR, you will need to have an OpenCalais account, and request an OpenCalais service key. You can do this from the OpenCalais web site at http://www.opencalais.com. Provide your service key as an initialisation parameter when you create a new OpenCalais PR in GATE. OpenCalais restricts the number of requests you can make to their web service. See the OpenCalais web page for details.

Initialisation parameters are:

- openCalaisURL: the URL of the OpenCalais REST service. It should not need to be changed, unless OpenCalais moves it!

- licenseID: your OpenCalais service key. This has to be requested from OpenCalais and is specific to you.

Various runtime parameters are available from the OpenCalais API, and are named the same as in that API. See the OpenCalais documentation for further details.

19.16 LingPipe Plugin

LingPipe is a suite of Java libraries for the linguistic analysis of human language. We have provided a plugin called ‘LingPipe’ with wrappers for some of the resources available in the LingPipe library. In order to use these resources, please load the ‘LingPipe’ plugin. Currently, we have integrated the following five processing resources.

- LingPipe Tokenizer PR

- LingPipe Sentence Splitter PR

- LingPipe POS Tagger PR

- LingPipe NER PR

- LingPipe Language Identifier PR

Please note that most of the resources in the LingPipe library allow learning of new models. However, in this version of the GATE plugin for LingPipe, we have only integrated the application functionality. You will need to learn new models with LingPipe outside of GATE. We have provided some example models under the ‘resources’ folder which were downloaded from LingPipe’s website. For more information on licensing issues related to the use of these models, please refer to the licensing terms under the LingPipe plugin directory.

The LingPipe system can be loaded from the GATE GUI by simply selecting the ‘Load LingPipe System’ menu item under the ‘File’ menu. This is similar to loading the ANNIE application with default values.

19.16.1 LingPipe Tokenizer PR

As the name suggests, this PR tokenizes document text and identifies the boundaries of tokens. Each token is annotated with an annotation of type ‘Token’. Every annotation has a feature called ‘length’ that gives the length of the token in characters. There are no initialization parameters for this PR. The user needs to provide the name of the annotation set where the PR should output Token annotations.

19.16.2 LingPipe Sentence Splitter PR

As the name suggests, this PR splits document text into sentences. It identifies sentence boundaries and annotates each sentence with an annotation of type ‘Sentence’. There are no initialization parameters for this PR. The user needs to provide the name of the annotation set where the PR should output Sentence annotations.

19.16.3 LingPipe POS Tagger PR

The LingPipe POS Tagger PR is useful for tagging individual tokens with their respective part of speech tags. This PR requires a model (dataset from training the tagger on a tagged corpus), which must be provided as an initialization parameter. Several models are included in this plugin’s resources directory. Each document must already be processed with a tokenizer and a sentence splitter (any kinds in GATE, not necessarily the LingPipe ones) since this PR has Token and Sentence annotations as prerequisites. This PR adds a category feature to each token.

This PR has the following run-time parameters.

- inputASName: the name of the annotation set with Token and Sentence annotations.

- applicationMode: the POS tagger can be applied to the text in three different modes.

  - FIRSTBEST: the tagger produces one tag for each token (the one that it calculates is best) and stores it as a simple String in the category feature.

  - CONFIDENCE: the tagger produces the best five tags for each token, with confidence scores, and stores them as a Map<String, Double> in the category feature.

  - NBEST: the tagger produces the five best taggings for the whole document and then stores one to five tags for each token (with document-based scores) as a Map<String, List<Double>> in the category feature. This application mode is noticeably slower than the others.
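Since the type of the category feature depends on the application mode, downstream code that reads it has to cope with three shapes. The sketch below shows one way to reduce any of them to a single tag. It is an illustration only: the class name CategoryReader is hypothetical, and it assumes that a higher score is better and that, in NBEST mode, a tag's best score is the largest value in its list.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Illustration only: picks one tag from a category value of any of the three shapes. */
final class CategoryReader {

    /** Returns the single (FIRSTBEST) or highest-scoring (CONFIDENCE, NBEST) tag, or null. */
    static String bestTag(Object category) {
        if (category instanceof String) {
            return (String) category;              // FIRSTBEST: already a single tag
        }
        if (category instanceof Map) {
            String best = null;
            double bestScore = Double.NEGATIVE_INFINITY;
            for (Map.Entry<?, ?> entry : ((Map<?, ?>) category).entrySet()) {
                double score = score(entry.getValue());
                if (score > bestScore) {
                    bestScore = score;
                    best = String.valueOf(entry.getKey());
                }
            }
            return best;
        }
        return null;                               // feature missing or of an unknown type
    }

    /** A Double (CONFIDENCE) is used directly; a list (NBEST) contributes its maximum. */
    private static double score(Object value) {
        if (value instanceof Number) {
            return ((Number) value).doubleValue();
        }
        double max = Double.NEGATIVE_INFINITY;
        if (value instanceof List) {
            for (Object item : (List<?>) value) {
                if (item instanceof Number) {
                    max = Math.max(max, ((Number) item).doubleValue());
                }
            }
        }
        return max;
    }

    public static void main(String[] args) {
        Map<String, Double> confidence = new HashMap<>();
        confidence.put("NN", 0.7);
        confidence.put("VB", 0.2);
        System.out.println(bestTag("NN"));        // prints NN
        System.out.println(bestTag(confidence));  // prints NN
    }
}
```

In a GATE pipeline the argument would be the value of the category feature on a Token annotation; here it is passed in directly so that the example has no dependencies.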

19.16.4 LingPipe NER PR

The LingPipe NER PR is used for named entity recognition. The PR recognizes entities such as Persons, Organizations and Locations in the text. This PR requires a model, which it uses to classify text as different entity types and which must be provided at initialization time. An example model is provided under the ‘resources’ folder of this plugin. As with the other PRs, the user needs to provide the name of the annotation set where the PR should output annotations.

19.16.5 LingPipe Language Identifier PR [#]

As the name suggests, this PR is useful for identifying the language of a document or span of text. It uses a model file, which is a required initialization parameter. A model is provided in this plugin’s resources/models subdirectory and is the default value of that parameter. The PR has the following runtime parameters.

- annotationType: if this is supplied, the PR classifies the text underlying each annotation of the specified type and stores the result as a feature on that annotation. If this is left blank (null or empty), the PR classifies the text of each document and stores the result as a document feature.

- annotationSetName: the annotation set used for input and output; ignored if annotationType is blank.

- languageIdFeatureName: the name of the document or annotation feature used to store the results.

Unlike most other PRs (which produce annotations), this one adds either document features or annotation features. (To classify both whole documents and spans within them, use two instances of this PR.) Note that classification accuracy is better over long spans of text (paragraphs rather than sentences, for example). More information on the languages supported can be found in the LingPipe documentation.

19.17 OpenNLP Plugin [#]

OpenNLP provides Java-based tools for sentence detection, tokenization, POS tagging, chunking and parsing, named-entity detection, and coreference. See the OpenNLP website for details: http://opennlp.sourceforge.net/. The tools use the Maxent machine learning package. See http://maxent.sourceforge.net/ for details.

In order to use these tools as GATE processing resources, load the ‘OpenNLP’ plugin via the Plugin Management Console. Alternatively, the OpenNLP system can be loaded from the GATE GUI by simply selecting the ‘Load OpenNLP System’ menu item under the ‘File’ menu. The OpenNLP PRs will be loaded, together with a pre-configured corpus pipeline application containing these PRs. This is similar to loading the ANNIE application with default values.

We have integrated the following five processing resources:

- OpenNlpTokenizer Tokenizer PR

- OpenNlpSentenceSplit Sentence Splitter PR

- OpenNlpPOS POS Tagger PR

- OpenNlpNameFinder NER PR

- OpenNlpChunker Chunker PR

Note that in general, these PRs can be mixed with other PRs of similar types. For example, you could create a pipeline that uses the OpenNLP Tokenizer, and the ANNIE POS Tagger. You may occasionally have problems with some combinations. Notes on compatibility and PR prerequisites are given for each PR in Section 19.17.2.

Note also that some of the OpenNLP tools use quite large machine learning models, which the PRs need to load into memory. You may find that you have to give additional memory to GATE in order to use the OpenNLP PRs comfortably. See Section 2.7.5 for an example of how to do this.

Below, we describe the parameters common to all of the OpenNLP PRs. This is followed by a section which gives brief details of each PR. For more details on each, see the OpenNLP website, http://opennlp.sourceforge.net/.

19.17.1 Parameters common to all PRs [#]

Load-time Parameters [#]

All OpenNLP PRs have a ‘model’ parameter, which takes a URL. The URL should reference a valid Maxent model, or in the case of the Name Finder a directory containing a set of models for the different types of name sought. Default models can be found in the ‘models/english’ directory. In addition, the OpenNlpPOS POS Tagger PR has a ‘dictionary’ parameter, which also takes a URL, and a ‘dictionaryEncoding’ parameter giving the character encoding of the dictionary file. The default can be found in the ‘models/english’ directory.

For details of training new models (outside of the GATE framework), see Section 19.17.3.

Run-time Parameters [#]

The OpenNLP PRs have runtime parameters to specify the annotation sets they should use for input and/or output. These are detailed below in the description of each PR, but all PRs will use the default unnamed annotation set unless told otherwise.

19.17.2 OpenNLP PRs [#]

OpenNlpTokenizer - Tokenizer PR [#]

This PR adds Token annotations to the annotation set specified by the annotationSetName parameter.

This PR does not require any other PR to be run beforehand. It creates annotations of type Token, with a feature and value ‘source=openNLP’ and a string feature that takes the underlying string as its value.

OpenNlpSentenceSplit - Sentence Splitter PR [#]

This PR adds Sentence annotations to the annotation set specified by the annotationSetName parameter.

This PR does not require any other PR to be run beforehand. It creates annotations of type Sentence, with a feature and value ‘source=openNLP’. If the OpenNLP sentence splitter returns no output (i.e. fails to find any sentences), then GATE will add a single sentence annotation from offset 0 to the end of the document. This is to prevent downstream PRs that require sentence annotations from failing.
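The fallback behaviour described above can be sketched as follows. This is an illustration using only the standard library, not the plugin's actual code; the class name and the representation of a sentence as a two-element offset array are assumptions.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical illustration of the "whole document as one sentence" fallback.
final class SentenceFallback {

    /**
     * Each sentence is a {start, end} pair of character offsets.
     * If the splitter found nothing, a single span covering the document is returned.
     */
    static List<long[]> withFallback(List<long[]> detected, long documentLength) {
        if (detected != null && !detected.isEmpty()) {
            return detected;                          // splitter output is used unchanged
        }
        List<long[]> result = new ArrayList<>();
        result.add(new long[] {0L, documentLength});  // offset 0 to end of document
        return result;
    }

    public static void main(String[] args) {
        List<long[]> spans = withFallback(new ArrayList<long[]>(), 120L);
        System.out.println(spans.get(0)[0] + "-" + spans.get(0)[1]);
    }
}
```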

OpenNlpPOS - POS Tagger PR [#]

This PR adds a feature for Part Of Speech to Token annotations.

This PR requires Sentence and Token annotations to be present in the annotation set specified by its annotationSetName parameter before it will work. These Sentence and Token annotations do not have to be from another OpenNLP PR. They could, for example, be from the ANNIE PRs. This PR adds a ‘category’ feature to each Token, with the predicted Part Of Speech as value.

OpenNlpNameFinder - NER PR [#]

This PR finds standard named entities and adds an annotation for each one, with the annotation type named after the entity type.

This PR requires Sentence and Token annotations to be present in the annotation set specified by its inputASName parameter before it will work. These Sentence and Token annotations do not have to be from another OpenNLP PR. They could, for example, be from the ANNIE PRs. You may find, however, that not all pairings of Tokenizer and Sentence Splitter will work successfully.

The Token annotations do not need to have a ‘category’ feature. In other words, you do not need to run a POS Tagger before using this PR.

This PR creates annotations for each named entity in the annotation set specified by the outputASName parameter, with a feature and value ‘source=openNLP’. It also adds a feature of ‘type’ to each annotation, with the same values as the annotation type. Types include:

- person

- organization

- location

- date

- time

- percentage

For full details of all types, see the OpenNLP website, http://opennlp.sourceforge.net/.

OpenNlpChunker - Chunker PR [#]

This PR finds noun, verb, and other chunks, recording each token’s position within its chunk as a feature on the Token annotation.

This PR requires Sentence and Token annotations to be present in the annotation set specified by the annotationSetName parameter before it will work. The Token annotations need to have a ‘category’ feature. In other words, you also need to run a POS Tagger before using this PR. The Sentence and Token annotations (and ‘category’ POS tag features) do not have to be from another OpenNLP PR. They could, for example, be from the ANNIE PRs.

This PR adds a ‘chunk’ feature to each token. The value of this feature defines whether the token is at the beginning of a chunk, inside a chunk, or outside a chunk, using the standard BIO model. Example values and their interpretations are:

- B-NP: Token is the first (beginning) token of a Noun Phrase

- I-NP: Token is inside a Noun Phrase

- B-VP: Token is the first (beginning) token of a Verb Phrase

- I-VP: Token is inside a Verb Phrase

- O: Token is outside any phrase

- B-PP: Token is the first (beginning) token of a Prepositional Phrase

- B-ADVP: Token is the first (beginning) token of an Adverbial Phrase

For full details of all chunk values, see the OpenNLP website, http://opennlp.sourceforge.net/.
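Since the chunker records only a per-token BIO value, code that needs whole phrases has to group consecutive tokens itself. The following is a minimal sketch of that grouping over a plain list of ‘chunk’ values in token order. The class names are hypothetical, and treating a stray I- tag (one with no matching open chunk) as the start of a new chunk is a lenient design choice made here, not documented plugin behaviour.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: groups per-token BIO values into phrase spans.
final class BioChunkDecoder {

    /** A phrase covering tokens startToken (inclusive) to endToken (exclusive). */
    static final class Chunk {
        final String type;
        final int startToken;
        final int endToken;

        Chunk(String type, int startToken, int endToken) {
            this.type = type;
            this.startToken = startToken;
            this.endToken = endToken;
        }

        @Override
        public String toString() {
            return type + "[" + startToken + "," + endToken + ")";
        }
    }

    static List<Chunk> decode(List<String> chunkTags) {
        List<Chunk> chunks = new ArrayList<>();
        String openType = null;   // phrase type of the chunk currently being built
        int openStart = -1;
        for (int i = 0; i < chunkTags.size(); i++) {
            String tag = chunkTags.get(i);
            boolean begins = tag.startsWith("B-");
            boolean continues = tag.startsWith("I-") && tag.substring(2).equals(openType);
            if (openType != null && !continues) {
                chunks.add(new Chunk(openType, openStart, i));   // close the open chunk
                openType = null;
            }
            if (begins || (tag.startsWith("I-") && openType == null)) {
                openType = tag.substring(2);                     // open a new chunk
                openStart = i;
            }
        }
        if (openType != null) {
            chunks.add(new Chunk(openType, openStart, chunkTags.size()));
        }
        return chunks;
    }

    public static void main(String[] args) {
        List<String> tags = Arrays.asList("B-NP", "I-NP", "B-VP", "O", "B-PP", "B-NP", "I-NP");
        System.out.println(decode(tags));
        // prints [NP[0,2), VP[2,3), PP[4,5), NP[5,7)]
    }
}
```

The token indices in each span can then be mapped back to the start offset of the first Token and the end offset of the last one to create phrase-level annotations.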

19.17.3 Training new models [#]

Within the OpenNLP framework, new models can be trained for each of the tools. By default, the GATE PRs use the standard Maxent models for English which can be found in the plugin’s ‘models/english’ directory. The models are copies of those in the ‘models’ module in the OpenNLP CVS repository at http://opennlp.cvs.sourceforge.net. If you need to train a different model for an OpenNLP PR, you will have to do this outside of GATE, and then use the file URL of your new model as a value for the ‘model’ parameter of the PR. For details on how to train models, see the Maxent website http://maxent.sourceforge.net/.
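Turning a local model file into the file URL expected by the ‘model’ parameter needs only the standard library. The sketch below shows one way to do it; the class name and the path `models/custom/my-tagger.bin` are hypothetical and stand in for wherever your own trained model is stored.

```java
import java.net.MalformedURLException;
import java.net.URL;
import java.nio.file.Paths;

// Hypothetical helper: converts a local path into a file: URL.
final class ModelUrl {

    static URL toFileUrl(String localPath) {
        try {
            // Resolve against the working directory so that relative paths also work.
            return Paths.get(localPath).toAbsolutePath().normalize().toUri().toURL();
        } catch (MalformedURLException e) {
            throw new IllegalArgumentException("Not a usable model path: " + localPath, e);
        }
    }

    public static void main(String[] args) {
        // Placeholder path; substitute the location of your own model file.
        System.out.println(toFileUrl("models/custom/my-tagger.bin"));
    }
}
```

The resulting URL can be typed into the parameter dialog in the GATE GUI or supplied as the ‘model’ value when the PR is created programmatically.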

19.18 Tagger_MetaMap Plugin [#]

MetaMap, from the National Library of Medicine (NLM), maps biomedical text to the UMLS Metathesaurus and allows Metathesaurus concepts to be discovered in a text corpus.

The Tagger_MetaMap plugin for GATE wraps the MetaMap Java API client to allow GATE to communicate with a remote (or local) MetaMap PrologBeans mmserver and MetaMap distribution. This allows the content of specified annotations (or the entire document content) to be processed by MetaMap and the results converted to GATE annotations and features.

To use this plugin, you will need access to a remote MetaMap server, or you can install one locally by downloading and installing the complete MetaMap distribution and the Java PrologBeans mmserver:

http://metamap.nlm.nih.gov/README_javaapi.html

19.18.1 Parameters

Initialisation parameters

- excludeSemanticTypes: list of MetaMap semantic types that should be excluded from the final output annotation set. Useful for reducing the number of generic annotations output (e.g. for qualitative and temporal concepts).

- restrictSemanticTypes: list of MetaMap semantic types that should be the only types from which output annotations are created. E.g. if bpoc (Body Part Organ or Organ Component) is specified here, only MetaMap matches of these concepts will result in GATE annotations being created for these matches, and no others. Overrides the excludeSemanticTypes parameter.

See http://semanticnetwork.nlm.nih.gov/ for a list of UMLS semantic types used by MetaMap.

- mmServerHost: name or IP address of the server on which the MetaMap terminology server (skrmedpostctl), disambiguation server (wsdserverctl) and API PrologServer (mmserver09) are running. Default is localhost.

NB: all three need to be on the same server, unless you wish to recompile mmserver09 from source and add functionality to point to different terminology and word-sense disambiguation server locations.

- mmServerPort: port number of the mmserver09 PrologServer running on mmServerHost. Default is 8066

- mmServerTimeout: milliseconds to wait for PrologServer. Default is 150000

- outputASType: output annotation name to be used for all MetaMap annotations
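The interaction between restrictSemanticTypes and excludeSemanticTypes described above can be sketched as a simple predicate. This is an illustration of the documented precedence only, not the plugin's code: the class and method names are hypothetical, and a match is assumed to carry a single semantic type for simplicity.

```java
import java.util.Collections;
import java.util.Set;

// Hypothetical illustration of how the two semantic-type lists combine.
final class SemanticTypeFilter {

    /** Returns true if a match with the given semantic type should be output. */
    static boolean accept(String semanticType, Set<String> restrict, Set<String> exclude) {
        if (restrict != null && !restrict.isEmpty()) {
            // A non-empty restrict list wins; the exclude list is not consulted.
            return restrict.contains(semanticType);
        }
        return exclude == null || !exclude.contains(semanticType);
    }

    public static void main(String[] args) {
        Set<String> restrict = Collections.singleton("bpoc");
        Set<String> exclude = Collections.singleton("bpoc");
        System.out.println(accept("bpoc", restrict, exclude));                          // true
        System.out.println(accept("neop", restrict, exclude));                          // false
        System.out.println(accept("bpoc", Collections.<String>emptySet(), exclude));    // false
    }
}
```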

Run-time parameters

- annotatePhrases: set to true to output MetaMap phrase-level annotations (generally noun-phrase chunks). Only phrases containing a MetaMap mapping will be annotated. Can be useful for post-coordination of phrase-level terms that do not exist in a pre-coordinated form in UMLS.

- inputASName: input Annotation Set name. Use in conjunction with inputASTypes (see below). Unless specified, the entire document content will be sent to MetaMap.

- inputASTypes: only send the content of the named annotations listed here, within inputASName, to MetaMap. Unless specified, the entire document content will be sent to MetaMap.

- metaMapOptions: set parameter-less MetaMap options here. Default is -Xt (truncate Candidates mappings and do not use full text parsing). See http://metamap.nlm.nih.gov/README_javaapi.html for more details. NB: only set the -y parameter (word-sense disambiguation) if wsdserverctl is running. Running the Java MetaMap API with a large corpus with word-sense disambiguation can cause a ‘too many open files’ error; this appears to be a PrologServer bug.

- outputASName: output Annotation Set name.

- outputMode: determines which mappings are output as annotations in the GATE document:

  - MappingsOnly: only annotate the final MetaMap Mappings. This will result in fewer annotations with higher precision (e.g. for ‘lung cancer’ only the complete phrase will be annotated as neop).

  - CandidatesOnly: annotate only Candidate mappings and not the final Mappings. This will result in more annotations with less precision (e.g. for ‘lung cancer’ both ‘lung’ (bpoc) and ‘lung cancer’ (neop) will be annotated).

  - CandidatesAndMappings: annotate both Candidate and final mappings. This will usually result in multiple, overlapping annotations for each term/phrase.

- scoreThreshold: set from 0 to 1000. The lower the threshold, the greater the number of annotations but the lower the precision. Default is 500.

- useNegEx: set this to true to add NegEx features to annotations (NegExType and NegExTrigger). See http://www.dbmi.pitt.edu/chapman/NegEx.html for more information on NegEx
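To make the combined effect of outputMode and scoreThreshold concrete, the sketch below filters a small list of matches for the ‘lung cancer’ example. It is an illustration only: the class, the `Match` type and the `Kind` enum are hypothetical, the scores are invented, and keeping a match when its score is greater than or equal to the threshold is an assumption about where the boundary lies.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical illustration of outputMode and scoreThreshold filtering.
final class MetaMapOutputFilter {

    enum OutputMode { MappingsOnly, CandidatesOnly, CandidatesAndMappings }

    enum Kind { CANDIDATE, MAPPING }

    static final class Match {
        final String text;
        final Kind kind;
        final int score;   // assumed to lie in the range 0 to 1000

        Match(String text, Kind kind, int score) {
            this.text = text;
            this.kind = kind;
            this.score = score;
        }
    }

    static List<Match> select(List<Match> matches, OutputMode mode, int scoreThreshold) {
        List<Match> kept = new ArrayList<>();
        for (Match m : matches) {
            boolean modeOk = mode == OutputMode.CandidatesAndMappings
                    || (mode == OutputMode.MappingsOnly && m.kind == Kind.MAPPING)
                    || (mode == OutputMode.CandidatesOnly && m.kind == Kind.CANDIDATE);
            if (modeOk && m.score >= scoreThreshold) {   // assumption: boundary is inclusive
                kept.add(m);
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        List<Match> matches = Arrays.asList(
                new Match("lung", Kind.CANDIDATE, 700),          // invented scores
                new Match("lung cancer", Kind.CANDIDATE, 1000),
                new Match("lung cancer", Kind.MAPPING, 1000));
        for (OutputMode mode : OutputMode.values()) {
            System.out.println(mode + ": " + select(matches, mode, 500).size());
        }
    }
}
```

With these invented scores, raising the threshold to 800 in CandidatesOnly mode would drop ‘lung’ and keep only ‘lung cancer’, which mirrors the precision trade-off described for scoreThreshold.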

19.19 Inter Annotator Agreement